Uploaded README

- README_HF_PLM_1.3B.md +112 -0

- asset/plm_benchmark_en.png +0 -0

- asset/plm_benchmark_ko.png +0 -0

README_HF_PLM_1.3B.md

ADDED


@@ -0,0 +1,112 @@


---
language:
- en
- ko
pipeline_tag: text-generation
inference: false
tags:
- pytorch
- llama
- causal-lm
- 42dot-llm
license: cc-by-nc-4.0
---

# 42dot-PLM 1.3B

**42dot-PLM** is a pre-trained language model (PLM) developed by [**42dot**](https://42dot.ai/) and trained on Korean and English text corpora. This repository contains a 1.3B-parameter version of the model.

## Model Description

### Hyperparameters
42dot-PLM is built on a Transformer decoder architecture similar to [LLaMA 2](https://ai.meta.com/research/publications/llama-2-open-foundation-and-fine-tuned-chat-models/), and its hyperparameters are listed below.

| Params | Layers | Attention heads | Hidden size | FFN size |
| -- | -- | -- | -- | -- |
| 1.3B | 24 | 32 | 2,048 | 5,632 |
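
As a sanity check on the table above, the parameter count can be roughly reconstructed from the listed sizes. This is a minimal sketch, not the authors' accounting: it assumes a LLaMA-style block (four attention projections, a gated FFN with three projection matrices, no bias terms, two RMSNorm weight vectors per layer) and a vocabulary of exactly 50,000 entries. The function name `count_params` and the `tie_embeddings` switch are hypothetical.

```python
# Rough parameter count for a LLaMA-style decoder built from the table above.
# Assumptions (not stated in the README): gated FFN with three projections,
# no bias terms, RMSNorm weights only, and a 50,000-entry vocabulary.

def count_params(layers, hidden, ffn, vocab, tie_embeddings):
    attention = 4 * hidden * hidden      # Q, K, V and output projections
    feed_forward = 3 * hidden * ffn      # gate, up and down projections
    norms = 2 * hidden                   # two RMSNorm weight vectors per layer
    per_layer = attention + feed_forward + norms
    embeddings = vocab * hidden          # token embedding matrix
    lm_head = 0 if tie_embeddings else vocab * hidden
    final_norm = hidden                  # RMSNorm before the output head
    return layers * per_layer + embeddings + lm_head + final_norm

tied = count_params(24, 2048, 5632, 50_000, tie_embeddings=True)
untied = count_params(24, 2048, 5632, 50_000, tie_embeddings=False)
print(f"tied embeddings:   {tied / 1e9:.2f}B")
print(f"untied embeddings: {untied / 1e9:.2f}B")
```

Under these assumptions the total falls between roughly 1.3B and 1.45B parameters, depending on whether the input and output embeddings share weights, which is consistent with the "1.3B" label. The 32 attention heads do not change the count; they only imply a per-head dimension of 2,048 / 32 = 64.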

### Pre-training

Pre-training took 6 days on 256 NVIDIA A100 GPUs. Related settings are listed below.

| Params | Global batch size\* | Initial learning rate | Train iter.\* | Max length\* | Weight decay |
| -- | -- | -- | -- | -- | -- |
| 1.3B | 4.0M | 4E-4 | 1.0T | 2K | 0.1 |

(\* unit: tokens)
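
The settings above imply a few derived quantities that are easy to check by hand. The following sketch is back-of-the-envelope arithmetic only; it assumes that "M", "T" and "K" are decimal round numbers (the true values may be powers of two) and that all 256 GPUs were busy for the full 6 days.

```python
# Back-of-the-envelope figures derived from the pre-training table.
# Assumption: 4.0M = 4.0e6, 1.0T = 1.0e12 and 2K = 2,000 tokens.

global_batch_tokens = 4.0e6
total_tokens = 1.0e12
max_length = 2_000
num_gpus = 256
days = 6

optimizer_steps = total_tokens / global_batch_tokens
sequences_per_batch = global_batch_tokens / max_length
gpu_seconds = num_gpus * days * 24 * 3600
tokens_per_gpu_per_second = total_tokens / gpu_seconds

print(f"optimizer steps:       {optimizer_steps:,.0f}")
print(f"sequences per batch:   {sequences_per_batch:,.0f}")
print(f"tokens / GPU / second: {tokens_per_gpu_per_second:,.0f}")
```

With these readings, training corresponds to about 250,000 optimizer steps of about 2,000 full-length sequences each, at an average of roughly 7,500 tokens per GPU per second.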

### Pre-training datasets
We used a set of publicly available text corpora, including:
- Korean: [Jikji project](http://jikji.duckdns.org/), [mC4-ko](https://huggingface.co/datasets/mc4), [LBox Open](https://github.com/lbox-kr/lbox-open), [KLUE](https://huggingface.co/datasets/klue), [Wikipedia (Korean)](https://ko.wikipedia.org/), and others.
- English: [The Pile](https://github.com/EleutherAI/the-pile), [RedPajama](https://github.com/togethercomputer/RedPajama-Data), [C4](https://huggingface.co/datasets/c4), and others.

### Tokenizer
The tokenizer is based on the byte-level BPE algorithm. We trained its vocabulary from scratch on a subset of the pre-training corpus. To construct the subset, we sampled 10M documents each from the Korean and English corpora. The resulting vocabulary size is about 50K.
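
To illustrate the idea behind byte-level BPE, here is a toy, pure-Python sketch. It is not the tokenizer shipped with the model: it skips the pre-tokenization and byte-to-character mapping that production implementations use, and the function names and the two-document corpus are hypothetical. It only shows the core loop, which starts from UTF-8 bytes and repeatedly merges the most frequent adjacent pair.

```python
from collections import Counter

def apply_merge(tokens, pair):
    """Replace every occurrence of `pair` with its concatenation."""
    merged, i = [], 0
    while i < len(tokens):
        if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) == pair:
            merged.append(tokens[i] + tokens[i + 1])
            i += 2
        else:
            merged.append(tokens[i])
            i += 1
    return merged

def train_byte_level_bpe(texts, num_merges):
    """Learn up to `num_merges` merges over UTF-8 bytes."""
    # Every document starts as a sequence of single-byte tokens.
    corpus = [[bytes([b]) for b in text.encode("utf-8")] for text in texts]
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for tokens in corpus:
            pairs.update(zip(tokens, tokens[1:]))
        if not pairs:
            break  # nothing left to merge
        best = max(pairs, key=pairs.get)  # most frequent adjacent pair
        merges.append(best)
        corpus = [apply_merge(tokens, best) for tokens in corpus]
    return merges

def encode(text, merges):
    """Tokenize `text` by replaying the merges in the order they were learned."""
    tokens = [bytes([b]) for b in text.encode("utf-8")]
    for pair in merges:
        tokens = apply_merge(tokens, pair)
    return tokens

sample_docs = ["안녕하세요 hello", "안녕 hello world"]  # hypothetical toy corpus
merges = train_byte_level_bpe(sample_docs, num_merges=20)
tokens = encode("안녕 hello", merges)
print(len("안녕 hello".encode("utf-8")), "bytes ->", len(tokens), "tokens")
```

Because the base alphabet is the 256 possible byte values, any Korean or English string can be encoded without an unknown-token fallback, and concatenating the tokens always reproduces the original bytes.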

|

| 44 |

+

|

| 45 |

+

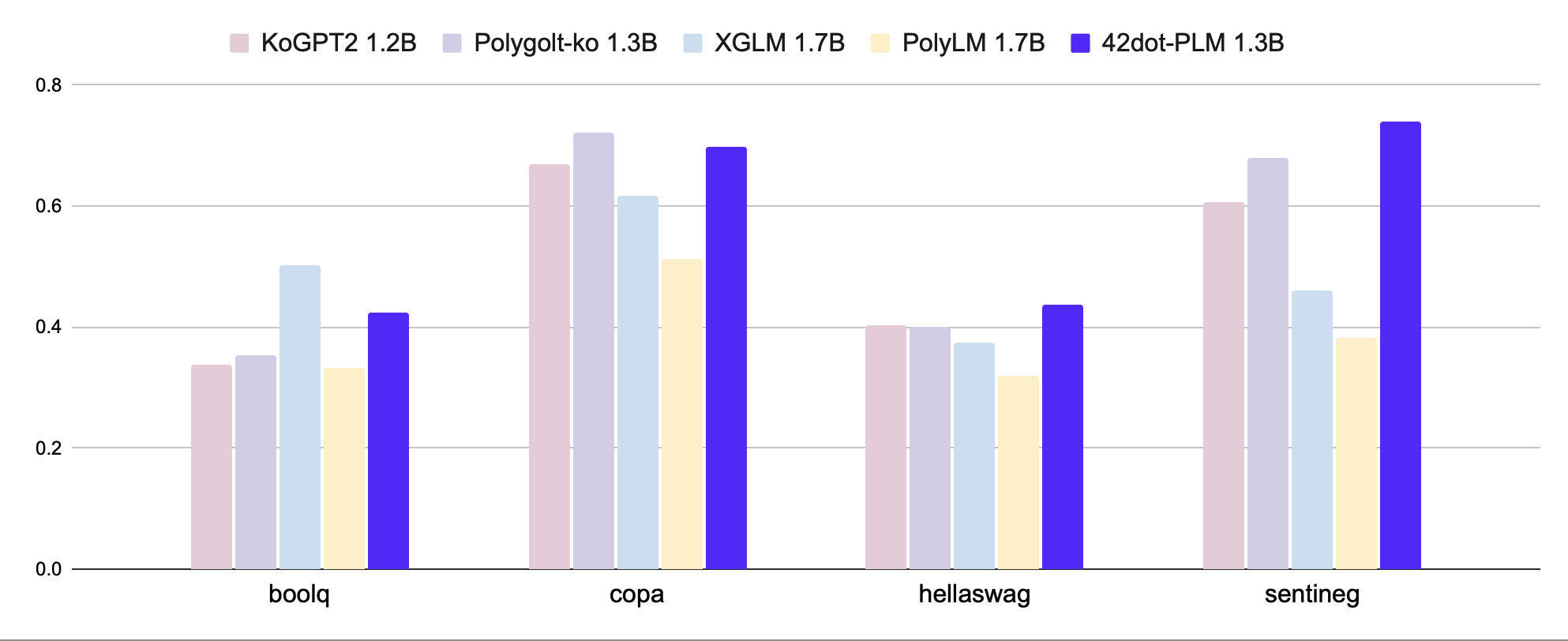

### Zero-shot evaluations

|

| 46 |

+

We evaluate 42dot-PLM on a variety of academic benchmarks both on Korean and English. All the results are obtained using [lm-eval-harness](https://github.com/EleutherAI/lm-evaluation-harness/tree/polyglot) and models released on the Hugging Face Hub.

#### Korean (KOBEST)

<figure align="center">
<img src="asset/plm_benchmark_ko.png" width="90%" height="90%"/>
</figure>

| Tasks / Macro-F1 | [KoGPT2](https://github.com/SKT-AI/KoGPT2) <br>1.2B | [Polyglot-Ko](https://github.com/EleutherAI/polyglot) <br>1.3B | [XGLM](https://huggingface.co/facebook/xglm-1.7B) <br>1.7B | [PolyLM](https://huggingface.co/DAMO-NLP-MT/polylm-1.7b) <br>1.7B | 42dot-PLM <br>1.3B ko-en |
| -- | -- | -- | -- | -- | -- |
| boolq | 0.337 | 0.355 | **0.502** | 0.334 | 0.424 |
| copa | 0.67 | **0.721** | 0.616 | 0.513 | 0.698 |
| hellaswag | 0.404 | 0.401 | 0.374 | 0.321 | **0.438** |
| sentineg | 0.606 | 0.679 | 0.46 | 0.382 | **0.74** |
| **average** | 0.504 | 0.539 | 0.488 | 0.388 | **0.575** |
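
The "average" row is the unweighted mean of the four task scores. A short sketch that recomputes it from the values in the table above (the dictionary layout is just for illustration):

```python
# Macro-F1 scores copied from the KOBEST table, in the order
# boolq, copa, hellaswag, sentineg.
kobest = {
    "KoGPT2 1.2B":      [0.337, 0.67, 0.404, 0.606],
    "Polyglot-Ko 1.3B": [0.355, 0.721, 0.401, 0.679],
    "XGLM 1.7B":        [0.502, 0.616, 0.374, 0.46],
    "PolyLM 1.7B":      [0.334, 0.513, 0.321, 0.382],
    "42dot-PLM 1.3B":   [0.424, 0.698, 0.438, 0.74],
}

# Unweighted mean over the four tasks for each model.
averages = {model: sum(scores) / len(scores) for model, scores in kobest.items()}
best = max(averages, key=averages.get)

for model, avg in averages.items():
    print(f"{model:<18} {avg:.4f}")
print("highest average:", best)
```

The recomputed means agree with the reported row to three decimal places, with 42dot-PLM highest on average even though it leads on only two of the four tasks.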

#### English

<figure align="center">
<img src="asset/plm_benchmark_en.png" width="90%" height="90%"/>
</figure>

| Tasks / Metric | [MPT](https://huggingface.co/mosaicml/mpt-1b-redpajama-200b) <br>1B | [OPT](https://huggingface.co/facebook/opt-1.3b) <br>1.3B | XGLM <br>1.7B | PolyLM <br>1.7B | 42dot-PLM <br>1.3B ko-en |
| ---------------------- | ------ | -------- | --------- | ----------- | ------------------------ |
| anli_r1/acc | 0.309 | **0.341** | 0.334 | 0.336 | 0.303 |
| anli_r2/acc | 0.334 | **0.339** | 0.331 | 0.314 | 0.337 |
| anli_r3/acc | 0.33 | 0.336 | 0.333 | **0.339** | 0.328 |
| arc_challenge/acc | 0.268 | 0.234 | 0.21 | 0.198 | **0.287** |
| arc_challenge/acc_norm | 0.291 | 0.295 | 0.243 | 0.256 | **0.319** |
| arc_easy/acc | 0.608 | 0.571 | 0.537 | 0.461 | **0.617** |
| arc_easy/acc_norm | **0.555** | 0.51 | 0.479 | 0.404 | 0.548 |
| boolq/acc | 0.517 | 0.578 | 0.585 | 0.617 | **0.62** |
| hellaswag/acc | **0.415** | **0.415** | 0.362 | 0.322 | 0.412 |
| hellaswag/acc_norm | 0.532 | **0.537** | 0.458 | 0.372 | 0.527 |
| openbookqa/acc | **0.238** | 0.234 | 0.17 | 0.166 | 0.212 |
| openbookqa/acc_norm | **0.334** | **0.334** | 0.298 | **0.334** | 0.328 |
| piqa/acc | 0.714 | **0.718** | 0.697 | 0.667 | 0.711 |
| piqa/acc_norm | 0.72 | **0.724** | 0.703 | 0.649 | 0.721 |
| record/f1 | 0.84 | **0.857** | 0.775 | 0.681 | 0.841 |
| record/em | 0.832 | **0.849** | 0.769 | 0.674 | 0.834 |
| rte/acc | 0.541 | 0.523 | **0.559** | 0.513 | 0.524 |
| truthfulqa_mc/mc1 | 0.224 | 0.237 | 0.215 | **0.251** | 0.247 |
| truthfulqa_mc/mc2 | 0.387 | 0.386 | 0.373 | **0.428** | 0.392 |
| wic/acc | 0.498 | **0.509** | 0.503 | 0.5 | 0.502 |
| winogrande/acc | 0.574 | **0.595** | 0.55 | 0.519 | 0.579 |
| **average** | 0.479 | 0.482 | 0.452 | 0.429 | **0.485** |

## Limitations and Ethical Considerations
42dot-PLM shares a number of well-known limitations with other large language models (LLMs). For example, it may generate false or misleading content because it is subject to [hallucination](https://en.wikipedia.org/wiki/Hallucination_(artificial_intelligence)). In addition, it may generate toxic, harmful, or biased content because its training corpus is drawn from the web. We strongly recommend that users of 42dot-PLM be aware of these limitations and take the necessary steps to mitigate them.

## Disclaimer
The content generated by the 42dot LLM series ("42dot LLMs") does not necessarily reflect the views or opinions of 42dot Inc. ("42dot"). 42dot disclaims any and all liability to any party for any direct, indirect, implied, punitive, special, incidental, or other consequential damages arising from any use of the 42dot LLMs and their generated content.

## License
42dot-PLM is licensed under the Creative Commons Attribution-NonCommercial 4.0 (CC BY-NC 4.0) license.

## Citation

```
@misc{42dot2023lm,
      title={42dot LM: Instruction Tuned Large Language Model of 42dot},
      author={Woo-Jong Ryu and Sang-Kil Park and Jinwoo Park and Sungmin Lee and Yongkeun Hwang},
      year={2023},
      url = {https://gitlab.42dot.ai/NLP/hyperai/ChatBaker},
      version = {pre-release},
}
```


asset/plm_benchmark_en.png

ADDED


asset/plm_benchmark_ko.png

ADDED
