Uploaded Fine-Tuned SD Model on Cartoon Image Dataset

- README.md +66 -0

- checkpoint-10000/optimizer.bin +3 -0

- checkpoint-10000/random_states_0.pkl +3 -0

- checkpoint-10000/scaler.pt +3 -0

- checkpoint-10000/scheduler.bin +3 -0

- checkpoint-10000/unet/config.json +73 -0

- checkpoint-10000/unet/diffusion_pytorch_model.safetensors +3 -0

- checkpoint-10000/unet_ema/config.json +80 -0

- checkpoint-10000/unet_ema/diffusion_pytorch_model.safetensors +3 -0

- checkpoint-20000/optimizer.bin +3 -0

- checkpoint-20000/random_states_0.pkl +3 -0

- checkpoint-20000/scaler.pt +3 -0

- checkpoint-20000/scheduler.bin +3 -0

- checkpoint-20000/unet/config.json +73 -0

- checkpoint-20000/unet/diffusion_pytorch_model.safetensors +3 -0

- checkpoint-20000/unet_ema/config.json +80 -0

- checkpoint-20000/unet_ema/diffusion_pytorch_model.safetensors +3 -0

- checkpoint-30000/optimizer.bin +3 -0

- checkpoint-30000/random_states_0.pkl +3 -0

- checkpoint-30000/scaler.pt +3 -0

- checkpoint-30000/scheduler.bin +3 -0

- checkpoint-30000/unet/config.json +73 -0

- checkpoint-30000/unet/diffusion_pytorch_model.safetensors +3 -0

- checkpoint-30000/unet_ema/config.json +80 -0

- checkpoint-30000/unet_ema/diffusion_pytorch_model.safetensors +3 -0

- checkpoint-40000/optimizer.bin +3 -0

- checkpoint-40000/random_states_0.pkl +3 -0

- checkpoint-40000/scaler.pt +3 -0

- checkpoint-40000/scheduler.bin +3 -0

- checkpoint-40000/unet/config.json +73 -0

- checkpoint-40000/unet/diffusion_pytorch_model.safetensors +3 -0

- checkpoint-40000/unet_ema/config.json +80 -0

- checkpoint-40000/unet_ema/diffusion_pytorch_model.safetensors +3 -0

- feature_extractor/preprocessor_config.json +27 -0

- model_index.json +38 -0

- scheduler/scheduler_config.json +20 -0

- text_encoder/config.json +25 -0

- text_encoder/model.safetensors +3 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +24 -0

- tokenizer/tokenizer_config.json +39 -0

- tokenizer/vocab.json +0 -0

- unet/config.json +73 -0

- unet/diffusion_pytorch_model.safetensors +3 -0

- vae/config.json +38 -0

- vae/diffusion_pytorch_model.safetensors +3 -0

- val_imgs_grid.png +0 -0

README.md

ADDED

|

@@ -0,0 +1,66 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

base_model: stabilityai/stable-diffusion-2-1

|

| 3 |

+

library_name: diffusers

|

| 4 |

+

license: creativeml-openrail-m

|

| 5 |

+

inference: true

|

| 6 |

+

tags:

|

| 7 |

+

- stable-diffusion

|

| 8 |

+

- stable-diffusion-diffusers

|

| 9 |

+

- text-to-image

|

| 10 |

+

- diffusers

|

| 11 |

+

- diffusers-training

|

| 12 |

+

---

|

| 13 |

+

|

| 14 |

+

<!-- This model card has been generated automatically according to the information the training script had access to. You

|

| 15 |

+

should probably proofread and complete it, then remove this comment. -->

|

| 16 |

+

|

| 17 |

+

|

| 18 |

+

# Text-to-image finetuning - CasperLD/cartoon_generation_sd_v1

|

| 19 |

+

|

| 20 |

+

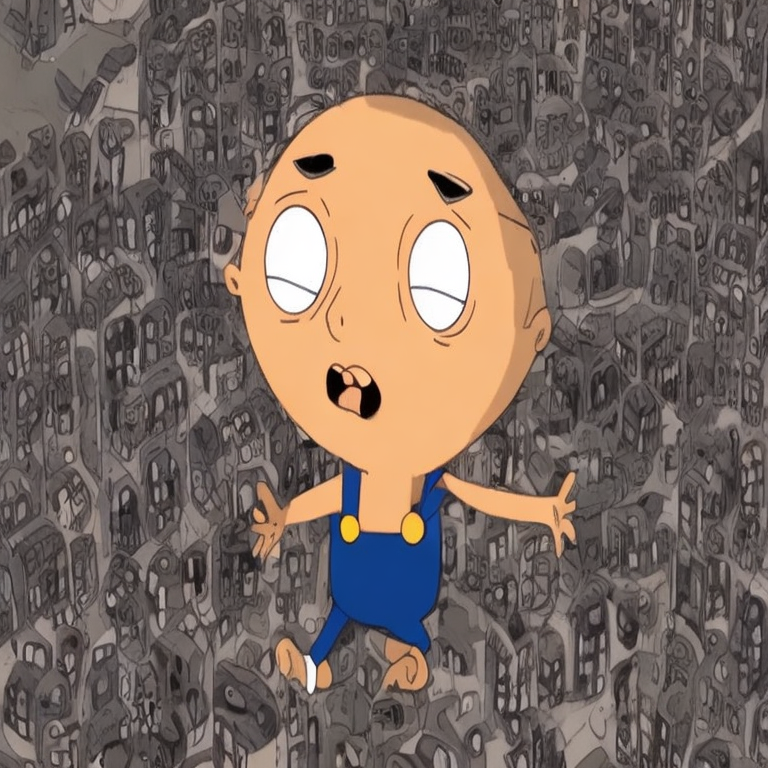

This pipeline was finetuned from **stabilityai/stable-diffusion-2-1** on the **CasperLD/cartoons_with_blip_captions_512_max_3000_at_fg_s_sp** dataset. Below are some example images generated with the finetuned pipeline using the following prompts: ['cartoon character with big eyes']:

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

## Pipeline usage

|

| 26 |

+

|

| 27 |

+

You can use the pipeline like so:

|

| 28 |

+

|

| 29 |

+

```python

|

| 30 |

+

from diffusers import DiffusionPipeline

|

| 31 |

+

import torch

|

| 32 |

+

|

| 33 |

+

pipeline = DiffusionPipeline.from_pretrained("CasperLD/cartoon_generation_sd_v1", torch_dtype=torch.float16)

|

| 34 |

+

prompt = "cartoon character with big eyes"

|

| 35 |

+

image = pipeline(prompt).images[0]

|

| 36 |

+

image.save("my_image.png")

|

| 37 |

+

```

|

| 38 |

+

|

| 39 |

+

## Training info

|

| 40 |

+

|

| 41 |

+

These are the key hyperparameters used during training:

|

| 42 |

+

|

| 43 |

+

* Epochs: 25

|

| 44 |

+

* Learning rate: 1e-05

|

| 45 |

+

* Batch size: 4

|

| 46 |

+

* Gradient accumulation steps: 1

|

| 47 |

+

* Image resolution: 512

|

| 48 |

+

* Mixed-precision: fp16

|

| 49 |

+

|

| 50 |

+

|

| 51 |

+

|

| 52 |

+

## Intended uses & limitations

|

| 53 |

+

|

| 54 |

+

#### How to use

|

| 55 |

+

|

| 56 |

+

```python

|

| 57 |

+

# TODO: add an example code snippet for running this diffusion pipeline

|

| 58 |

+

```

|

| 59 |

+

|

| 60 |

+

#### Limitations and bias

|

| 61 |

+

|

| 62 |

+

[TODO: provide examples of latent issues and potential remediations]

|

| 63 |

+

|

| 64 |

+

## Training details

|

| 65 |

+

|

| 66 |

+

[TODO: describe the data used to train the model]

|
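The batch size and gradient accumulation values in the README's training info can be combined with the checkpoint step numbers in this commit to estimate how many training samples had been processed at each checkpoint. The following is a minimal sketch, assuming that the number in each `checkpoint-N` directory name is the optimizer step count and that training ran in a single process; the commit does not state either of these explicitly, and the helper name is hypothetical.

```python
# Values copied from the README's "Training info" list.
BATCH_SIZE = 4
GRADIENT_ACCUMULATION_STEPS = 1

# Assumption (not stated in the commit): training used one process/device.
ASSUMED_NUM_PROCESSES = 1

# Step numbers taken from the checkpoint directory names in this commit.
CHECKPOINT_STEPS = (10_000, 20_000, 30_000, 40_000)


def samples_seen(optimizer_step: int) -> int:
    """Samples processed after `optimizer_step` optimizer updates."""
    effective_batch = BATCH_SIZE * GRADIENT_ACCUMULATION_STEPS * ASSUMED_NUM_PROCESSES
    return optimizer_step * effective_batch


for step in CHECKPOINT_STEPS:
    print(f"checkpoint-{step}: {samples_seen(step):,} samples")
```

Under these assumptions the effective batch size is 4, so the sample count is simply four times the step number.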

checkpoint-10000/optimizer.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:2116868b67fcab17eca980bebf68241d600a187106efb49f4f12f75bdd5e688e
size 6927867604
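The three lines added for `optimizer.bin` are a Git LFS pointer rather than the binary itself: they record the spec version, the SHA-256 of the real file, and its size in bytes. The sketch below shows one way to parse such a pointer and check a downloaded file against it. The function names are hypothetical and only the Python standard library is used; it is not the Hub's own verification code.

```python
import hashlib
from pathlib import Path


def parse_lfs_pointer(text: str) -> dict:
    """Parse the 'key value' lines of a Git LFS pointer file."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path: Path, pointer: dict, chunk_size: int = 1 << 20) -> bool:
    """Return True if the file has the size and SHA-256 named by the pointer."""
    if pointer["algo"] != "sha256" or path.stat().st_size != pointer["size"]:
        return False
    hasher = hashlib.sha256()
    with path.open("rb") as handle:
        # Hash in chunks so multi-gigabyte checkpoints are not read into memory at once.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest() == pointer["digest"]


# Pointer text copied from checkpoint-10000/optimizer.bin in this commit.
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:2116868b67fcab17eca980bebf68241d600a187106efb49f4f12f75bdd5e688e
size 6927867604
"""

pointer = parse_lfs_pointer(POINTER_TEXT)
print(pointer["algo"], pointer["size"])
```

After downloading the real file, `matches_pointer(Path("optimizer.bin"), pointer)` would report whether the local copy agrees with the pointer; the local path here is only an example.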

checkpoint-10000/random_states_0.pkl

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:4b16711e345d9d0ee3874b126f817ecf4d306641416704bfab132984e7985a08
size 14344

checkpoint-10000/scaler.pt

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:e5c0703094db54145537900cebf6d6cd55d682c3b3377f5c8bad143df538720f
size 988

checkpoint-10000/scheduler.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:051a8b8c4604f484b15934e0fed3e97cb08d998c507a51e61b934ed96e21badb
size 1000

checkpoint-10000/unet/config.json

ADDED

@@ -0,0 +1,73 @@

{
  "_class_name": "UNet2DConditionModel",
  "_diffusers_version": "0.33.0.dev0",
  "_name_or_path": "/content/drive/MyDrive/LU CC/Assignment 3: Research Project/Models/cartoon_generation_sd_v1/checkpoint-1000",
  "act_fn": "silu",
  "addition_embed_type": null,
  "addition_embed_type_num_heads": 64,
  "addition_time_embed_dim": null,
  "attention_head_dim": [
    5,
    10,
    20,
    20
  ],
  "attention_type": "default",
  "block_out_channels": [
    320,
    640,
    1280,
    1280
  ],
  "center_input_sample": false,
  "class_embed_type": null,
  "class_embeddings_concat": false,
  "conv_in_kernel": 3,
  "conv_out_kernel": 3,
  "cross_attention_dim": 1024,
  "cross_attention_norm": null,
  "down_block_types": [
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "DownBlock2D"
  ],
  "downsample_padding": 1,
  "dropout": 0.0,
  "dual_cross_attention": false,
  "encoder_hid_dim": null,
  "encoder_hid_dim_type": null,
  "flip_sin_to_cos": true,
  "freq_shift": 0,
  "in_channels": 4,
  "layers_per_block": 2,
  "mid_block_only_cross_attention": null,
  "mid_block_scale_factor": 1,
  "mid_block_type": "UNetMidBlock2DCrossAttn",
  "norm_eps": 1e-05,
  "norm_num_groups": 32,
  "num_attention_heads": null,
  "num_class_embeds": null,
  "only_cross_attention": false,
  "out_channels": 4,
  "projection_class_embeddings_input_dim": null,
  "resnet_out_scale_factor": 1.0,
  "resnet_skip_time_act": false,
  "resnet_time_scale_shift": "default",
  "reverse_transformer_layers_per_block": null,
  "sample_size": 96,
  "time_cond_proj_dim": null,
  "time_embedding_act_fn": null,
  "time_embedding_dim": null,
  "time_embedding_type": "positional",
  "timestep_post_act": null,
  "transformer_layers_per_block": 1,
  "up_block_types": [
    "UpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D"
  ],
  "upcast_attention": true,
  "use_linear_projection": true
}
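The `sample_size` of 96 in this UNet config is a latent-space size, not a pixel size. The sketch below relates it to image resolution, assuming the VAE downsamples by a factor of 8 per side as in Stable Diffusion 2.x; `vae/config.json` is not shown in this part of the commit, so that factor is an assumption, and the helper names are hypothetical.

```python
# Values copied from checkpoint-10000/unet/config.json.
UNET_SAMPLE_SIZE = 96  # latent height/width the UNet is configured for
UNET_IN_CHANNELS = 4   # latent channels

# Assumption: 8x spatial downsampling in the VAE (typical for Stable Diffusion 2.x);
# this value is not taken from the commit itself.
ASSUMED_VAE_SCALE_FACTOR = 8

# From the README's training info.
TRAINING_RESOLUTION = 512


def default_pixel_size(sample_size: int, vae_scale_factor: int) -> int:
    """Pixel side length implied by the UNet's configured latent size."""
    return sample_size * vae_scale_factor


def latent_shape(height: int, width: int, channels: int, vae_scale_factor: int) -> tuple:
    """Latent tensor shape (channels, height, width) for a given image size."""
    return (channels, height // vae_scale_factor, width // vae_scale_factor)


print(default_pixel_size(UNET_SAMPLE_SIZE, ASSUMED_VAE_SCALE_FACTOR))
print(latent_shape(TRAINING_RESOLUTION, TRAINING_RESOLUTION,
                   UNET_IN_CHANNELS, ASSUMED_VAE_SCALE_FACTOR))
```

Under this assumption the configured latent size corresponds to 768-pixel images, while the README reports finetuning at 512. If the pipeline derives its default output size from `sample_size`, passing `height=512, width=512` when calling it may be closer to the training setup; that is a suggestion to test, not something the commit confirms.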

checkpoint-10000/unet/diffusion_pytorch_model.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:356cd9b882235091d3f29c1783f749abb0f6ebcb37766e40c67ffb9f13c9e861
size 3463726504
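The LFS sizes recorded for the UNet weights and for `optimizer.bin` allow a rough sanity check of what each file holds. This is a back-of-the-envelope sketch, assuming the UNet weights are stored as 32-bit floats; the commit does not state the storage dtype or name the optimizer, so the interpretation in the comments is a guess that happens to fit the numbers.

```python
# Sizes in bytes, copied from the LFS pointers in checkpoint-10000.
UNET_WEIGHTS_BYTES = 3_463_726_504  # unet/diffusion_pytorch_model.safetensors
OPTIMIZER_BYTES = 6_927_867_604     # optimizer.bin

# Assumption: weights are saved in fp32 (4 bytes per parameter).
ASSUMED_BYTES_PER_PARAM = 4

# Slight overestimate, because the file also contains a small metadata header.
approx_params = UNET_WEIGHTS_BYTES / ASSUMED_BYTES_PER_PARAM

# A ratio close to 2 is consistent with an optimizer that keeps two fp32
# buffers per parameter (as Adam-style optimizers do), but does not prove it.
optimizer_to_weights = OPTIMIZER_BYTES / UNET_WEIGHTS_BYTES

print(f"approx. parameters: {approx_params / 1e6:.0f}M")
print(f"optimizer / weights size ratio: {optimizer_to_weights:.4f}")
```

The same arithmetic applies to the later checkpoints, whose pointers record identical sizes for these two files.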

checkpoint-10000/unet_ema/config.json

ADDED

@@ -0,0 +1,80 @@

{
  "_class_name": "UNet2DConditionModel",
  "_diffusers_version": "0.33.0.dev0",
  "_name_or_path": "stabilityai/stable-diffusion-2-1",
  "act_fn": "silu",
  "addition_embed_type": null,
  "addition_embed_type_num_heads": 64,
  "addition_time_embed_dim": null,
  "attention_head_dim": [
    5,
    10,
    20,
    20
  ],
  "attention_type": "default",
  "block_out_channels": [
    320,
    640,
    1280,
    1280
  ],
  "center_input_sample": false,
  "class_embed_type": null,
  "class_embeddings_concat": false,
  "conv_in_kernel": 3,
  "conv_out_kernel": 3,
  "cross_attention_dim": 1024,
  "cross_attention_norm": null,
  "decay": 0.9999,
  "down_block_types": [
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "DownBlock2D"
  ],
  "downsample_padding": 1,
  "dropout": 0.0,
  "dual_cross_attention": false,
  "encoder_hid_dim": null,
  "encoder_hid_dim_type": null,
  "flip_sin_to_cos": true,
  "freq_shift": 0,
  "in_channels": 4,
  "inv_gamma": 1.0,
  "layers_per_block": 2,
  "mid_block_only_cross_attention": null,
  "mid_block_scale_factor": 1,
  "mid_block_type": "UNetMidBlock2DCrossAttn",
  "min_decay": 0.0,
  "norm_eps": 1e-05,
  "norm_num_groups": 32,
  "num_attention_heads": null,
  "num_class_embeds": null,
  "only_cross_attention": false,
  "optimization_step": 10000,
  "out_channels": 4,
  "power": 0.6666666666666666,
  "projection_class_embeddings_input_dim": null,
  "resnet_out_scale_factor": 1.0,
  "resnet_skip_time_act": false,
  "resnet_time_scale_shift": "default",
  "reverse_transformer_layers_per_block": null,
  "sample_size": 96,
  "time_cond_proj_dim": null,
  "time_embedding_act_fn": null,
  "time_embedding_dim": null,
  "time_embedding_type": "positional",
  "timestep_post_act": null,
  "transformer_layers_per_block": 1,
  "up_block_types": [
    "UpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D"
  ],
  "upcast_attention": true,
  "update_after_step": 0,
  "use_ema_warmup": false,
  "use_linear_projection": true
}
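Compared with the plain UNet config, the `unet_ema` config carries extra keys (`decay`, `inv_gamma`, `min_decay`, `optimization_step`, `power`, `update_after_step`, `use_ema_warmup`) that describe the exponential moving average of the weights. The sketch below shows how such keys could translate into a per-step decay value. It follows my understanding of the schedule used by the `EMAModel` helper in diffusers, which the commit does not confirm, so treat the formula as an assumption and check it against the installed library version.

```python
def ema_decay(optimization_step, *, decay, min_decay, update_after_step,
              use_ema_warmup, inv_gamma, power):
    """Decay factor applied to the EMA weights at a given optimizer step.

    Assumed schedule: a warmup curve that rises towards 1, capped above by
    `decay` and below by `min_decay`.
    """
    step = max(0, optimization_step - update_after_step - 1)
    if step <= 0:
        return 0.0
    if use_ema_warmup:
        value = 1 - (1 + step / inv_gamma) ** -power
    else:
        value = (1 + step) / (10 + step)
    return max(min(value, decay), min_decay)


# EMA-specific keys copied from checkpoint-10000/unet_ema/config.json.
EMA_CONFIG = {
    "decay": 0.9999,
    "inv_gamma": 1.0,
    "min_decay": 0.0,
    "power": 0.6666666666666666,
    "update_after_step": 0,
    "use_ema_warmup": False,
}

# Step numbers of the checkpoints in this commit.
decays = {step: ema_decay(step, **EMA_CONFIG) for step in (10_000, 20_000, 30_000, 40_000)}
for step, value in decays.items():
    print(f"step {step}: decay = {value:.6f}")
```

If the assumed schedule is right, the decay at all four checkpoints is still below the configured cap of 0.9999, so the cap would only take effect later in a longer run.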

checkpoint-10000/unet_ema/diffusion_pytorch_model.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:9274abfbc19195879d36631146a5c746ea308422a168c3a97f577e77c7133e09
size 3463726504

checkpoint-20000/optimizer.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:cbc7102195f5983de6fcff3008ea7d44845be4c6aef48a5bd58feacde00b08d8
size 6927867604

checkpoint-20000/random_states_0.pkl

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:a61926c16e12c7329b51ca12d8e2e70c518672eee13ce4058e2174a61de2a220
size 14344

checkpoint-20000/scaler.pt

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:ad6767527657fcab8603ba5a5e2b1504c485ae087fc3f89ebd8924bfa8535802
size 988

checkpoint-20000/scheduler.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:355ab8372f846f2f943a669eeaee67fba5cc9aa0f014a786dc75d35937b366e0
size 1000

checkpoint-20000/unet/config.json

ADDED

@@ -0,0 +1,73 @@

{
  "_class_name": "UNet2DConditionModel",
  "_diffusers_version": "0.33.0.dev0",
  "_name_or_path": "/content/drive/MyDrive/LU CC/Assignment 3: Research Project/Models/cartoon_generation_sd_v1/checkpoint-1000",
  "act_fn": "silu",
  "addition_embed_type": null,
  "addition_embed_type_num_heads": 64,
  "addition_time_embed_dim": null,
  "attention_head_dim": [
    5,
    10,
    20,
    20
  ],
  "attention_type": "default",
  "block_out_channels": [
    320,
    640,
    1280,
    1280
  ],
  "center_input_sample": false,
  "class_embed_type": null,
  "class_embeddings_concat": false,
  "conv_in_kernel": 3,
  "conv_out_kernel": 3,
  "cross_attention_dim": 1024,
  "cross_attention_norm": null,
  "down_block_types": [
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "DownBlock2D"
  ],
  "downsample_padding": 1,
  "dropout": 0.0,
  "dual_cross_attention": false,
  "encoder_hid_dim": null,
  "encoder_hid_dim_type": null,
  "flip_sin_to_cos": true,
  "freq_shift": 0,
  "in_channels": 4,
  "layers_per_block": 2,
  "mid_block_only_cross_attention": null,
  "mid_block_scale_factor": 1,
  "mid_block_type": "UNetMidBlock2DCrossAttn",
  "norm_eps": 1e-05,
  "norm_num_groups": 32,
  "num_attention_heads": null,
  "num_class_embeds": null,
  "only_cross_attention": false,
  "out_channels": 4,
  "projection_class_embeddings_input_dim": null,
  "resnet_out_scale_factor": 1.0,
  "resnet_skip_time_act": false,
  "resnet_time_scale_shift": "default",
  "reverse_transformer_layers_per_block": null,
  "sample_size": 96,
  "time_cond_proj_dim": null,
  "time_embedding_act_fn": null,
  "time_embedding_dim": null,
  "time_embedding_type": "positional",
  "timestep_post_act": null,
  "transformer_layers_per_block": 1,
  "up_block_types": [
    "UpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D"
  ],
  "upcast_attention": true,
  "use_linear_projection": true
}

checkpoint-20000/unet/diffusion_pytorch_model.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:484372175fb5db1ec19f4fcd87b73c22790a02a6589769addba5f215912632bc
size 3463726504

checkpoint-20000/unet_ema/config.json

ADDED

@@ -0,0 +1,80 @@

{
  "_class_name": "UNet2DConditionModel",
  "_diffusers_version": "0.33.0.dev0",
  "_name_or_path": "stabilityai/stable-diffusion-2-1",
  "act_fn": "silu",
  "addition_embed_type": null,
  "addition_embed_type_num_heads": 64,
  "addition_time_embed_dim": null,
  "attention_head_dim": [
    5,
    10,
    20,
    20
  ],
  "attention_type": "default",
  "block_out_channels": [
    320,
    640,
    1280,
    1280
  ],
  "center_input_sample": false,
  "class_embed_type": null,
  "class_embeddings_concat": false,
  "conv_in_kernel": 3,
  "conv_out_kernel": 3,
  "cross_attention_dim": 1024,
  "cross_attention_norm": null,
  "decay": 0.9999,
  "down_block_types": [
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "DownBlock2D"
  ],
  "downsample_padding": 1,
  "dropout": 0.0,
  "dual_cross_attention": false,
  "encoder_hid_dim": null,
  "encoder_hid_dim_type": null,
  "flip_sin_to_cos": true,
  "freq_shift": 0,
  "in_channels": 4,
  "inv_gamma": 1.0,
  "layers_per_block": 2,
  "mid_block_only_cross_attention": null,
  "mid_block_scale_factor": 1,
  "mid_block_type": "UNetMidBlock2DCrossAttn",
  "min_decay": 0.0,
  "norm_eps": 1e-05,
  "norm_num_groups": 32,
  "num_attention_heads": null,
  "num_class_embeds": null,
  "only_cross_attention": false,
  "optimization_step": 20000,
  "out_channels": 4,
  "power": 0.6666666666666666,
  "projection_class_embeddings_input_dim": null,
  "resnet_out_scale_factor": 1.0,
  "resnet_skip_time_act": false,
  "resnet_time_scale_shift": "default",
  "reverse_transformer_layers_per_block": null,
  "sample_size": 96,
  "time_cond_proj_dim": null,
  "time_embedding_act_fn": null,
  "time_embedding_dim": null,
  "time_embedding_type": "positional",
  "timestep_post_act": null,
  "transformer_layers_per_block": 1,
  "up_block_types": [
    "UpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D"
  ],
  "upcast_attention": true,
  "update_after_step": 0,
  "use_ema_warmup": false,
  "use_linear_projection": true
}

checkpoint-20000/unet_ema/diffusion_pytorch_model.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:00f80eb344509726718de93c000349226127270f66ac5e460cb367cee2bb2d12
size 3463726504

checkpoint-30000/optimizer.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:f79a90fd22defd533473a3a0dd7a7c9c93f80a1af820e5caf35c8b5b1b7fdc98
size 6927867604

checkpoint-30000/random_states_0.pkl

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:c0774871296d3c55f358d8b875559988d7d85b9b0c0a724f291602e4a8306c76
size 14344

checkpoint-30000/scaler.pt

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:5487dc671fccfe7fec9430fdeb122d8dd7dc991ad5300d298b751a0643ab0c7e
size 988

checkpoint-30000/scheduler.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:b95631dbf61a71fd7eddaf4c2e9f1535a536126b294dbdc8e09af91ae24bea38
size 1000

checkpoint-30000/unet/config.json

ADDED

@@ -0,0 +1,73 @@

{
  "_class_name": "UNet2DConditionModel",
  "_diffusers_version": "0.33.0.dev0",
  "_name_or_path": "/content/drive/MyDrive/LU CC/Assignment 3: Research Project/Models/cartoon_generation_sd_v1/checkpoint-20000",
  "act_fn": "silu",
  "addition_embed_type": null,
  "addition_embed_type_num_heads": 64,
  "addition_time_embed_dim": null,
  "attention_head_dim": [
    5,
    10,
    20,
    20
  ],
  "attention_type": "default",
  "block_out_channels": [
    320,
    640,
    1280,
    1280
  ],
  "center_input_sample": false,
  "class_embed_type": null,
  "class_embeddings_concat": false,
  "conv_in_kernel": 3,
  "conv_out_kernel": 3,
  "cross_attention_dim": 1024,
  "cross_attention_norm": null,
  "down_block_types": [
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "DownBlock2D"
  ],
  "downsample_padding": 1,
  "dropout": 0.0,
  "dual_cross_attention": false,
  "encoder_hid_dim": null,
  "encoder_hid_dim_type": null,
  "flip_sin_to_cos": true,
  "freq_shift": 0,
  "in_channels": 4,
  "layers_per_block": 2,
  "mid_block_only_cross_attention": null,
  "mid_block_scale_factor": 1,
  "mid_block_type": "UNetMidBlock2DCrossAttn",
  "norm_eps": 1e-05,
  "norm_num_groups": 32,
  "num_attention_heads": null,
  "num_class_embeds": null,
  "only_cross_attention": false,
  "out_channels": 4,
  "projection_class_embeddings_input_dim": null,
  "resnet_out_scale_factor": 1.0,
  "resnet_skip_time_act": false,
  "resnet_time_scale_shift": "default",
  "reverse_transformer_layers_per_block": null,
  "sample_size": 96,
  "time_cond_proj_dim": null,
  "time_embedding_act_fn": null,
  "time_embedding_dim": null,
  "time_embedding_type": "positional",
  "timestep_post_act": null,
  "transformer_layers_per_block": 1,
  "up_block_types": [
    "UpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D"
  ],
  "upcast_attention": true,
  "use_linear_projection": true
}

checkpoint-30000/unet/diffusion_pytorch_model.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:10c6b341293f3f2c7c2f17fb44dce613e287f001e66e2eb559a71702a38c6268
size 3463726504

checkpoint-30000/unet_ema/config.json

ADDED

@@ -0,0 +1,80 @@

{
  "_class_name": "UNet2DConditionModel",
  "_diffusers_version": "0.33.0.dev0",
  "_name_or_path": "stabilityai/stable-diffusion-2-1",
  "act_fn": "silu",
  "addition_embed_type": null,
  "addition_embed_type_num_heads": 64,
  "addition_time_embed_dim": null,
  "attention_head_dim": [
    5,
    10,
    20,
    20
  ],
  "attention_type": "default",
  "block_out_channels": [
    320,
    640,
    1280,
    1280
  ],
  "center_input_sample": false,
  "class_embed_type": null,
  "class_embeddings_concat": false,
  "conv_in_kernel": 3,
  "conv_out_kernel": 3,
  "cross_attention_dim": 1024,
  "cross_attention_norm": null,
  "decay": 0.9999,
  "down_block_types": [
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "CrossAttnDownBlock2D",
    "DownBlock2D"
  ],
  "downsample_padding": 1,
  "dropout": 0.0,
  "dual_cross_attention": false,
  "encoder_hid_dim": null,
  "encoder_hid_dim_type": null,
  "flip_sin_to_cos": true,
  "freq_shift": 0,
  "in_channels": 4,
  "inv_gamma": 1.0,
  "layers_per_block": 2,
  "mid_block_only_cross_attention": null,
  "mid_block_scale_factor": 1,
  "mid_block_type": "UNetMidBlock2DCrossAttn",
  "min_decay": 0.0,
  "norm_eps": 1e-05,
  "norm_num_groups": 32,
  "num_attention_heads": null,
  "num_class_embeds": null,
  "only_cross_attention": false,
  "optimization_step": 30000,
  "out_channels": 4,
  "power": 0.6666666666666666,
  "projection_class_embeddings_input_dim": null,
  "resnet_out_scale_factor": 1.0,
  "resnet_skip_time_act": false,
  "resnet_time_scale_shift": "default",
  "reverse_transformer_layers_per_block": null,
  "sample_size": 96,
  "time_cond_proj_dim": null,
  "time_embedding_act_fn": null,
  "time_embedding_dim": null,
  "time_embedding_type": "positional",
  "timestep_post_act": null,
  "transformer_layers_per_block": 1,
  "up_block_types": [
    "UpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D",
    "CrossAttnUpBlock2D"
  ],
  "upcast_attention": true,
  "update_after_step": 0,
  "use_ema_warmup": false,
  "use_linear_projection": true
}

checkpoint-30000/unet_ema/diffusion_pytorch_model.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:b9085b3c7470c397e6080dedcb778344874f86cbcd70f5652c1a7ade357a6559
+size 3463726504
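The three added lines are a Git LFS pointer, not the weights themselves: the spec version, the SHA-256 digest of the real object, and its size in bytes. A minimal sketch of reading such a pointer, assuming the simple `key value` layout shown above (`parse_lfs_pointer` is a hypothetical helper name, not part of any library):

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse a Git LFS pointer file into its fields (illustrative helper)."""
    fields = {}
    for line in text.strip().splitlines():
        # Each pointer line is "<key> <value>", split on the first space.
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid is written as "<hash algorithm>:<hex digest>".
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:b9085b3c7470c397e6080dedcb778344874f86cbcd70f5652c1a7ade357a6559
size 3463726504
"""

info = parse_lfs_pointer(POINTER)
print(info["hash_algo"], info["size_bytes"] / 1e9)  # algorithm and size in GB
```

After downloading the real file, its `hashlib.sha256` digest can be compared against `digest` to check integrity.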

checkpoint-40000/optimizer.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:c9618e9f074a234e2c2232a1010e6cdf468e3f05dbbeaff8d4b9dc74d10921a0
+size 6927867604

checkpoint-40000/random_states_0.pkl

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:4f886a1011fdad67695b2bc8ad732a07b08b1a81067155cf2a966332beb497af
+size 14344

checkpoint-40000/scaler.pt

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:d117eb9026340934865d1fbe4757cc0f0a5b66cbc789de4a1328a045762ab158
+size 988

checkpoint-40000/scheduler.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:980d020c053a7c640c1aed58ab45ca431d5d36b337e81858aeb5ba2bc6a4a52a
+size 1000

checkpoint-40000/unet/config.json

ADDED

@@ -0,0 +1,73 @@
+{
+  "_class_name": "UNet2DConditionModel",
+  "_diffusers_version": "0.33.0.dev0",
+  "_name_or_path": "/content/drive/MyDrive/LU CC/Assignment 3: Research Project/Models/cartoon_generation_sd_v1/checkpoint-20000",
+  "act_fn": "silu",
+  "addition_embed_type": null,
+  "addition_embed_type_num_heads": 64,
+  "addition_time_embed_dim": null,
+  "attention_head_dim": [
+    5,
+    10,
+    20,
+    20
+  ],
+  "attention_type": "default",
+  "block_out_channels": [
+    320,
+    640,
+    1280,
+    1280
+  ],
+  "center_input_sample": false,
+  "class_embed_type": null,
+  "class_embeddings_concat": false,
+  "conv_in_kernel": 3,
+  "conv_out_kernel": 3,
+  "cross_attention_dim": 1024,
+  "cross_attention_norm": null,
+  "down_block_types": [
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "DownBlock2D"
+  ],
+  "downsample_padding": 1,
+  "dropout": 0.0,
+  "dual_cross_attention": false,
+  "encoder_hid_dim": null,
+  "encoder_hid_dim_type": null,
+  "flip_sin_to_cos": true,
+  "freq_shift": 0,
+  "in_channels": 4,
+  "layers_per_block": 2,
+  "mid_block_only_cross_attention": null,
+  "mid_block_scale_factor": 1,
+  "mid_block_type": "UNetMidBlock2DCrossAttn",
+  "norm_eps": 1e-05,
+  "norm_num_groups": 32,
+  "num_attention_heads": null,
+  "num_class_embeds": null,
+  "only_cross_attention": false,
+  "out_channels": 4,
+  "projection_class_embeddings_input_dim": null,
+  "resnet_out_scale_factor": 1.0,
+  "resnet_skip_time_act": false,
+  "resnet_time_scale_shift": "default",
+  "reverse_transformer_layers_per_block": null,
+  "sample_size": 96,
+  "time_cond_proj_dim": null,
+  "time_embedding_act_fn": null,
+  "time_embedding_dim": null,
+  "time_embedding_type": "positional",
+  "timestep_post_act": null,
+  "transformer_layers_per_block": 1,
+  "up_block_types": [
+    "UpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D"
+  ],
+  "upcast_attention": true,
+  "use_linear_projection": true
+}
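A few values in this config are easier to read once combined. The sketch below rests on two assumptions that this file does not state: a VAE downsampling factor of 8, and that `attention_head_dim` acts as a per-block head count for this model family (`num_attention_heads` is null here).

```python
# Values copied from checkpoint-40000/unet/config.json
config = {
    "sample_size": 96,
    "attention_head_dim": [5, 10, 20, 20],
    "block_out_channels": [320, 640, 1280, 1280],
}

VAE_SCALE_FACTOR = 8  # assumption: typical for Stable Diffusion VAEs, not in this file

# Latent side length times the VAE factor gives the default image side length.
image_size = config["sample_size"] * VAE_SCALE_FACTOR

# Assumption: attention_head_dim is treated as a head count, so the per-head
# width is channels // heads at each resolution level.
head_widths = [
    channels // heads
    for channels, heads in zip(config["block_out_channels"], config["attention_head_dim"])
]

print(image_size)
print(head_widths)
```

Under these assumptions the default output is 768 pixels per side, and every attention head has the same width at each level.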

checkpoint-40000/unet/diffusion_pytorch_model.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:241e0c77bac4b93e0235eb8400844dc8fbf999de5071c2b77a56135489d8c157
+size 3463726504

checkpoint-40000/unet_ema/config.json

ADDED

@@ -0,0 +1,80 @@
+{
+  "_class_name": "UNet2DConditionModel",
+  "_diffusers_version": "0.33.0.dev0",
+  "_name_or_path": "stabilityai/stable-diffusion-2-1",
+  "act_fn": "silu",
+  "addition_embed_type": null,
+  "addition_embed_type_num_heads": 64,
+  "addition_time_embed_dim": null,
+  "attention_head_dim": [
+    5,
+    10,
+    20,
+    20
+  ],
+  "attention_type": "default",
+  "block_out_channels": [
+    320,
+    640,
+    1280,
+    1280
+  ],
+  "center_input_sample": false,
+  "class_embed_type": null,
+  "class_embeddings_concat": false,
+  "conv_in_kernel": 3,
+  "conv_out_kernel": 3,
+  "cross_attention_dim": 1024,
+  "cross_attention_norm": null,
+  "decay": 0.9999,
+  "down_block_types": [
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "CrossAttnDownBlock2D",
+    "DownBlock2D"
+  ],
+  "downsample_padding": 1,
+  "dropout": 0.0,
+  "dual_cross_attention": false,
+  "encoder_hid_dim": null,
+  "encoder_hid_dim_type": null,
+  "flip_sin_to_cos": true,
+  "freq_shift": 0,
+  "in_channels": 4,
+  "inv_gamma": 1.0,
+  "layers_per_block": 2,
+  "mid_block_only_cross_attention": null,
+  "mid_block_scale_factor": 1,
+  "mid_block_type": "UNetMidBlock2DCrossAttn",
+  "min_decay": 0.0,
+  "norm_eps": 1e-05,
+  "norm_num_groups": 32,
+  "num_attention_heads": null,
+  "num_class_embeds": null,
+  "only_cross_attention": false,
+  "optimization_step": 40000,
+  "out_channels": 4,
+  "power": 0.6666666666666666,
+  "projection_class_embeddings_input_dim": null,
+  "resnet_out_scale_factor": 1.0,
+  "resnet_skip_time_act": false,
+  "resnet_time_scale_shift": "default",
+  "reverse_transformer_layers_per_block": null,
+  "sample_size": 96,
+  "time_cond_proj_dim": null,
+  "time_embedding_act_fn": null,
+  "time_embedding_dim": null,
+  "time_embedding_type": "positional",
+  "timestep_post_act": null,
+  "transformer_layers_per_block": 1,
+  "up_block_types": [
+    "UpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D",
+    "CrossAttnUpBlock2D"
+  ],
+  "upcast_attention": true,
+  "update_after_step": 0,
+  "use_ema_warmup": false,
+  "use_linear_projection": true
+}
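Compared with `unet/config.json`, the EMA config carries seven extra keys (`decay`, `inv_gamma`, `min_decay`, `optimization_step`, `power`, `update_after_step`, `use_ema_warmup`), which accounts for 80 lines versus 73. The sketch below shows how such fields could determine the effective decay at a given step. The schedule follows what diffusers' `EMAModel` appears to do and is an assumption here, not taken from this commit; `ema_decay` and `ema_update` are hypothetical helper names.

```python
def ema_decay(optimization_step, decay=0.9999, min_decay=0.0, update_after_step=0,
              use_ema_warmup=False, inv_gamma=1.0, power=2 / 3):
    """Effective EMA decay at a step (assumed schedule, see the note above)."""
    step = max(0, optimization_step - update_after_step - 1)
    if step <= 0:
        return 0.0
    if use_ema_warmup:
        # Warmup branch: decay rises with step, shaped by inv_gamma and power.
        value = 1 - (1 + step / inv_gamma) ** -power
    else:
        # Default branch: a simple ramp that approaches 1 as step grows.
        value = (1 + step) / (10 + step)
    # Clamp into [min_decay, decay].
    return max(min(value, decay), min_decay)


def ema_update(shadow, param, d):
    """One EMA step: the shadow weight moves a fraction (1 - d) toward param."""
    return d * shadow + (1 - d) * param


d = ema_decay(40000)  # defaults match checkpoint-40000/unet_ema/config.json
print(d)
print(ema_update(shadow=1.0, param=0.0, d=d))
```

With `use_ema_warmup` set to false, the `inv_gamma` and `power` fields would not affect the result under this assumed schedule.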

checkpoint-40000/unet_ema/diffusion_pytorch_model.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:e8efdb182100a8b14294d320599246e90901524cb8d32f245e05c1ef4c6ff0ca
+size 3463726504

feature_extractor/preprocessor_config.json

ADDED

@@ -0,0 +1,27 @@
+{
+  "crop_size": {
+    "height": 224,
+    "width": 224
+  },
+  "do_center_crop": true,
+  "do_convert_rgb": true,
+  "do_normalize": true,
+  "do_rescale": true,
+  "do_resize": true,
+  "image_mean": [
+    0.48145466,
+    0.4578275,
+    0.40821073
+  ],
+  "image_processor_type": "CLIPImageProcessor",
+  "image_std": [
+    0.26862954,
+    0.26130258,
+    0.27577711
+  ],
+  "resample": 3,
+  "rescale_factor": 0.00392156862745098,
+  "size": {
+    "shortest_edge": 224
+  }
+}
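The config describes a resize to a 224-pixel shortest edge, a 224x224 center crop, rescaling by 1/255 (`rescale_factor`), and per-channel normalization. A minimal sketch of the last two steps on a single RGB pixel, in plain Python; `normalize_pixel` is a hypothetical helper and this is not the `CLIPImageProcessor` implementation itself:

```python
# Values copied from feature_extractor/preprocessor_config.json
IMAGE_MEAN = [0.48145466, 0.4578275, 0.40821073]
IMAGE_STD = [0.26862954, 0.26130258, 0.27577711]
RESCALE_FACTOR = 0.00392156862745098  # equals 1 / 255


def normalize_pixel(rgb):
    """Rescale 0-255 channel values to [0, 1], then standardize per channel."""
    return [
        (value * RESCALE_FACTOR - mean) / std
        for value, mean, std in zip(rgb, IMAGE_MEAN, IMAGE_STD)
    ]


white = normalize_pixel([255, 255, 255])
black = normalize_pixel([0, 0, 0])
print(white)
print(black)
```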

model_index.json

ADDED

@@ -0,0 +1,38 @@
+{
+  "_class_name": "StableDiffusionPipeline",
+  "_diffusers_version": "0.33.0.dev0",
+  "_name_or_path": "stabilityai/stable-diffusion-2-1",
+  "feature_extractor": [
+    "transformers",
+    "CLIPImageProcessor"
+  ],
+  "image_encoder": [
+    null,
+    null
+  ],
+  "requires_safety_checker": false,
+  "safety_checker": [
+    null,
+    null
+  ],
+  "scheduler": [
+    "diffusers",
+    "DDIMScheduler"
+  ],
+  "text_encoder": [
+    "transformers",
+    "CLIPTextModel"
+  ],
+  "tokenizer": [
+    "transformers",
+    "CLIPTokenizer"
+  ],
+  "unet": [
+    "diffusers",
+    "UNet2DConditionModel"
+  ],
+  "vae": [
+    "diffusers",
+    "AutoencoderKL"
+  ]
+}
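`model_index.json` maps each pipeline component to a `[library, class]` pair; a `[null, null]` pair means the component is not included (here `image_encoder` and `safety_checker`). A minimal sketch that lists the components from an abbreviated copy of the file; `list_components` is a hypothetical helper, not a diffusers API:

```python
import json

# Abbreviated copy of model_index.json (version and path metadata omitted).
MODEL_INDEX = """
{
  "_class_name": "StableDiffusionPipeline",
  "feature_extractor": ["transformers", "CLIPImageProcessor"],
  "image_encoder": [null, null],
  "requires_safety_checker": false,
  "safety_checker": [null, null],
  "scheduler": ["diffusers", "DDIMScheduler"],
  "text_encoder": ["transformers", "CLIPTextModel"],
  "tokenizer": ["transformers", "CLIPTokenizer"],
  "unet": ["diffusers", "UNet2DConditionModel"],
  "vae": ["diffusers", "AutoencoderKL"]
}
"""


def list_components(index):
    """Split [library, class] entries into present and absent components."""
    present, absent = {}, []
    for name, value in index.items():
        if not isinstance(value, list):
            continue  # skip metadata such as _class_name and boolean flags
        library, cls = value
        if library is None:
            absent.append(name)
        else:
            present[name] = f"{library}.{cls}"
    return present, absent


present, absent = list_components(json.loads(MODEL_INDEX))
print(present)
print(absent)
```

With the diffusers library installed, a repository laid out like this is normally loaded through `StableDiffusionPipeline.from_pretrained`, passing the repository id or a local path.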

scheduler/scheduler_config.json

ADDED

@@ -0,0 +1,20 @@