Commit

•

4333723

1

Parent(s):

7ecfa51

Upload README.md with huggingface_hub

README.md

ADDED

@@ -0,0 +1,78 @@

---

base_model: Qwen/Qwen2.5-1.5B
language:
- en
pipeline_tag: text-generation
library_name: transformers
license: apache-2.0
license_link: https://huggingface.co/Qwen/Qwen2.5-Math-1.5B/blob/main/LICENSE

---

[QuantFactory](https://hf.co/QuantFactory)

# QuantFactory/Qwen2.5-Math-1.5B-GGUF
This is a quantized version of [Qwen/Qwen2.5-Math-1.5B](https://huggingface.co/Qwen/Qwen2.5-Math-1.5B), created using llama.cpp.

# Original Model Card

# Qwen2.5-Math-1.5B

> [!Warning]
> <div align="center">
> <b>
> 🚨 Qwen2.5-Math mainly supports solving English and Chinese math problems through CoT and TIR. We do not recommend using this series of models for other tasks.
> </b>
> </div>

| 32 |

+

## Introduction

|

| 33 |

+

|

| 34 |

+

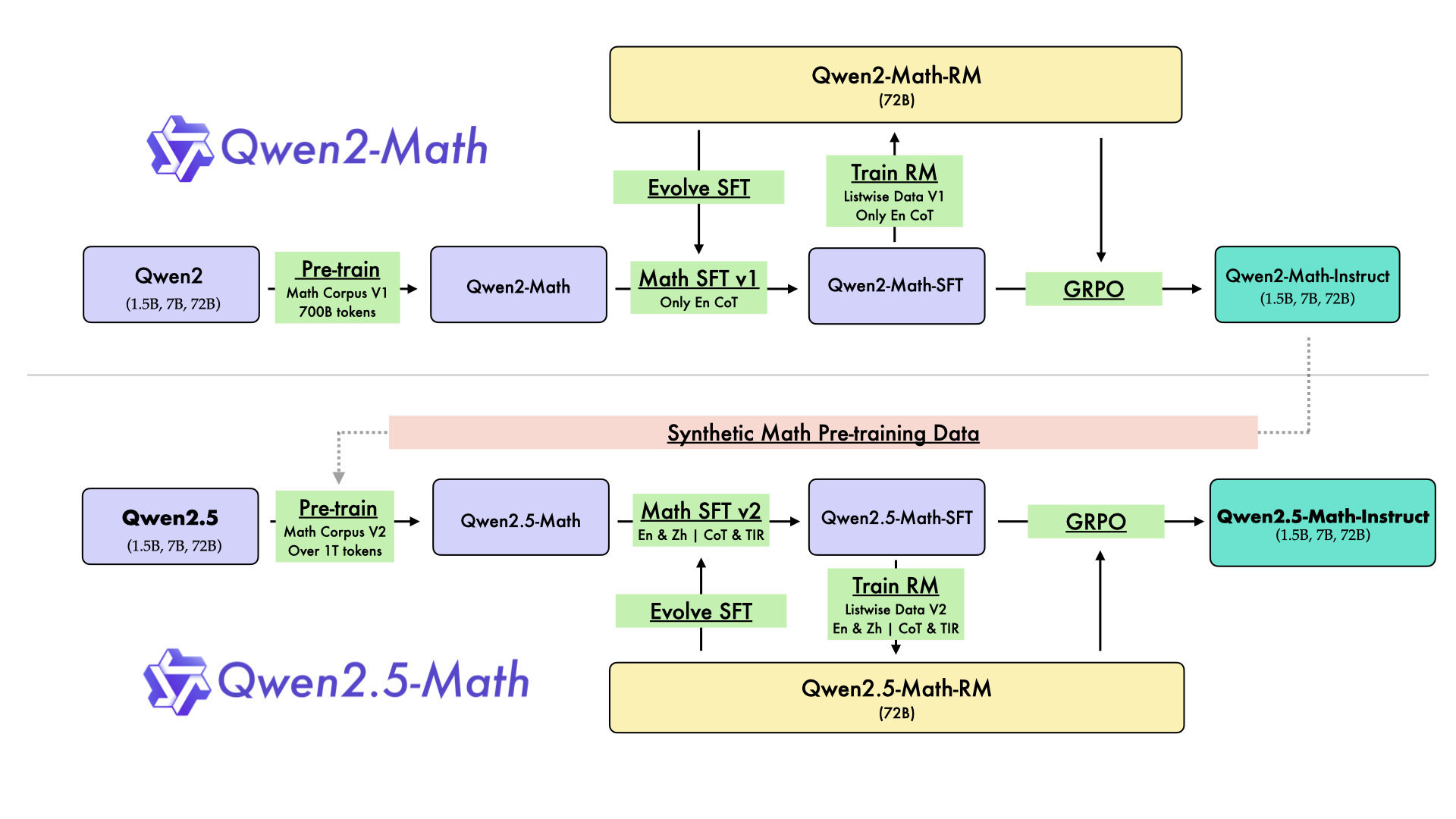

In August 2024, we released the first series of mathematical LLMs - [Qwen2-Math](https://qwenlm.github.io/blog/qwen2-math/) - of our Qwen family. A month later, we have upgraded it and open-sourced **Qwen2.5-Math** series, including base models **Qwen2.5-Math-1.5B/7B/72B**, instruction-tuned models **Qwen2.5-Math-1.5B/7B/72B-Instruct**, and mathematical reward model **Qwen2.5-Math-RM-72B**.

|

| 35 |

+

|

| 36 |

+

Unlike Qwen2-Math series which only supports using Chain-of-Thught (CoT) to solve English math problems, Qwen2.5-Math series is expanded to support using both CoT and Tool-integrated Reasoning (TIR) to solve math problems in both Chinese and English. The Qwen2.5-Math series models have achieved significant performance improvements compared to the Qwen2-Math series models on the Chinese and English mathematics benchmarks with CoT.

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

While CoT plays a vital role in enhancing the reasoning capabilities of LLMs, it faces challenges in achieving computational accuracy and handling complex mathematical or algorithmic reasoning tasks, such as finding the roots of a quadratic equation or computing the eigenvalues of a matrix. TIR can further improve the model's proficiency in precise computation, symbolic manipulation, and algorithmic manipulation. Qwen2.5-Math-1.5B/7B/72B-Instruct achieve 79.7, 85.3, and 87.8 respectively on the MATH benchmark using TIR.

|
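To make the two example tasks above concrete, here is a minimal sketch of the kind of small program that a tool-integrated approach could delegate exact computation to. It is an illustration only: the function names `quadratic_roots` and `eigenvalues_2x2` and the sample inputs are assumptions made for this example, and the source does not specify what code or tools the models actually use.

```python
import cmath


def quadratic_roots(a, b, c):
    """Return both roots of a*x^2 + b*x + c = 0 using the quadratic formula."""
    if a == 0:
        raise ValueError("not a quadratic: a must be non-zero")
    # cmath.sqrt handles negative discriminants by returning a complex value.
    disc = cmath.sqrt(b * b - 4 * a * c)
    return ((-b - disc) / (2 * a), (-b + disc) / (2 * a))


def eigenvalues_2x2(matrix):
    """Return the eigenvalues of a 2x2 matrix [[p, q], [r, s]].

    They are the roots of the characteristic polynomial
    lambda^2 - (p + s) * lambda + (p * s - q * r) = 0.
    """
    (p, q), (r, s) = matrix
    return quadratic_roots(1, -(p + s), p * s - q * r)


# Hypothetical sample inputs, chosen only for illustration.
roots = quadratic_roots(1, -3, 2)          # x^2 - 3x + 2 = (x - 1)(x - 2)
eigs = eigenvalues_2x2([[2, 1], [1, 2]])   # characteristic polynomial: l^2 - 4l + 3
print(roots)
print(eigs)
```

Running the arithmetic in code rather than in generated text is the point of the sketch: the values come from an exact formula instead of token-by-token calculation.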

## Model Details

For more details, please refer to our [blog post](https://qwenlm.github.io/blog/qwen2.5-math/) and [GitHub repo](https://github.com/QwenLM/Qwen2.5-Math).

## Requirements
* `transformers>=4.37.0` for Qwen2.5-Math models. The latest version is recommended.

> [!Warning]
> <div align="center">
> <b>
> 🚨 This is required because <code>transformers</code> has included the Qwen2 code since <code>4.37.0</code>.
> </b>
> </div>

For GPU memory requirements and the corresponding throughput, see the comparable Qwen2 results [here](https://qwen.readthedocs.io/en/latest/benchmark/speed_benchmark.html).
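One way to check the version requirement before loading a model is sketched below. It uses only the standard library; the helper names (`parse_version`, `meets_requirement`, `check_transformers`) are hypothetical, and the parser is a simplification that reads only the leading numeric components, so a pre-release such as `4.37.0rc1` is treated the same as `4.37.0`.

```python
from importlib import metadata

MIN_VERSION = (4, 37, 0)  # minimum stated in the requirements above


def parse_version(text):
    """Turn '4.37.0' or '4.45.0.dev0' into a tuple of its leading integers."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break  # stop at the first non-numeric component, e.g. 'dev0'
        parts.append(int(digits))
    return tuple(parts)


def meets_requirement(installed, minimum=MIN_VERSION):
    """Return True if the installed version string is at least `minimum`."""
    version = parse_version(installed)
    # Pad short versions so that '4.37' compares equal to (4, 37, 0).
    version += (0,) * (len(minimum) - len(version))
    return version >= minimum


def check_transformers():
    """Return True only if transformers is installed and recent enough."""
    try:
        installed = metadata.version("transformers")
    except metadata.PackageNotFoundError:
        return False
    return meets_requirement(installed)


print("transformers is recent enough:", check_transformers())
```

For stricter handling of pre-release and development versions, a dedicated version-parsing library would be a better fit than this hand-rolled parser.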

## Quick Start

> [!Important]
>
> **Qwen2.5-Math-1.5B-Instruct** is an instruction model for chatting;
>
> **Qwen2.5-Math-1.5B** is a base model typically used for completion and few-shot inference, serving as a better starting point for fine-tuning.
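As a rough sketch of what few-shot completion with the base model could look like, the example below builds a prompt from solved examples and passes it to `transformers` for greedy decoding. Several things here are assumptions rather than anything the model card prescribes: the `Question:`/`Answer:` prompt layout, the helper names `build_few_shot_prompt` and `complete`, the sample problems, and the generation settings. The sketch loads the original `Qwen/Qwen2.5-Math-1.5B` checkpoint, not the GGUF files in this repository, which are intended for llama.cpp-compatible runtimes.

```python
def build_few_shot_prompt(examples, question):
    """Format solved examples followed by the new question for plain completion."""
    blocks = [f"Question: {q}\nAnswer: {a}" for q, a in examples]
    # Leave the final answer open so the base model continues from here.
    blocks.append(f"Question: {question}\nAnswer:")
    return "\n\n".join(blocks)


def complete(prompt, model_name="Qwen/Qwen2.5-Math-1.5B", max_new_tokens=128):
    """Greedy completion with transformers; downloads the model on first use."""
    # Imported lazily so the prompt helper works without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForCausalLM.from_pretrained(model_name)
    inputs = tokenizer(prompt, return_tensors="pt")
    output_ids = model.generate(
        **inputs, max_new_tokens=max_new_tokens, do_sample=False
    )
    # Keep only the newly generated tokens, not the echoed prompt.
    new_tokens = output_ids[0][inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)


# Hypothetical few-shot examples, chosen only for illustration.
EXAMPLES = [
    ("What is 12 + 30?", "42"),
    ("What is 7 * 8?", "56"),
]
prompt = build_few_shot_prompt(EXAMPLES, "What is 15 * 4?")
print(prompt)
# print(complete(prompt))  # uncomment to run the model (requires a download)
```

Because a base model simply continues the text, the completion may run past the answer into further invented questions; truncating at the first blank line is a common way to handle that.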

## Citation

If you find our work helpful, feel free to give us a citation.

```
@article{yang2024qwen2,
  title={Qwen2 technical report},
  author={Yang, An and Yang, Baosong and Hui, Binyuan and Zheng, Bo and Yu, Bowen and Zhou, Chang and Li, Chengpeng and Li, Chengyuan and Liu, Dayiheng and Huang, Fei and others},
  journal={arXiv preprint arXiv:2407.10671},
  year={2024}
}
```