Add files using upload-large-folder tool

- README.md +59 -3

- config.json +37 -0

- configuration_rwkv_hybrid.py +252 -0

- figures/architecture.png +0 -0

- generation_config.json +6 -0

- hybrid_cache.py +154 -0

- model-00001-of-00007.safetensors +3 -0

- model-00002-of-00007.safetensors +3 -0

- model-00003-of-00007.safetensors +3 -0

- model-00004-of-00007.safetensors +3 -0

- model-00005-of-00007.safetensors +3 -0

- model-00006-of-00007.safetensors +3 -0

- model-00007-of-00007.safetensors +3 -0

- model.safetensors.index.json +822 -0

- modeling_rwkv_hybrid.py +632 -0

- test_gradio.py +80 -0

- tokenizer.json +0 -0

- tokenizer_config.json +207 -0

- vocab.json +0 -0

- wkv.py +522 -0

README.md

CHANGED

|

@@ -1,3 +1,59 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

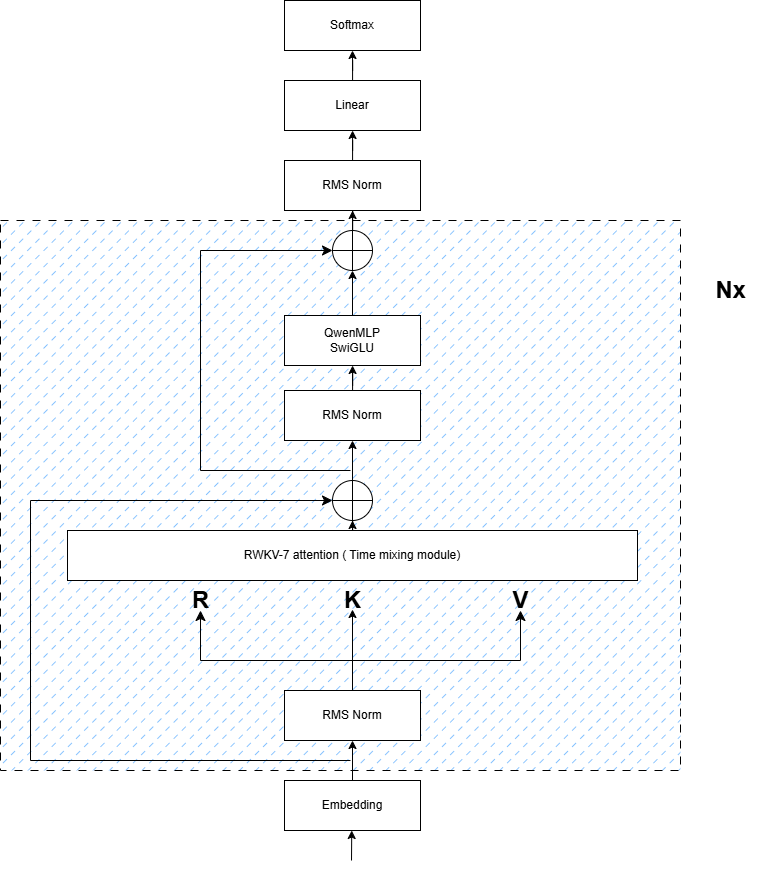

# ARWKV-7B-GATE-MLP (Preview 0.1)

|

| 2 |

+

|

| 3 |

+

<img src="./figures/architecture.png" alt="ARWKV Hybrid Architecture" width="30%">

|

| 4 |

+

|

| 5 |

+

*Preview version with RWKV-7 time mixing and Transformer MLP*

|

| 6 |

+

|

| 7 |

+

## 📌 Overview

|

| 8 |

+

|

| 9 |

+

**ALL YOU NEED IS RWKV**

|

| 10 |

+

|

| 11 |

+

This is an **early preview** of our 7B parameter hybrid RNN-Transformer model, trained on 2k context length through 3-stage knowledge distillation from Qwen2.5-7B-Instruct. While being a foundational version, it demonstrates:

|

| 12 |

+

|

| 13 |

+

- ✅ RWKV-7's efficient recurrence mechanism

|

| 14 |

+

- ✅ No self-attention, fully O(n)

|

| 15 |

+

- ✅ Constant VRAM usage

|

| 16 |

+

- ✅ Single-GPU trainability

|

| 17 |

+

|

| 18 |

+

**Roadmap Notice**: We will soon open-source different enhanced versions with:

|

| 19 |

+

- 🚀 16k+ context capability

|

| 20 |

+

- 🧮 Math-specific improvements

|

| 21 |

+

- 📚 RL enhanced reasoning model

|

| 22 |

+

|

| 23 |

+

## How to use

|

| 24 |

+

```shell

|

| 25 |

+

pip3 install --upgrade rwkv-fla transformers

|

| 26 |

+

```

|

| 27 |

+

|

| 28 |

+

```python

|

| 29 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 33 |

+

"RWKV-Red-Team/ARWKV-7B-Preview-0.1",

|

| 34 |

+

device_map="auto",

|

| 35 |

+

torch_dtype=torch.float16,

|

| 36 |

+

trust_remote_code=True,

|

| 37 |

+

)

|

| 38 |

+

tokenizer = AutoTokenizer.from_pretrained(

|

| 39 |

+

"RWKV-Red-Team/ARWKV-7B-Preview-0.1"

|

| 40 |

+

)

|

| 41 |

+

```

|

| 42 |

+

|

| 43 |

+

## 🔑 Key Features

|

| 44 |

+

| Component | Specification | Note |

|

| 45 |

+

|-----------|---------------|------|

|

| 46 |

+

| Architecture | RWKV-7 TimeMix + SwiGLU | Hybrid design |

|

| 47 |

+

| Context Window | 2048 training CTX | *Preview limitation* |

|

| 48 |

+

| Training Tokens | 40M | Distillation-focused |

|

| 49 |

+

| Precision | FP16 inference recommended | 15%↑ vs BF16 |

|

| 50 |

+

|

| 51 |

+

## 🏗️ Architecture Highlights

|

| 52 |

+

### Core Modification Flow

|

| 53 |

+

```diff

|

| 54 |

+

Qwen2.5 Decoder Layer:

|

| 55 |

+

- Grouped Query Attention

|

| 56 |

+

+ RWKV-7 Time Mixing (Eq.3)

|

| 57 |

+

- RoPE Positional Encoding

|

| 58 |

+

+ State Recurrence

|

| 59 |

+

= Hybrid Layer Output

|
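The README's "fully O(n)" and "constant VRAM usage" claims both follow from replacing attention with a state recurrence. The sketch below is a generic toy linear recurrence, not RWKV-7's actual time-mixing rule (which the README only cites as "Eq.3"). The names `step` and `run`, the fixed `decay`, and the choice `k = v = q = x` are all illustrative assumptions. It only shows that the state stays `d x d` however many tokens are processed.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def step(state: Matrix, k: Vector, v: Vector, q: Vector, decay: float) -> Vector:
    """Advance a toy recurrent state by one token (in place) and read it out.

    state[i][j] <- decay * state[i][j] + k[i] * v[j]
    out[j]      =  sum_i q[i] * state[i][j]
    """
    d = len(k)
    for i in range(d):
        for j in range(d):
            state[i][j] = decay * state[i][j] + k[i] * v[j]
    return [sum(q[i] * state[i][j] for i in range(d)) for j in range(d)]


def run(tokens: List[Vector], decay: float = 0.5) -> Tuple[List[Vector], Matrix]:
    """Process tokens one at a time; the work per token does not depend on history length."""
    d = len(tokens[0])
    state: Matrix = [[0.0] * d for _ in range(d)]
    outputs = []
    for x in tokens:
        # Toy simplification (assumption): key, value and query are all the raw token.
        outputs.append(step(state, x, x, x, decay))
    return outputs, state


outputs, state = run([[1.0, 0.0], [0.0, 1.0]])
print(outputs)  # one output vector per token
print(state)    # still a 2 x 2 matrix
```

An attention layer with a KV cache would instead store one key and one value per past token, so its memory grows with sequence length, while the recurrent state above has a fixed shape.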

config.json

ADDED

@@ -0,0 +1,37 @@
+{
+  "architectures": [
+    "RwkvHybridForCausalLM"
+  ],
+  "auto_map": {
+    "AutoConfig": "configuration_rwkv_hybrid.RwkvHybridConfig",
+    "AutoModelForCausalLM": "modeling_rwkv_hybrid.RwkvHybridForCausalLM"
+  },
+  "attention_dropout": 0.0,
+  "bos_token_id": 151643,
+  "eos_token_id": 151645,
+  "head_size": 64,
+  "head_size_divisor": 8,
+  "hidden_act": "silu",
+  "hidden_size": 3584,
+  "initializer_range": 0.02,
+  "intermediate_size": 18944,
+  "max_position_embeddings": 32768,
+  "max_window_layers": 28,
+  "model_type": "rwkv_hybrid",
+  "num_attention_heads": 28,
+  "num_hidden_layers": 28,
+  "num_key_value_heads": 4,
+  "rms_norm_eps": 1e-06,
+  "rope_scaling": null,
+  "rope_theta": 1000000.0,
+  "sliding_window": null,
+  "tie_word_embeddings": false,
+  "torch_dtype": "float16",
+  "transformers_version": "4.49.0.dev0",
+  "use_cache": true,
+  "use_sliding_window": false,
+  "vocab_size": 152064,
+  "wkv_has_gate": true,
+  "wkv_has_group_norm": false,
+  "wkv_version": 7
+}
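As a rough check on the README's constant-memory claim, the recurrent state size can be estimated from these config values. The sketch assumes the state shapes that `BlockStateList.empty` allocates in this commit's `hybrid_cache.py`: `(N, B, H, C//H, C//H)` for the WKV state and `(N, 2, B, C)` for the shift state. It also assumes 2 bytes per element, and whether the model uses exactly this layout at inference time is not confirmed here. The helper names are hypothetical.

```python
# Values copied from the config.json in this commit.
HIDDEN_SIZE = 3584
HEAD_SIZE = 64
NUM_LAYERS = 28


def rwkv_state_elements(num_layers: int, hidden_size: int,
                        head_size: int, batch_size: int = 1) -> dict:
    """Count state elements; note that sequence length never appears."""
    if hidden_size % head_size != 0:
        raise ValueError("hidden_size must be divisible by head_size")
    num_heads = hidden_size // head_size
    # One (head_size x head_size) matrix per head, per layer, per batch row.
    wkv = num_layers * batch_size * num_heads * head_size * head_size
    # Two shift vectors of width hidden_size per layer and batch row.
    shift = num_layers * 2 * batch_size * hidden_size
    return {"num_heads": num_heads, "wkv": wkv, "shift": shift}


def to_mib(num_elements: int, bytes_per_element: int) -> float:
    """Convert an element count to MiB for a given element width."""
    return num_elements * bytes_per_element / (1024 * 1024)


sizes = rwkv_state_elements(NUM_LAYERS, HIDDEN_SIZE, HEAD_SIZE)
print(sizes["num_heads"])       # 56 heads of size 64
print(to_mib(sizes["wkv"], 2))  # 12.25 MiB per sequence at 2 bytes per element
```

The byte conversion is explicit because `HybridCache.__repr__` in the same commit divides an element count by 1024 twice and labels the result `MB`. That figure is therefore in units of 2^20 elements, not bytes.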

configuration_rwkv_hybrid.py

ADDED

@@ -0,0 +1,252 @@
+# coding=utf-8
+# Copyright 2025 RWKV team. All rights reserved.
+# Copyright 2024 The Qwen team, Alibaba Group and the HuggingFace Inc. team. All rights reserved.
+#
+# Licensed under the Apache License, Version 2.0 (the "License");
+# you may not use this file except in compliance with the License.
+# You may obtain a copy of the License at
+#
+#     http://www.apache.org/licenses/LICENSE-2.0
+#
+# Unless required by applicable law or agreed to in writing, software
+# distributed under the License is distributed on an "AS IS" BASIS,
+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+# See the License for the specific language governing permissions and
+# limitations under the License.
+"""RwkvHybrid model configuration"""
+
+from transformers.configuration_utils import PretrainedConfig
+from transformers.modeling_rope_utils import rope_config_validation
+from transformers.utils import logging
+from typing import Optional, Union, List
+
+
+logger = logging.get_logger(__name__)
+
+
+class RwkvHybridConfig(PretrainedConfig):
+    r"""
+    This is the configuration class to store the configuration of a [`RwkvHybridModel`]. It is used to instantiate a
+    RwkvHybrid model according to the specified arguments, defining the model architecture. Instantiating a configuration
+    with the defaults will yield a similar configuration to that of
+    RwkvHybrid-7B-beta.
+
+    Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the
+    documentation from [`PretrainedConfig`] for more information.
+
+
+    Args:
+        vocab_size (`int`, *optional*, defaults to 151936):
+            Vocabulary size of the RwkvHybrid model. Defines the number of different tokens that can be represented by the
+            `inputs_ids` passed when calling [`RwkvHybridModel`]
+        hidden_size (`int`, *optional*, defaults to 4096):
+            Dimension of the hidden representations.
+        intermediate_size (`int`, *optional*, defaults to 22016):
+            Dimension of the MLP representations.
+        num_hidden_layers (`int`, *optional*, defaults to 32):
+            Number of hidden layers in the Transformer encoder.
+        num_attention_heads (`int`, *optional*, defaults to 32):
+            Number of attention heads for each attention layer in the Transformer encoder.
+        num_key_value_heads (`int`, *optional*, defaults to 32):
+            This is the number of key_value heads that should be used to implement Grouped Query Attention. If
+            `num_key_value_heads=num_attention_heads`, the model will use Multi Head Attention (MHA), if
+            `num_key_value_heads=1` the model will use Multi Query Attention (MQA) otherwise GQA is used. When
+            converting a multi-head checkpoint to a GQA checkpoint, each group key and value head should be constructed
+            by meanpooling all the original heads within that group. For more details checkout [this
+            paper](https://arxiv.org/pdf/2305.13245.pdf). If it is not specified, will default to `32`.
+        hidden_act (`str` or `function`, *optional*, defaults to `"silu"`):
+            The non-linear activation function (function or string) in the decoder.
+        max_position_embeddings (`int`, *optional*, defaults to 32768):
+            The maximum sequence length that this model might ever be used with.
+        initializer_range (`float`, *optional*, defaults to 0.02):
+            The standard deviation of the truncated_normal_initializer for initializing all weight matrices.
+        rms_norm_eps (`float`, *optional*, defaults to 1e-06):
+            The epsilon used by the rms normalization layers.
+        use_cache (`bool`, *optional*, defaults to `True`):
+            Whether or not the model should return the last key/values attentions (not used by all models). Only
+            relevant if `config.is_decoder=True`.
+        tie_word_embeddings (`bool`, *optional*, defaults to `False`):
+            Whether the model's input and output word embeddings should be tied.
+        rope_theta (`float`, *optional*, defaults to 10000.0):
+            The base period of the RoPE embeddings.
+        rope_scaling (`Dict`, *optional*):
+            Dictionary containing the scaling configuration for the RoPE embeddings. NOTE: if you apply new rope type
+            and you expect the model to work on longer `max_position_embeddings`, we recommend you to update this value
+            accordingly.
+            Expected contents:
+                `rope_type` (`str`):
+                    The sub-variant of RoPE to use. Can be one of ['default', 'linear', 'dynamic', 'yarn', 'longrope',
+                    'llama3'], with 'default' being the original RoPE implementation.
+                `factor` (`float`, *optional*):
+                    Used with all rope types except 'default'. The scaling factor to apply to the RoPE embeddings. In
+                    most scaling types, a `factor` of x will enable the model to handle sequences of length x *
+                    original maximum pre-trained length.
+                `original_max_position_embeddings` (`int`, *optional*):
+                    Used with 'dynamic', 'longrope' and 'llama3'. The original max position embeddings used during
+                    pretraining.
+                `attention_factor` (`float`, *optional*):
+                    Used with 'yarn' and 'longrope'. The scaling factor to be applied on the attention
+                    computation. If unspecified, it defaults to value recommended by the implementation, using the
+                    `factor` field to infer the suggested value.
+                `beta_fast` (`float`, *optional*):
+                    Only used with 'yarn'. Parameter to set the boundary for extrapolation (only) in the linear
+                    ramp function. If unspecified, it defaults to 32.
+                `beta_slow` (`float`, *optional*):
+                    Only used with 'yarn'. Parameter to set the boundary for interpolation (only) in the linear
+                    ramp function. If unspecified, it defaults to 1.
+                `short_factor` (`List[float]`, *optional*):
+                    Only used with 'longrope'. The scaling factor to be applied to short contexts (<
+                    `original_max_position_embeddings`). Must be a list of numbers with the same length as the hidden
+                    size divided by the number of attention heads divided by 2
+                `long_factor` (`List[float]`, *optional*):
+                    Only used with 'longrope'. The scaling factor to be applied to long contexts (<
+                    `original_max_position_embeddings`). Must be a list of numbers with the same length as the hidden
+                    size divided by the number of attention heads divided by 2
+                `low_freq_factor` (`float`, *optional*):
+                    Only used with 'llama3'. Scaling factor applied to low frequency components of the RoPE
+                `high_freq_factor` (`float`, *optional*):
+                    Only used with 'llama3'. Scaling factor applied to high frequency components of the RoPE
+        use_sliding_window (`bool`, *optional*, defaults to `False`):
+            Whether to use sliding window attention.
+        sliding_window (`int`, *optional*, defaults to 4096):
+            Sliding window attention (SWA) window size. If not specified, will default to `4096`.
+        max_window_layers (`int`, *optional*, defaults to 28):
+            The number of layers that use SWA (Sliding Window Attention). The bottom layers use SWA while the top use full attention.
+        attention_dropout (`float`, *optional*, defaults to 0.0):
+            The dropout ratio for the attention probabilities.
+        head_size (`int`, *optional*, defaults to 64):
+            Dimensionality of each RWKV attention head. Defines the hidden dimension size for RWKV attention mechanisms.
+        head_size_divisor (`int`, *optional*, defaults to 8):
+            Constraint for head_size initialization, typically set to the square root of head_size. Ensures divisibility
+            between hidden_size and head_size.
+        wkv_version (`int`, *optional*, defaults to 7):
+            Version of RWKV attention implementation. Currently supports:
+            - 6: Original implementation requiring `wkv_has_gate=True` and `wkv_use_vfirst=False`
+            - 7: Improved version requiring `wkv_use_vfirst=True`
+        wkv_has_gate (`bool`, *optional*, defaults to False):
+            Whether to include gating mechanism in RWKV attention. Required for version 6.
+        wkv_has_group_norm (`bool`, *optional*, defaults to True):
+            Whether to apply group normalization in RWKV attention layers.
+        wkv_use_vfirst (`bool`, *optional*, defaults to True):
+            Whether to prioritize value projection in RWKV attention computation. Required for version 7.
+        wkv_layers (`Union[str, List[int]]`, *optional*, defaults to None):
+            Specifies which layers use RWKV attention:
+            - `"full"` or `None`: All layers use RWKV
+            - List of integers: Only specified layers (e.g., `[0,1,2]`) use RWKV attention
+
+    ```python
+    >>> from transformers import RwkvHybridModel, RwkvHybridConfig
+
+    >>> # Initializing a RwkvHybrid style configuration
+    >>> configuration = RwkvHybridConfig()
+
+    >>> # Initializing a model from the RwkvHybrid-7B style configuration
+    >>> model = RwkvHybridModel(configuration)
+
+    >>> # Accessing the model configuration
+    >>> configuration = model.config
+    ```"""
+
+    model_type = "rwkv_hybrid"
+    keys_to_ignore_at_inference = ["past_key_values"]
+
+    # Default tensor parallel plan for base model `RwkvHybrid`
+    base_model_tp_plan = {
+        "layers.*.self_attn.q_proj": "colwise",
+        "layers.*.self_attn.k_proj": "colwise",
+        "layers.*.self_attn.v_proj": "colwise",
+        "layers.*.self_attn.o_proj": "rowwise",
+        "layers.*.mlp.gate_proj": "colwise",
+        "layers.*.mlp.up_proj": "colwise",
+        "layers.*.mlp.down_proj": "rowwise",
+    }
+
+    def __init__(
+        self,
+        vocab_size: int = 151936,
+        hidden_size: int = 4096,
+        intermediate_size: int = 22016,
+        num_hidden_layers: int = 32,
+        num_attention_heads: int = 32,
+        num_key_value_heads: int = 32,
+        head_size: int = 64,
+        head_size_divisor: int = 8,
+        hidden_act: str = "silu",
+        max_position_embeddings: int = 32768,
+        initializer_range: float = 0.02,
+        rms_norm_eps: float = 1e-6,
+        use_cache: bool = True,
+        tie_word_embeddings: bool = False,
+        rope_theta: float = 10000.0,
+        rope_scaling: Optional[dict] = None,
+        use_sliding_window: bool = False,
+        sliding_window: int = 4096,
+        max_window_layers: int = 28,
+        attention_dropout: float = 0.0,
+        wkv_version: int = 7,
+        wkv_has_gate: bool = False,
+        wkv_has_group_norm: bool = True,
+        wkv_use_vfirst: bool = True,
+        wkv_layers: Optional[Union[str, List[int]]] = None,
+        **kwargs,
+    ):
+        self.vocab_size = vocab_size
+        self.max_position_embeddings = max_position_embeddings
+        self.hidden_size = hidden_size
+        self.intermediate_size = intermediate_size
+        self.num_hidden_layers = num_hidden_layers
+        self.num_wkv_heads = hidden_size // head_size
+        assert hidden_size % head_size == 0, "hidden_size must be divisible by head_size"
+        self.num_attention_heads = num_attention_heads
+        self.use_sliding_window = use_sliding_window
+        self.sliding_window = sliding_window if use_sliding_window else None
+        self.max_window_layers = max_window_layers
+        self.head_size = head_size
+        self.head_size_divisor = head_size_divisor
+        self.wkv_version = wkv_version
+
+        self.wkv_has_gate = wkv_has_gate
+        self.wkv_has_group_norm = wkv_has_group_norm
+        self.wkv_use_vfirst = wkv_use_vfirst
+
+        if self.wkv_version == 7:
+            assert self.wkv_use_vfirst, "wkv_use_vfirst must be True for wkv_version 7"
+        elif self.wkv_version == 6:
+            assert self.wkv_has_gate, "wkv_has_gate must be True for wkv_version 6"
+            assert not self.wkv_use_vfirst, "wkv_use_vfirst must be False for wkv_version 6"
+        else:
+            raise NotImplementedError(f"Unsupported wkv_version: {self.wkv_version}, \
+                wkv_version must be 6 or 7")
+
+        if wkv_layers == "full" or wkv_layers == None:
+            self.wkv_layers = list(range(num_hidden_layers))
+        elif isinstance(wkv_layers, list):
+            if all(isinstance(layer, int) for layer in wkv_layers):
+                self.wkv_layers = wkv_layers
+            else:
+                raise ValueError("All elements in wkv_layers must be integers.")
+        else:
+            raise TypeError("wkv_layers must be either 'full', None, or a list of integers.")
+
+        # for backward compatibility
+        if num_key_value_heads is None:
+            num_key_value_heads = num_attention_heads
+
+        self.num_key_value_heads = num_key_value_heads
+        self.hidden_act = hidden_act
+        self.initializer_range = initializer_range
+        self.rms_norm_eps = rms_norm_eps
+        self.use_cache = use_cache
+        self.rope_theta = rope_theta
+        self.rope_scaling = rope_scaling
+        self.attention_dropout = attention_dropout
+        # Validate the correctness of rotary position embeddings parameters
+        # BC: if there is a 'type' field, move it to 'rope_type'.
+        if self.rope_scaling is not None and "type" in self.rope_scaling:
+            self.rope_scaling["rope_type"] = self.rope_scaling["type"]
+        rope_config_validation(self)
+
+        super().__init__(
+            tie_word_embeddings=tie_word_embeddings,
+            **kwargs,
+        )
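The `wkv_version` and `wkv_layers` checks in `__init__` can be tried without installing `transformers`. The sketch below restates them as two standalone functions. The names `check_wkv_flags` and `resolve_wkv_layers` are hypothetical and are not part of the committed file. One deliberate difference from the commit is that the sketch raises `ValueError` where the commit uses `assert`, because assertions are skipped when Python runs with `-O`.

```python
from typing import List, Optional, Union


def check_wkv_flags(wkv_version: int, wkv_has_gate: bool, wkv_use_vfirst: bool) -> None:
    """Mirror the version-specific flag constraints from RwkvHybridConfig.__init__."""
    if wkv_version == 7:
        if not wkv_use_vfirst:
            raise ValueError("wkv_use_vfirst must be True for wkv_version 7")
    elif wkv_version == 6:
        if not wkv_has_gate:
            raise ValueError("wkv_has_gate must be True for wkv_version 6")
        if wkv_use_vfirst:
            raise ValueError("wkv_use_vfirst must be False for wkv_version 6")
    else:
        raise NotImplementedError(f"Unsupported wkv_version: {wkv_version}")


def resolve_wkv_layers(wkv_layers: Optional[Union[str, List[int]]],
                       num_hidden_layers: int) -> List[int]:
    """Expand 'full' or None to every layer index; pass an integer list through."""
    if wkv_layers is None or wkv_layers == "full":
        return list(range(num_hidden_layers))
    if isinstance(wkv_layers, list):
        if all(isinstance(layer, int) for layer in wkv_layers):
            return wkv_layers
        raise ValueError("All elements in wkv_layers must be integers.")
    raise TypeError("wkv_layers must be either 'full', None, or a list of integers.")


# The committed config.json sets wkv_version=7 and wkv_has_gate=true and leaves
# wkv_use_vfirst and wkv_layers at their defaults (True and None).
check_wkv_flags(wkv_version=7, wkv_has_gate=True, wkv_use_vfirst=True)
print(len(resolve_wkv_layers(None, 28)))  # every one of the 28 layers uses RWKV
```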

figures/architecture.png

ADDED


generation_config.json

ADDED

@@ -0,0 +1,6 @@
+{
+  "_from_model_config": true,
+  "bos_token_id": 151643,
+  "eos_token_id": 151645,
+  "transformers_version": "4.48.0.dev0"
+}

hybrid_cache.py

ADDED

|

@@ -0,0 +1,154 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import torch

|

| 2 |

+

from typing import Any, Dict, Optional, Union

|

| 3 |

+

from transformers.cache_utils import DynamicCache

|

| 4 |

+

|

| 5 |

+

|

| 6 |

+

class TimeMixState:

|

| 7 |

+

def __init__(self, shift_state: torch.Tensor, wkv_state: torch.Tensor):

|

| 8 |

+

self.shift_state = shift_state

|

| 9 |

+

self.wkv_state = wkv_state

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

class ChannelMixState:

|

| 13 |

+

def __init__(self, shift_state: torch.Tensor):

|

| 14 |

+

self.shift_state = shift_state

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

class BlockState:

|

| 18 |

+

def __init__(self, time_mix_state: TimeMixState,

|

| 19 |

+

channel_mix_state: ChannelMixState):

|

| 20 |

+

self.time_mix_state = time_mix_state

|

| 21 |

+

self.channel_mix_state = channel_mix_state

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

class BlockStateList:

|

| 25 |

+

def __init__(self, shift_states, wkv_states):

|

| 26 |

+

self.wkv_states = wkv_states

|

| 27 |

+

self.shift_states = shift_states

|

| 28 |

+

|

| 29 |

+

@staticmethod

|

| 30 |

+

def create(N, B, C, H, device, dtype):

|

| 31 |

+

result = BlockStateList.empty(N, B, C, H, device, dtype)

|

| 32 |

+

result.wkv_states[:] = 0

|

| 33 |

+

result.wkv_states[:] = 0

|

| 34 |

+

result.shift_states[:] = 0

|

| 35 |

+

return result

|

| 36 |

+

|

| 37 |

+

@staticmethod

|

| 38 |

+

def empty(N, B, C, H, device, dtype):

|

| 39 |

+

wkv_states = torch.empty((N, B, H, C//H, C//H),

|

| 40 |

+

device=device,

|

| 41 |

+

dtype=torch.bfloat16)

|

| 42 |

+

shift_states = torch.empty((N, 2, B, C), device=device, dtype=dtype)

|

| 43 |

+

return BlockStateList(shift_states, wkv_states)

|

| 44 |

+

|

| 45 |

+

def __getitem__(self, layer: int):

|

| 46 |

+

return BlockState(

|

| 47 |

+

TimeMixState(self.shift_states[layer, 0], self.wkv_states[layer]),

|

| 48 |

+

ChannelMixState(self.shift_states[layer, 1]))

|

| 49 |

+

|

| 50 |

+

def __setitem__(self, layer: int, state: BlockState):

|

| 51 |

+

self.shift_states[layer, 0] = state.time_mix_state.shift_state

|

| 52 |

+

self.wkv_states[layer] = state.time_mix_state.wkv_state

|

| 53 |

+

self.shift_states[layer, 1] = state.channel_mix_state.shift_state

|

| 54 |

+

|

| 55 |

+

|

| 56 |

+

class HybridCache(DynamicCache):

|

| 57 |

+

def __init__(self) -> None:

|

| 58 |

+

super().__init__()

|

| 59 |

+

self.rwkv_layers = set()

|

| 60 |

+

|

| 61 |

+

def __repr__(self) -> str:

|

| 62 |

+

rwkv_layers = f"HybridCache(rwkv_layers={self.rwkv_layers})"

|

| 63 |

+

# count the number of key_cache and value_cache

|

| 64 |

+

key_cache_count = sum(len(cache) for cache in self.key_cache)

|

| 65 |

+

value_cache_count = sum(len(cache) for cache in self.value_cache)

|

| 66 |

+

count_info = rwkv_layers + \

|

| 67 |

+

f", key_cache_count={key_cache_count}, value_cache_count={value_cache_count}"

|

| 68 |

+

memories = 0

|

| 69 |

+

seq_length = self.get_seq_length()

|

| 70 |

+

for cache in self.value_cache:

|

| 71 |

+

for data in cache:

|

| 72 |

+

if not isinstance(data, torch.Tensor):

|

| 73 |

+

memories += data.time_mix_state.wkv_state.numel()

|

| 74 |

+

else:

|

| 75 |

+

memories += data.numel()

|

| 76 |

+

count_info += f", memories={memories / 1024/1024}MB, seq_length={seq_length}"

|

| 77 |

+

return count_info

|

| 78 |

+

|

| 79 |

+

def update(self,

|

| 80 |

+

key_states: Union[int, torch.Tensor],

|

| 81 |

+

value_states: Union[torch.Tensor, BlockState],

|

| 82 |

+

layer_idx: int,

|

| 83 |

+

cache_kwargs: Optional[Dict[str, Any]] = None):

|

| 84 |

+

if isinstance(key_states, int) and not isinstance(value_states, torch.Tensor):

|

| 85 |

+

self.rwkv_layers.add(layer_idx)

|

| 86 |

+

if layer_idx >= len(self.key_cache):

|

| 87 |

+

self.key_cache.append([])

|

| 88 |

+

self.value_cache.append([])

|

| 89 |

+

|

| 90 |

+

if len(self.key_cache[layer_idx]) == 0:

|

| 91 |

+

self.key_cache[layer_idx].append(key_states)

|

| 92 |

+

self.value_cache[layer_idx].append(value_states)

|

| 93 |

+

else:

|

| 94 |

+

self.key_cache[layer_idx][0] = self.key_cache[layer_idx][0]+key_states

|

| 95 |

+

self.value_cache[layer_idx][0] = value_states

|

| 96 |

+

|

| 97 |

+

return key_states, value_states

|

| 98 |

+

|

| 99 |

+

return super().update(key_states, value_states, layer_idx, cache_kwargs)

|

| 100 |

+

|

| 101 |

+

    def get_seq_length(self, layer_idx: Optional[int] = 0):
        if layer_idx in self.rwkv_layers:
            return self.key_cache[layer_idx][0]
        return super().get_seq_length(layer_idx)

    def get_max_length(self):
        return super().get_max_length()

    def reorder_cache(self, beam_idx):
        return super().reorder_cache(beam_idx)

    def __getitem__(self, item):
        if item in self.rwkv_layers:
            return self.value_cache[item]
        return super().__getitem__(item)

    def offload_to_cpu(self):
        for cache in self.value_cache:
            for data in cache:
                if isinstance(data, torch.Tensor):
                    data.cpu()
                else:
                    data.time_mix_state.wkv_state.cpu()
                    data.time_mix_state.shift_state.cpu()

    def offload_to_cuda(self, device: str):
        for cache in self.value_cache:
            for data in cache:
                if isinstance(data, torch.Tensor):
                    data.cuda(device)
                else:
                    data.time_mix_state.wkv_state.cuda(device)
                    data.time_mix_state.shift_state.cuda(device)

    def offload_to_device(self, device_type: str, device_id: int = 0):
        for cache in self.value_cache:
            for data in cache:
                if isinstance(data, torch.Tensor):
                    method = getattr(data, device_type)
                    if device_type == 'cpu':
                        method()
                    else:
                        method(device_id)
                else:
                    wkv_state_method = getattr(
                        data.time_mix_state.wkv_state, device_type)
                    shift_state_method = getattr(
                        data.time_mix_state.shift_state, device_type)
                    if device_type == 'cpu':
                        wkv_state_method()
                        shift_state_method()
                    else:
                        wkv_state_method(device_id)
                        shift_state_method(device_id)
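A note on the three offload helpers in `hybrid_cache.py`: in PyTorch, `Tensor.cpu()` and `Tensor.cuda()` return a tensor on the target device rather than moving the original in place, so a call whose result is discarded leaves the cached state where it was. The sketch below illustrates the pattern of storing the returned object back into the cache. It is not the author's implementation: `FakeTensor`, `TimeMixState`, `BlockState` and `move_cache` are hypothetical stand-ins (no torch dependency, so it runs anywhere), and the nested-list layout only mirrors what the loops in those helpers appear to assume.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass(frozen=True)
class FakeTensor:
    """Hypothetical stand-in for torch.Tensor: .to() returns a NEW object."""
    name: str
    device: str = "cuda:0"

    def to(self, device: str) -> "FakeTensor":
        return FakeTensor(self.name, device)


@dataclass
class TimeMixState:
    wkv_state: FakeTensor
    shift_state: FakeTensor


@dataclass
class BlockState:
    time_mix_state: TimeMixState


CacheEntry = Union[FakeTensor, BlockState]


def move_cache(value_cache: List[List[CacheEntry]], device: str) -> None:
    """Move every cached state to `device`, keeping the returned objects."""
    for cache in value_cache:
        for i, data in enumerate(cache):
            if isinstance(data, FakeTensor):
                # Reassign into the list; the old object is left unchanged.
                cache[i] = data.to(device)
            else:
                tms = data.time_mix_state
                tms.wkv_state = tms.wkv_state.to(device)
                tms.shift_state = tms.shift_state.to(device)


value_cache = [
    [FakeTensor("kv")],
    [BlockState(TimeMixState(FakeTensor("wkv"), FakeTensor("shift")))],
]
move_cache(value_cache, "cpu")
print(value_cache[0][0].device)  # cpu
```

With real tensors the same shape would apply, for example `cache[i] = data.to(device)`, since `Tensor.to` also returns the moved tensor.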

model-00001-of-00007.safetensors

ADDED

@@ -0,0 +1,3 @@

    version https://git-lfs.github.com/spec/v1
    oid sha256:c8c13c712edc185ad5f3cbb70d1be8cf614ea758d46955d4d0457188c25f419f
    size 4994557640
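Each `.safetensors` entry in this commit is a Git LFS pointer: three `key value` lines giving the spec version, the SHA-256 of the real file, and its size in bytes. A minimal sketch of parsing such a pointer and checking a downloaded file against it, using only the standard library. `parse_lfs_pointer` and `matches_pointer` are hypothetical helper names for illustration, not part of any Git LFS tooling.

```python
import hashlib
import os
from typing import Dict


def parse_lfs_pointer(text: str) -> Dict[str, str]:
    """Split each non-empty 'key value' line of a pointer file into a dict."""
    fields: Dict[str, str] = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        key, _, value = line.strip().partition(" ")
        fields[key] = value
    return fields


def matches_pointer(path: str, pointer: Dict[str, str]) -> bool:
    """True if the file at `path` has the size and SHA-256 the pointer declares."""
    if os.path.getsize(path) != int(pointer["size"]):
        return False
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        # Hash in 1 MiB chunks so multi-gigabyte shards are never fully in memory.
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    algo, _, expected = pointer["oid"].partition(":")
    return algo == "sha256" and digest.hexdigest() == expected


# Pointer content copied from model-00001-of-00007.safetensors in this diff.
POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:c8c13c712edc185ad5f3cbb70d1be8cf614ea758d46955d4d0457188c25f419f
size 4994557640
"""
pointer = parse_lfs_pointer(POINTER)
print(pointer["size"])
```

Calling `matches_pointer("model-00001-of-00007.safetensors", pointer)` on a local copy would then confirm the download is complete and uncorrupted; the path is only an example.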

model-00002-of-00007.safetensors

ADDED

@@ -0,0 +1,3 @@

    version https://git-lfs.github.com/spec/v1
    oid sha256:6b580e7029be7d39e4611398571615478e406e0ecf102a64cf5604d9d9c948a8
    size 4872049040

model-00003-of-00007.safetensors

ADDED

@@ -0,0 +1,3 @@

    version https://git-lfs.github.com/spec/v1
    oid sha256:d3a17bf7ef65b25ca36667d241da976d2b8a92415c50518a97b7bbfc555cc133
    size 4872049096

model-00004-of-00007.safetensors

ADDED

@@ -0,0 +1,3 @@

    version https://git-lfs.github.com/spec/v1
    oid sha256:3ddef2d83f1b62d76e83e7b1c9ee6664de10d03e0a3ba9b0fafb0bd4fb2dc8ab
    size 4989489432

model-00005-of-00007.safetensors

ADDED

@@ -0,0 +1,3 @@

    version https://git-lfs.github.com/spec/v1
    oid sha256:59ba5eef2db961008887debbe34115f5883d85b0de30da22d213e6dfa1e25791
    size 4812208032

model-00006-of-00007.safetensors

ADDED

@@ -0,0 +1,3 @@

    version https://git-lfs.github.com/spec/v1
    oid sha256:b8876934389ca0245be337ccb042ba0fb39e1172edb1826c915c56107d0c2639
    size 4872049184

model-00007-of-00007.safetensors

ADDED

@@ -0,0 +1,3 @@

    version https://git-lfs.github.com/spec/v1
    oid sha256:813be839a70ed5c89a7242e95bd690c954cb1530d7a284b5dce4dd74d76b2c7f
    size 3751921648
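The sizes declared by the seven pointers can be cross-checked against the `total_size` field of `model.safetensors.index.json`, which this commit also adds. A small sketch with the values copied from this diff; reading the leftover bytes as per-file safetensors header overhead is an assumption, not something the commit states.

```python
# Shard sizes in bytes, copied from the Git LFS pointers in this commit.
SHARD_SIZES = {
    "model-00001-of-00007.safetensors": 4994557640,
    "model-00002-of-00007.safetensors": 4872049040,
    "model-00003-of-00007.safetensors": 4872049096,
    "model-00004-of-00007.safetensors": 4989489432,
    "model-00005-of-00007.safetensors": 4812208032,
    "model-00006-of-00007.safetensors": 4872049184,
    "model-00007-of-00007.safetensors": 3751921648,
}

# metadata.total_size from model.safetensors.index.json in this commit.
INDEX_TOTAL_SIZE = 33164228608

on_disk = sum(SHARD_SIZES.values())
# Bytes present in the files but not counted by the index
# (assumed here to be the safetensors headers).
overhead = on_disk - INDEX_TOTAL_SIZE

print(f"shards on disk: {on_disk} bytes ({on_disk / 2**30:.2f} GiB)")
print(f"bytes beyond index total_size: {overhead}")
```

A small positive difference is what one would expect if the index counts tensor data only; a large or negative one would suggest a missing or mismatched shard.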

model.safetensors.index.json

ADDED

@@ -0,0 +1,822 @@

    {
      "metadata": {
        "total_size": 33164228608
      },
      "weight_map": {
        "lm_head.weight": "model-00007-of-00007.safetensors",
        "model.embed_tokens.weight": "model-00001-of-00007.safetensors",
        "model.layers.0.input_layernorm.weight": "model-00001-of-00007.safetensors",
        "model.layers.0.mlp.down_proj.weight": "model-00001-of-00007.safetensors",
        "model.layers.0.mlp.gate_proj.weight": "model-00001-of-00007.safetensors",
        "model.layers.0.mlp.up_proj.weight": "model-00001-of-00007.safetensors",
        "model.layers.0.post_attention_layernorm.weight": "model-00001-of-00007.safetensors",
        "model.layers.0.self_attn.time_mixer.a0": "model-00001-of-00007.safetensors",
        "model.layers.0.self_attn.time_mixer.a1": "model-00001-of-00007.safetensors",
        "model.layers.0.self_attn.time_mixer.a2": "model-00001-of-00007.safetensors",
        "model.layers.0.self_attn.time_mixer.g1": "model-00001-of-00007.safetensors",
        "model.layers.0.self_attn.time_mixer.g2": "model-00001-of-00007.safetensors",
        "model.layers.0.self_attn.time_mixer.k_a": "model-00001-of-00007.safetensors",
        "model.layers.0.self_attn.time_mixer.k_k": "model-00001-of-00007.safetensors",
        "model.layers.0.self_attn.time_mixer.key.weight": "model-00001-of-00007.safetensors",
        "model.layers.0.self_attn.time_mixer.output.weight": "model-00001-of-00007.safetensors",