Update README.md

---

# Mantis

[Paper](https://arxiv.org/abs/2405.01483) | [Website](https://tiger-ai-lab.github.io/Mantis/) | [Github](https://github.com/TIGER-AI-Lab/Mantis) | [Models](https://huggingface.co/collections/TIGER-Lab/mantis-6619b0834594c878cdb1d6e4) | [Demo](https://huggingface.co/spaces/TIGER-Lab/Mantis)

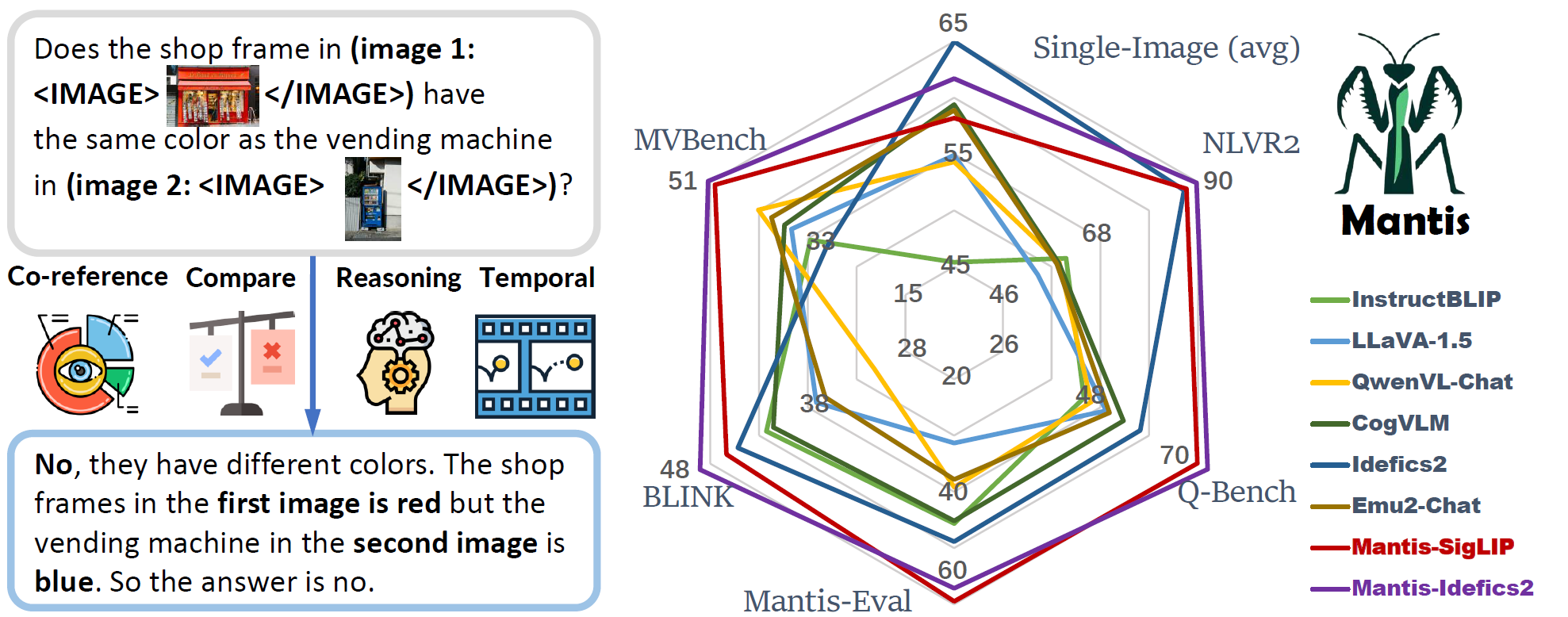

## Summary

- Mantis is a LLaMA-3-based LMM that takes **interleaved text and images as input**, trained on Mantis-Instruct with academic-level resources (i.e., 36 hours on 16xA100-40G).
- Mantis is trained to have multi-image skills, including co-reference, reasoning, comparison, and temporal understanding.
- Mantis reaches state-of-the-art performance on five multi-image benchmarks (NLVR2, Q-Bench, BLINK, MVBench, Mantis-Eval) while maintaining strong single-image performance on par with CogVLM and Emu2.

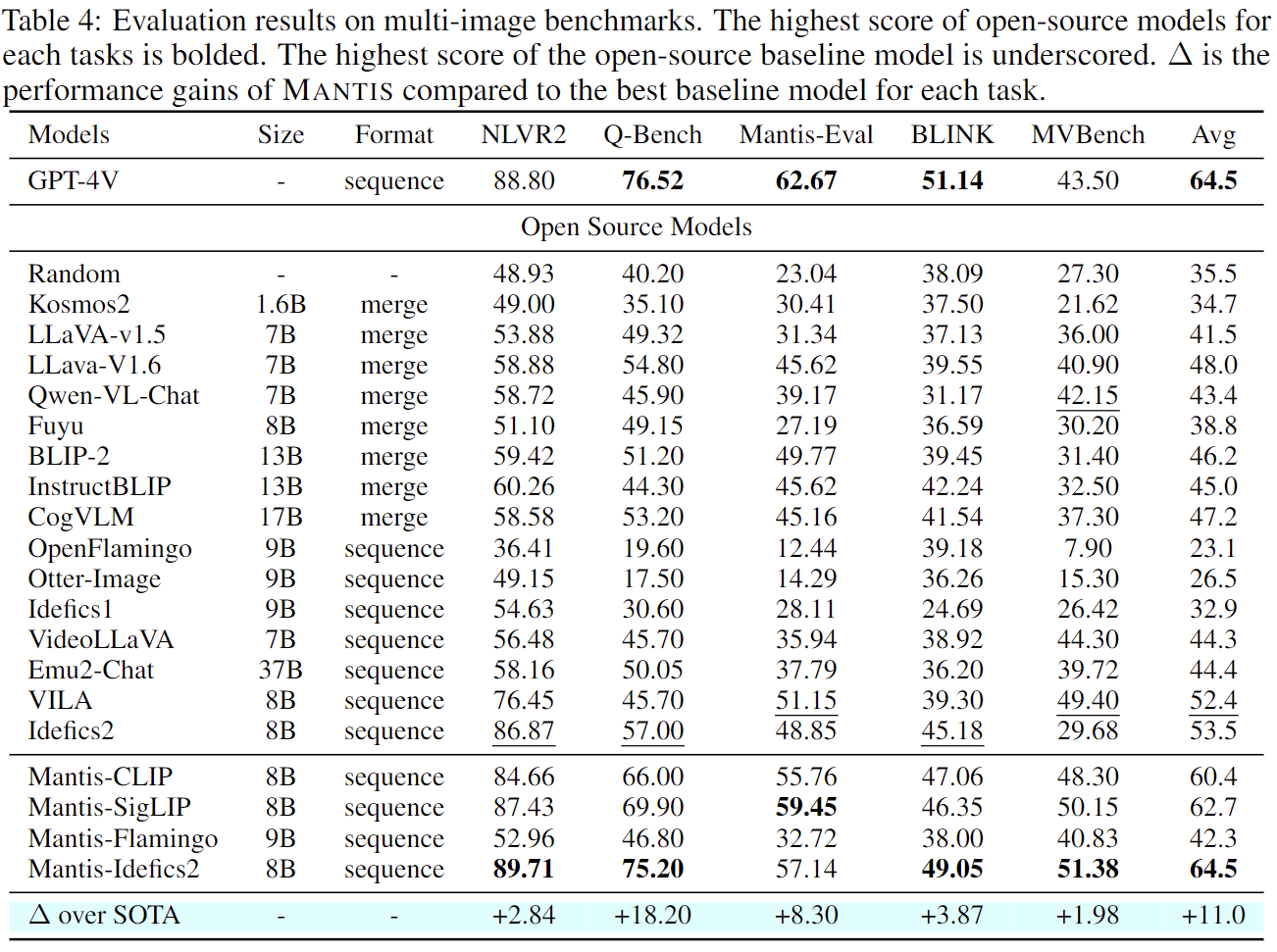

## Multi-Image Performance

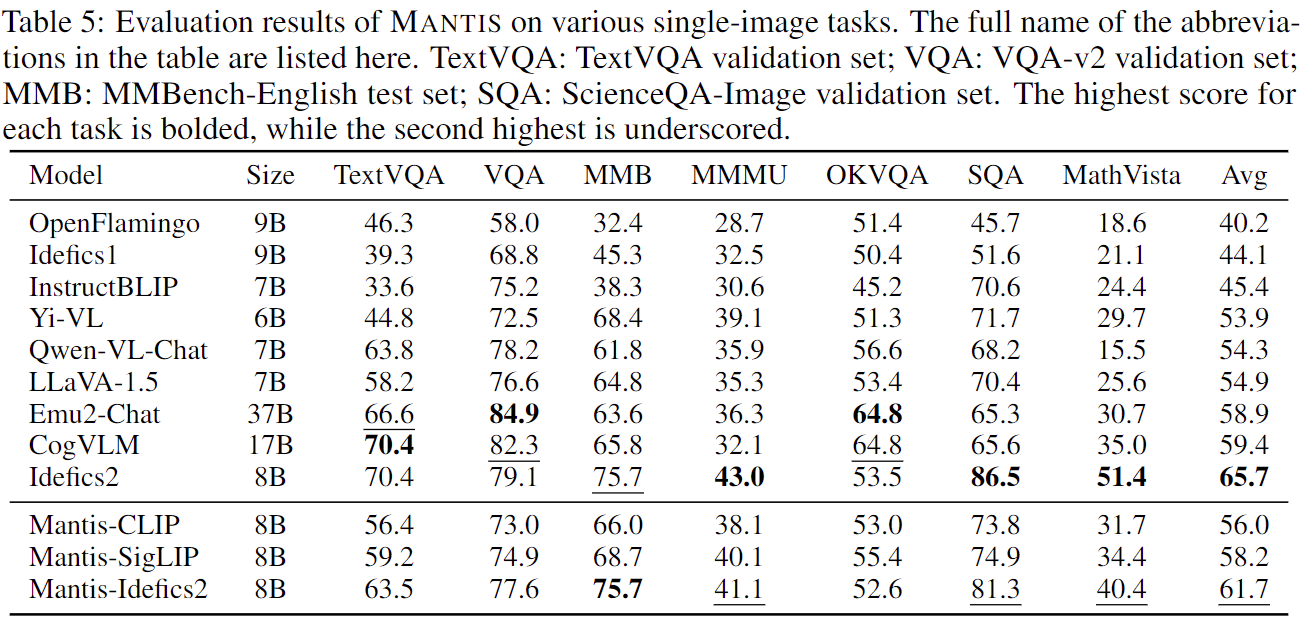

## Single-Image Performance

## How to use

### Installation

```bash
pip install git+
```
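
After installing, it can help to confirm that the package is visible to the current interpreter. The following is a minimal sketch, assuming the package installs under the top-level name `mantis` (as the imports in this README suggest); `is_installed` is a hypothetical helper and not part of Mantis.

```python
import importlib.util


def is_installed(package: str) -> bool:
    """Return True if `package` can be located by the current interpreter."""
    return importlib.util.find_spec(package) is not None


# Assumption: the install exposes a top-level package named "mantis".
if is_installed("mantis"):
    print("mantis is importable")
else:
    print("mantis not found; re-run the pip command above")
```

The check only looks up the package on the import path and does not load any model weights, so it is cheap to run before the inference example.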

### Run example inference:

```python
import torch
from PIL import Image

from mantis.models.mllava import (
    LlavaForConditionalGeneration,
    MLlavaProcessor,
    chat_mllava,
)

# load the two input images
image1 = "image1.jpg"
image2 = "image2.jpg"
images = [Image.open(image1), Image.open(image2)]

# load processor and model
processor = MLlavaProcessor.from_pretrained("TIGER-Lab/Mantis-8B-clip-llama3")
attn_implementation = None  # or "flash_attention_2"
model = LlavaForConditionalGeneration.from_pretrained(
    "TIGER-Lab/Mantis-8B-clip-llama3",
    device_map="cuda",
    torch_dtype=torch.bfloat16,
    attn_implementation=attn_implementation,
)

# greedy decoding, up to 1024 new tokens
generation_kwargs = {
    "max_new_tokens": 1024,
    "num_beams": 1,
    "do_sample": False,
}

# chat: first turn
text = "Describe the difference of <image> and <image> as much as you can."
response, history = chat_mllava(text, images, model, processor, **generation_kwargs)

print("USER: ", text)
print("ASSISTANT: ", response)

# chat: second turn, passing the history returned by the first turn
text = "How many wallets are there in image 1 and image 2 respectively?"
response, history = chat_mllava(text, images, model, processor, history=history, **generation_kwargs)

print("USER: ", text)
print("ASSISTANT: ", response)

"""
USER: Describe the difference of <image> and <image> as much as you can.
ASSISTANT: The second image has more variety in terms of colors and designs. While the first image only shows two brown leather pouches, the second image features four different pouches in various colors and designs, including a purple one with a gold coin, a red one with a gold coin, a black one with a gold coin, and a brown one with a gold coin. This variety makes the second image more visually interesting and dynamic.
USER: How many wallets are there in image 1 and image 2 respectively?
ASSISTANT: There are two wallets in image 1, and four wallets in image 2.
"""
```
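
In the example above, the first prompt contains two `<image>` placeholders and is paired with two images. A small pre-flight check can catch a mismatch before the model is called. This is only a sketch: it assumes that the first turn should carry one `<image>` placeholder per image, which the example suggests but this README does not state outright. `IMAGE_TOKEN`, `count_placeholders`, and `check_first_turn` are hypothetical names, not part of the Mantis API.

```python
IMAGE_TOKEN = "<image>"  # placeholder string as written in the example prompt


def count_placeholders(text: str) -> int:
    """Return how many image placeholders appear in the prompt."""
    return text.count(IMAGE_TOKEN)


def check_first_turn(text: str, num_images: int) -> None:
    """Raise ValueError if placeholder and image counts differ.

    Assumption: the first turn uses one placeholder per image.
    """
    found = count_placeholders(text)
    if found != num_images:
        raise ValueError(
            f"prompt has {found} {IMAGE_TOKEN} placeholder(s) "
            f"but {num_images} image(s) were given"
        )


prompt = "Describe the difference of <image> and <image> as much as you can."
check_first_turn(prompt, num_images=2)  # counts match, so nothing is raised
```

The check is meant for the first turn only: the follow-up question in the example refers to "image 1 and image 2" without repeating the placeholders.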

### Training

See [mantis/train](https://github.com/TIGER-AI-Lab/Mantis/tree/main/mantis/train) for details.

### Evaluation

See [mantis/benchmark](https://github.com/TIGER-AI-Lab/Mantis/tree/main/mantis/benchmark) for details.

## Citation

```
@inproceedings{Jiang2024MANTISIM,
  title={MANTIS: Interleaved Multi-Image Instruction Tuning},
  author={Dongfu Jiang and Xuan He and Huaye Zeng and Cong Wei and Max W.F. Ku and Qian Liu and Wenhu Chen},
  publisher={arXiv2405.01483},
  year={2024},
}
```