url | repository_url | labels_url | comments_url | events_url | html_url | id | node_id | number | title | user | labels | state | locked | assignee | assignees | milestone | comments | created_at | updated_at | closed_at | author_association | active_lock_reason | draft | pull_request | body | reactions | timeline_url | performed_via_github_app | state_reason | is_pull_request |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/778 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/778/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/778/comments | https://api.github.com/repos/huggingface/datasets/issues/778/events | https://github.com/huggingface/datasets/issues/778 | 732,449,652 | MDU6SXNzdWU3MzI0NDk2NTI= | 778 | Unexpected behavior when loading cached csv file? | {

"avatar_url": "https://avatars.githubusercontent.com/u/15979778?v=4",

"events_url": "https://api.github.com/users/dcfidalgo/events{/privacy}",

"followers_url": "https://api.github.com/users/dcfidalgo/followers",

"following_url": "https://api.github.com/users/dcfidalgo/following{/other_user}",

"gists_url": "https://api.github.com/users/dcfidalgo/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/dcfidalgo",

"id": 15979778,

"login": "dcfidalgo",

"node_id": "MDQ6VXNlcjE1OTc5Nzc4",

"organizations_url": "https://api.github.com/users/dcfidalgo/orgs",

"received_events_url": "https://api.github.com/users/dcfidalgo/received_events",

"repos_url": "https://api.github.com/users/dcfidalgo/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/dcfidalgo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/dcfidalgo/subscriptions",

"type": "User",

"url": "https://api.github.com/users/dcfidalgo"

} | [] | closed | false | null | [] | null | [

"Hi ! Thanks for reporting.\r\nThe same issue was reported in #730 (but with the encodings instead of the delimiter). It was fixed by #770 .\r\nThe fix will be available in the next release :)",

"Thanks for the prompt reply and terribly sorry for the spam! \r\nLooking forward to the new release! "

] | "2020-10-29T16:06:10Z" | "2020-10-29T21:21:27Z" | "2020-10-29T21:21:27Z" | CONTRIBUTOR | null | null | null | I read a CSV file from disk and forgot to specify the right delimiter. When I read the CSV file again with the right delimiter, it had no effect, since the cached dataset was used. I am not sure whether this is unwanted behavior, since I can always specify `download_mode="force_redownload"`. But I think it would be nice if the `delimiter` and `column_names` that were used influenced the identifier of the cached dataset.

Small snippet to reproduce the behavior:

```python

import datasets

with open("dummy_data.csv", "w") as file:

    file.write("test,this;text\n")

print(datasets.load_dataset("csv", data_files="dummy_data.csv", split="train").column_names)

# ["test", "this;text"]

print(datasets.load_dataset("csv", data_files="dummy_data.csv", split="train", delimiter=";").column_names)

# still ["test", "this;text"]

```
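The suggestion above can be sketched as follows. This only illustrates the idea and is not how `datasets` builds its cache paths: `cache_key` is a hypothetical helper that hashes the loader name, the data files, and the reader options, so that a different `delimiter` yields a different identifier.

```python
import hashlib
import json


def cache_key(builder_name, data_files, **reader_kwargs):
    """Hypothetical helper: derive a cache identifier from the loader options."""
    payload = {
        "builder": builder_name,
        "data_files": sorted(data_files),
        "kwargs": reader_kwargs,
    }
    # sort_keys makes the identifier independent of keyword-argument order.
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()[:16]


default_key = cache_key("csv", ["dummy_data.csv"])
semicolon_key = cache_key("csv", ["dummy_data.csv"], delimiter=";")

# With the options included in the hash, the two calls no longer share a cache entry.
print(default_key != semicolon_key)
```

With a scheme like this, the second call in the snippet above would miss the cache and parse the file again with the new delimiter.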

By the way, thanks a lot for this amazing library! :) | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/778/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/778/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3462 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3462/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3462/comments | https://api.github.com/repos/huggingface/datasets/issues/3462/events | https://github.com/huggingface/datasets/issues/3462 | 1,085,049,661 | I_kwDODunzps5ArIs9 | 3,462 | Update swahili_news dataset | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

} | [

{

"color": "e99695",

"default": false,

"description": "Requesting to add a new dataset",

"id": 2067376369,

"name": "dataset request",

"node_id": "MDU6TGFiZWwyMDY3Mzc2MzY5",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20request"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

}

] | null | [] | "2021-12-20T17:44:01Z" | "2021-12-21T06:24:02Z" | "2021-12-21T06:24:01Z" | MEMBER | null | null | null | Please note also: the HuggingFace version at https://huggingface.co/datasets/swahili_news is outdated. An updated version, with deduplicated text and official splits, can be found at https://zenodo.org/record/5514203.

## Adding a Dataset

- **Name:** swahili_news

Instructions to add a new dataset can be found [here](https://github.com/huggingface/datasets/blob/master/ADD_NEW_DATASET.md).

Related to:

- bigscience-workshop/data_tooling#107

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3462/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3462/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/1123 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1123/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1123/comments | https://api.github.com/repos/huggingface/datasets/issues/1123/events | https://github.com/huggingface/datasets/pull/1123 | 757,181,014 | MDExOlB1bGxSZXF1ZXN0NTMyNTk5ODQ3 | 1,123 | adding cdt dataset | {

"avatar_url": "https://avatars.githubusercontent.com/u/1654113?v=4",

"events_url": "https://api.github.com/users/abecadel/events{/privacy}",

"followers_url": "https://api.github.com/users/abecadel/followers",

"following_url": "https://api.github.com/users/abecadel/following{/other_user}",

"gists_url": "https://api.github.com/users/abecadel/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/abecadel",

"id": 1654113,

"login": "abecadel",

"node_id": "MDQ6VXNlcjE2NTQxMTM=",

"organizations_url": "https://api.github.com/users/abecadel/orgs",

"received_events_url": "https://api.github.com/users/abecadel/received_events",

"repos_url": "https://api.github.com/users/abecadel/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/abecadel/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/abecadel/subscriptions",

"type": "User",

"url": "https://api.github.com/users/abecadel"

} | [] | closed | false | null | [] | null | [

"the `ms_terms` formatting CI fails is fixed on master",

"merging since the CI is fixed on master"

] | "2020-12-04T15:19:36Z" | "2020-12-04T17:05:56Z" | "2020-12-04T17:05:56Z" | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/1123.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1123",

"merged_at": "2020-12-04T17:05:56Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1123.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1123"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1123/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1123/timeline | null | null | true |

|

https://api.github.com/repos/huggingface/datasets/issues/3673 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3673/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3673/comments | https://api.github.com/repos/huggingface/datasets/issues/3673/events | https://github.com/huggingface/datasets/issues/3673 | 1,123,010,520 | I_kwDODunzps5C78fY | 3,673 | `load_dataset("snli")` is different from dataset viewer | {

"avatar_url": "https://avatars.githubusercontent.com/u/61748653?v=4",

"events_url": "https://api.github.com/users/pietrolesci/events{/privacy}",

"followers_url": "https://api.github.com/users/pietrolesci/followers",

"following_url": "https://api.github.com/users/pietrolesci/following{/other_user}",

"gists_url": "https://api.github.com/users/pietrolesci/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/pietrolesci",

"id": 61748653,

"login": "pietrolesci",

"node_id": "MDQ6VXNlcjYxNzQ4NjUz",

"organizations_url": "https://api.github.com/users/pietrolesci/orgs",

"received_events_url": "https://api.github.com/users/pietrolesci/received_events",

"repos_url": "https://api.github.com/users/pietrolesci/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/pietrolesci/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/pietrolesci/subscriptions",

"type": "User",

"url": "https://api.github.com/users/pietrolesci"

} | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

},

{

"color": "E5583E",

"default": false,

"description": "Related to the dataset viewer on huggingface.co",

"id": 3470211881,

"name": "dataset-viewer",

"node_id": "LA_kwDODunzps7O1zsp",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset-viewer"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/severo",

"id": 1676121,

"login": "severo",

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"organizations_url": "https://api.github.com/users/severo/orgs",

"received_events_url": "https://api.github.com/users/severo/received_events",

"repos_url": "https://api.github.com/users/severo/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"type": "User",

"url": "https://api.github.com/users/severo"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/1676121?v=4",

"events_url": "https://api.github.com/users/severo/events{/privacy}",

"followers_url": "https://api.github.com/users/severo/followers",

"following_url": "https://api.github.com/users/severo/following{/other_user}",

"gists_url": "https://api.github.com/users/severo/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/severo",

"id": 1676121,

"login": "severo",

"node_id": "MDQ6VXNlcjE2NzYxMjE=",

"organizations_url": "https://api.github.com/users/severo/orgs",

"received_events_url": "https://api.github.com/users/severo/received_events",

"repos_url": "https://api.github.com/users/severo/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/severo/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/severo/subscriptions",

"type": "User",

"url": "https://api.github.com/users/severo"

}

] | null | [

"Yes, we decided to replace the encoded label with the corresponding label when possible in the dataset viewer. But\r\n1. maybe it's the wrong default\r\n2. we could find a way to show both (with a switch, or showing both ie. `0 (neutral)`).\r\n",

"Hi @severo,\r\n\r\nThanks for clarifying. \r\n\r\nI think this default is a bit counterintuitive for the user. However, this is a personal opinion that might not be general. I think it is nice to have the actual (non-encoded) labels in the viewer. On the other hand, it would be nice to match what the user sees with what they get when they download a dataset. I don't know - I can see the difficulty of choosing a default :)\r\nMaybe having non-encoded labels as a default can be useful?\r\n\r\nAnyway, I think the issue has been addressed. Thanks a lot for your super-quick answer!\r\n\r\n ",

"Thanks for the 👍 in https://github.com/huggingface/datasets/issues/3673#issuecomment-1029008349 @mariosasko @gary149 @pietrolesci, but as I proposed various solutions, it's not clear to me which you prefer. Could you write your preferences as a comment?\r\n\r\n_(note for myself: one idea per comment in the future)_",

"As I am working with seq2seq, I prefer having the label in string form rather than numeric. So the viewer is fine and the underlying dataset should be \"decoded\" (from int to str). In this way, the user does not have to search for a mapping `int -> original name` (even though is trivial to find, I reckon). Also, encoding labels is rather easy.\r\n\r\nI hope this is useful",

"I like the idea of \"0 (neutral)\". The label name can even be greyed to make it clear that it's not part of the actual item in the dataset, it's just the meaning.",

"I like @lhoestq's idea of having grayed-out labels.",

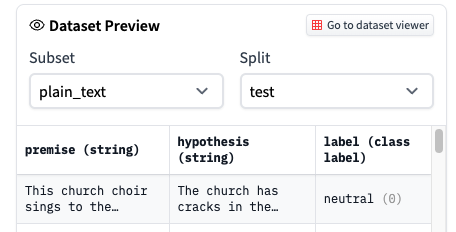

"Proposals by @gary149. Which one do you prefer? Please vote with the thumbs\r\n\r\n- 👍 \r\n\r\n \r\n\r\n- 👎 \r\n\r\n \r\n\r\n",

"I like Option 1 better as it shows clearly what the user is downloading",

"Thanks! ",

"It's [live](https://huggingface.co/datasets/glue/viewer/cola/train):\r\n\r\n<img width=\"1126\" alt=\"Capture d’écran 2022-02-14 à 10 26 03\" src=\"https://user-images.githubusercontent.com/1676121/153836716-25f6205b-96af-42d8-880a-7c09cb24c420.png\">\r\n\r\nThanks all for the help to improve the UI!",

"Love it ! thanks :)"

] | "2022-02-03T12:10:43Z" | "2022-02-16T11:22:31Z" | "2022-02-11T17:01:21Z" | NONE | null | null | null | ## Describe the bug

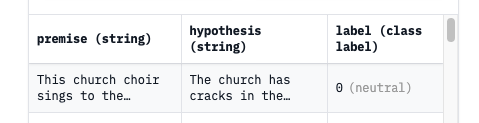

The dataset that is downloaded from the Hub via `load_dataset("snli")` is different from what is available in the dataset viewer. In the viewer the labels are not encoded (i.e., "neutral", "entailment", "contradiction"), while the downloaded dataset shows the encoded labels (i.e., 0, 1, 2).

Is this expected?
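For readers who receive the encoded labels and want the names shown in the viewer, the mapping can be sketched in plain Python. The `LABEL_NAMES` order below is an assumption made for illustration; with `datasets`, the actual names should be read from the `ClassLabel` feature (for example `dataset.features["label"].names`) rather than hard-coded.

```python
# Assumed label order, for illustration only; read the real one from the dataset features.
LABEL_NAMES = ["entailment", "neutral", "contradiction"]


def int2str(label_id, names=LABEL_NAMES):
    """Decode an integer label into its name."""
    if not 0 <= label_id < len(names):
        raise ValueError(f"unknown label id: {label_id}")
    return names[label_id]


def str2int(label_name, names=LABEL_NAMES):
    """Encode a label name back into its integer id."""
    return names.index(label_name)


encoded = [0, 2, 1]
decoded = [int2str(label_id) for label_id in encoded]
print(decoded)
```

Going in the other direction is a single lookup with `str2int`, which is why either representation can be recovered from the other once the name list is known.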

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version:

- Platform: Ubuntu 20.4

- Python version: 3.7

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3673/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3673/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/4880 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/4880/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/4880/comments | https://api.github.com/repos/huggingface/datasets/issues/4880/events | https://github.com/huggingface/datasets/pull/4880 | 1,348,452,776 | PR_kwDODunzps49qyJr | 4,880 | Added names of less-studied languages | {

"avatar_url": "https://avatars.githubusercontent.com/u/23100612?v=4",

"events_url": "https://api.github.com/users/BenjaminGalliot/events{/privacy}",

"followers_url": "https://api.github.com/users/BenjaminGalliot/followers",

"following_url": "https://api.github.com/users/BenjaminGalliot/following{/other_user}",

"gists_url": "https://api.github.com/users/BenjaminGalliot/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/BenjaminGalliot",

"id": 23100612,

"login": "BenjaminGalliot",

"node_id": "MDQ6VXNlcjIzMTAwNjEy",

"organizations_url": "https://api.github.com/users/BenjaminGalliot/orgs",

"received_events_url": "https://api.github.com/users/BenjaminGalliot/received_events",

"repos_url": "https://api.github.com/users/BenjaminGalliot/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/BenjaminGalliot/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/BenjaminGalliot/subscriptions",

"type": "User",

"url": "https://api.github.com/users/BenjaminGalliot"

} | [] | closed | false | null | [] | null | [

"OK, I removed Glottolog codes and only added ISO 639-3 ones. The former are for the moment in corpus card description, language details, and in subcorpora names.",

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_4880). All of your documentation changes will be reflected on that endpoint."

] | "2022-08-23T19:32:38Z" | "2022-08-24T12:52:46Z" | "2022-08-24T12:52:46Z" | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/4880.diff",

"html_url": "https://github.com/huggingface/datasets/pull/4880",

"merged_at": "2022-08-24T12:52:46Z",

"patch_url": "https://github.com/huggingface/datasets/pull/4880.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4880"

} | Added names of less-studied languages (nru – Narua and jya – Japhug) for existing datasets. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/4880/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/4880/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1527 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1527/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1527/comments | https://api.github.com/repos/huggingface/datasets/issues/1527/events | https://github.com/huggingface/datasets/pull/1527 | 764,638,504 | MDExOlB1bGxSZXF1ZXN0NTM4NjA3MjQw | 1,527 | Add : Conv AI 2 (Messed up original PR) | {

"avatar_url": "https://avatars.githubusercontent.com/u/22396042?v=4",

"events_url": "https://api.github.com/users/rkc007/events{/privacy}",

"followers_url": "https://api.github.com/users/rkc007/followers",

"following_url": "https://api.github.com/users/rkc007/following{/other_user}",

"gists_url": "https://api.github.com/users/rkc007/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/rkc007",

"id": 22396042,

"login": "rkc007",

"node_id": "MDQ6VXNlcjIyMzk2MDQy",

"organizations_url": "https://api.github.com/users/rkc007/orgs",

"received_events_url": "https://api.github.com/users/rkc007/received_events",

"repos_url": "https://api.github.com/users/rkc007/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/rkc007/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/rkc007/subscriptions",

"type": "User",

"url": "https://api.github.com/users/rkc007"

} | [] | closed | false | null | [] | null | [] | "2020-12-13T00:21:14Z" | "2020-12-13T19:14:24Z" | "2020-12-13T19:14:24Z" | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/1527.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1527",

"merged_at": "2020-12-13T19:14:24Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1527.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1527"

} | @lhoestq Sorry, I messed up the previous 2 PRs -> https://github.com/huggingface/datasets/pull/1462 -> https://github.com/huggingface/datasets/pull/1383, so I created a new one. Everything is fixed in this PR. Can you please review it?

Thanks in advance. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1527/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1527/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3809 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3809/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3809/comments | https://api.github.com/repos/huggingface/datasets/issues/3809/events | https://github.com/huggingface/datasets/issues/3809 | 1,158,143,480 | I_kwDODunzps5FB934 | 3,809 | Checksums didn't match for datasets on Google Drive | {

"avatar_url": "https://avatars.githubusercontent.com/u/11507045?v=4",

"events_url": "https://api.github.com/users/muelletm/events{/privacy}",

"followers_url": "https://api.github.com/users/muelletm/followers",

"following_url": "https://api.github.com/users/muelletm/following{/other_user}",

"gists_url": "https://api.github.com/users/muelletm/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/muelletm",

"id": 11507045,

"login": "muelletm",

"node_id": "MDQ6VXNlcjExNTA3MDQ1",

"organizations_url": "https://api.github.com/users/muelletm/orgs",

"received_events_url": "https://api.github.com/users/muelletm/received_events",

"repos_url": "https://api.github.com/users/muelletm/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/muelletm/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/muelletm/subscriptions",

"type": "User",

"url": "https://api.github.com/users/muelletm"

} | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

},

{

"color": "cfd3d7",

"default": true,

"description": "This issue or pull request already exists",

"id": 1935892865,

"name": "duplicate",

"node_id": "MDU6TGFiZWwxOTM1ODkyODY1",

"url": "https://api.github.com/repos/huggingface/datasets/labels/duplicate"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

}

] | null | [

"Hi @muelletm, thanks for reporting.\r\n\r\nThis issue was already reported and its root cause is a change in the Google Drive service. See:\r\n- #3786 \r\n\r\nWe have already fixed it. See:\r\n- #3787 \r\n\r\nUntil our next `datasets` library release, you can get this fix by installing our library from the GitHub master branch:\r\n```shell\r\npip install git+https://github.com/huggingface/datasets#egg=datasets\r\n```\r\nThen, if you had previously tried to load the data and got the checksum error, you should force the redownload of the data (before the fix, you just downloaded and cached the virus scan warning page, instead of the data file):\r\n```shell\r\nload_dataset(\"...\", download_mode=\"force_redownload\")\r\n```"

] | "2022-03-03T09:01:10Z" | "2022-03-03T09:24:58Z" | "2022-03-03T09:24:05Z" | NONE | null | null | null | ## Describe the bug

Datasets hosted on Google Drive do not seem to work right now.

Loading them fails with a checksum error.

## Steps to reproduce the bug

```python

from datasets import load_dataset

for dataset in ["head_qa", "yelp_review_full"]:

    try:

        load_dataset(dataset)

    except Exception as exception:

        print("Error", dataset, exception)

```

Here is a [colab](https://colab.research.google.com/drive/1wOtHBmL8I65NmUYakzPV5zhVCtHhi7uQ#scrollTo=cDzdCLlk-Bo4).

## Expected results

The datasets should be loaded.

## Actual results

```

Downloading and preparing dataset head_qa/es (download: 75.69 MiB, generated: 2.86 MiB, post-processed: Unknown size, total: 78.55 MiB) to /root/.cache/huggingface/datasets/head_qa/es/1.1.0/583ab408e8baf54aab378c93715fadc4d8aa51b393e27c3484a877e2ac0278e9...

Error head_qa Checksums didn't match for dataset source files:

['https://drive.google.com/u/0/uc?export=download&id=1a_95N5zQQoUCq8IBNVZgziHbeM-QxG2t']

Downloading and preparing dataset yelp_review_full/yelp_review_full (download: 187.06 MiB, generated: 496.94 MiB, post-processed: Unknown size, total: 684.00 MiB) to /root/.cache/huggingface/datasets/yelp_review_full/yelp_review_full/1.0.0/13c31a618ba62568ec8572a222a283dfc29a6517776a3ac5945fb508877dde43...

Error yelp_review_full Checksums didn't match for dataset source files:

['https://drive.google.com/uc?export=download&id=0Bz8a_Dbh9QhbZlU4dXhHTFhZQU0']

```
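To make the failure mode concrete, here is a minimal sketch of a checksum comparison. It is not the `datasets` verification code: `sha256_of` and `verify` are hypothetical helpers, and the byte strings stand in for a real data file and for an HTML warning page downloaded in its place.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    """Return the hex SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def verify(data: bytes, expected_checksum: str) -> bool:
    """Check downloaded bytes against the checksum recorded for the source file."""
    return sha256_of(data) == expected_checksum


# Stand-ins for the expected file content and for a page served instead of it.
real_file = b"id,question,answer\n1,example,42\n"
warning_page = b"<html><body>Virus scan warning</body></html>"

expected = sha256_of(real_file)  # checksum recorded when the dataset was prepared

print(verify(real_file, expected))     # the genuine file passes
print(verify(warning_page, expected))  # any other payload fails
```

Because the comparison only sees bytes, a cached copy of the wrong payload keeps failing until the file is downloaded again.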

## Environment info

- `datasets` version: 1.18.3

- Platform: Linux-5.4.144+-x86_64-with-Ubuntu-18.04-bionic

- Python version: 3.7.12

- PyArrow version: 6.0.1

| {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3809/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3809/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/3926 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3926/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3926/comments | https://api.github.com/repos/huggingface/datasets/issues/3926/events | https://github.com/huggingface/datasets/pull/3926 | 1,169,945,052 | PR_kwDODunzps40ehVP | 3,926 | Doc maintenance | {

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/stevhliu",

"id": 59462357,

"login": "stevhliu",

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"type": "User",

"url": "https://api.github.com/users/stevhliu"

} | [

{

"color": "0075ca",

"default": true,

"description": "Improvements or additions to documentation",

"id": 1935892861,

"name": "documentation",

"node_id": "MDU6TGFiZWwxOTM1ODkyODYx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/documentation"

}

] | closed | false | null | [] | null | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_3926). All of your documentation changes will be reflected on that endpoint."

] | "2022-03-15T17:00:46Z" | "2022-03-15T19:27:15Z" | "2022-03-15T19:27:12Z" | MEMBER | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/3926.diff",

"html_url": "https://github.com/huggingface/datasets/pull/3926",

"merged_at": "2022-03-15T19:27:12Z",

"patch_url": "https://github.com/huggingface/datasets/pull/3926.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/3926"

} | This PR adds some minor maintenance to the docs. The main fix is properly linking to pages in the callouts because some of the links would just redirect to a non-existent section on the same page. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3926/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3926/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1935 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1935/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1935/comments | https://api.github.com/repos/huggingface/datasets/issues/1935/events | https://github.com/huggingface/datasets/pull/1935 | 814,623,827 | MDExOlB1bGxSZXF1ZXN0NTc4NTgyMzk1 | 1,935 | add CoVoST2 | {

"avatar_url": "https://avatars.githubusercontent.com/u/27137566?v=4",

"events_url": "https://api.github.com/users/patil-suraj/events{/privacy}",

"followers_url": "https://api.github.com/users/patil-suraj/followers",

"following_url": "https://api.github.com/users/patil-suraj/following{/other_user}",

"gists_url": "https://api.github.com/users/patil-suraj/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/patil-suraj",

"id": 27137566,

"login": "patil-suraj",

"node_id": "MDQ6VXNlcjI3MTM3NTY2",

"organizations_url": "https://api.github.com/users/patil-suraj/orgs",

"received_events_url": "https://api.github.com/users/patil-suraj/received_events",

"repos_url": "https://api.github.com/users/patil-suraj/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/patil-suraj/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/patil-suraj/subscriptions",

"type": "User",

"url": "https://api.github.com/users/patil-suraj"

} | [] | closed | false | null | [] | null | [

"@patrickvonplaten \r\nI removed the mp3 files, dummy_data is much smaller now!"

] | "2021-02-23T16:28:16Z" | "2021-02-24T18:09:32Z" | "2021-02-24T18:05:09Z" | MEMBER | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/1935.diff",

"html_url": "https://github.com/huggingface/datasets/pull/1935",

"merged_at": "2021-02-24T18:05:09Z",

"patch_url": "https://github.com/huggingface/datasets/pull/1935.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/1935"

} | This PR adds the CoVoST2 dataset for speech translation and ASR.

https://github.com/facebookresearch/covost#covost-2

The dataset requires manual download as the download page requests an email address and the URLs are temporary.

The dummy data is a bit bigger because of the mp3 files and 36 configs. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 1,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/1935/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/1935/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/3324 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/3324/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/3324/comments | https://api.github.com/repos/huggingface/datasets/issues/3324/events | https://github.com/huggingface/datasets/issues/3324 | 1,064,661,212 | I_kwDODunzps4_dXDc | 3,324 | Can't import `datasets` in python 3.10 | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

}

] | null | [] | "2021-11-26T16:06:14Z" | "2021-11-26T16:31:23Z" | "2021-11-26T16:31:23Z" | MEMBER | null | null | null | When importing `datasets` I'm getting this error in python 3.10:

```python

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/Users/quentinlhoest/Desktop/hf/nlp/src/datasets/__init__.py", line 34, in <module>

from .arrow_dataset import Dataset, concatenate_datasets

File "/Users/quentinlhoest/Desktop/hf/nlp/src/datasets/arrow_dataset.py", line 47, in <module>

from .arrow_reader import ArrowReader

File "/Users/quentinlhoest/Desktop/hf/nlp/src/datasets/arrow_reader.py", line 33, in <module>

from .table import InMemoryTable, MemoryMappedTable, Table, concat_tables

File "/Users/quentinlhoest/Desktop/hf/nlp/src/datasets/table.py", line 334, in <module>

class InMemoryTable(TableBlock):

File "/Users/quentinlhoest/Desktop/hf/nlp/src/datasets/table.py", line 361, in InMemoryTable

def from_pandas(cls, *args, **kwargs):

File "/Users/quentinlhoest/Desktop/hf/nlp/src/datasets/table.py", line 24, in wrapper

out = wraps(arrow_table_method)(method)

File "/Users/quentinlhoest/.pyenv/versions/3.10.0/lib/python3.10/functools.py", line 61, in update_wrapper

wrapper.__wrapped__ = wrapped

AttributeError: readonly attribute

```

This makes the conda build fail.
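
A minimal sketch of what appears to trigger this, assuming the decorator at `table.py` line 24 ends up calling `functools.wraps` on a `classmethod` object. On Python 3.10, `classmethod` objects seem to expose a read-only `__wrapped__`, so `update_wrapper` cannot assign it. The names `inject_doc`, `reference` and `build` below are hypothetical and are not the library's code. Wrapping the plain function first and applying `classmethod` last avoids that assignment:

```python
import functools


def reference(x):
    """Reference docstring."""
    return x


def inject_doc(source):
    # Hypothetical helper: copy metadata (name, docstring, ...) from `source`
    # onto the decorated callable via functools.wraps.
    def decorator(func):
        return functools.wraps(source)(func)

    return decorator


class Table:
    # classmethod is applied last (outermost), so functools.wraps only ever
    # sees a plain function, whose __wrapped__ attribute is writable.
    @classmethod
    @inject_doc(reference)
    def build(cls, x):
        return (cls.__name__, x)


result = Table.build(3)
doc = Table.build.__doc__
```

With this ordering the metadata is still copied, and the class imports without touching `__wrapped__` on a `classmethod` object.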

I'm opening a PR to fix this and do a patch release 1.16.1 | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/3324/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/3324/timeline | null | completed | false |

https://api.github.com/repos/huggingface/datasets/issues/363 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/363/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/363/comments | https://api.github.com/repos/huggingface/datasets/issues/363/events | https://github.com/huggingface/datasets/pull/363 | 653,821,172 | MDExOlB1bGxSZXF1ZXN0NDQ2NjY0NDIy | 363 | Adding support for generic multi dimensional tensors and auxillary image data for multimodal datasets | {

"avatar_url": "https://avatars.githubusercontent.com/u/14030663?v=4",

"events_url": "https://api.github.com/users/eltoto1219/events{/privacy}",

"followers_url": "https://api.github.com/users/eltoto1219/followers",

"following_url": "https://api.github.com/users/eltoto1219/following{/other_user}",

"gists_url": "https://api.github.com/users/eltoto1219/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/eltoto1219",

"id": 14030663,

"login": "eltoto1219",

"node_id": "MDQ6VXNlcjE0MDMwNjYz",

"organizations_url": "https://api.github.com/users/eltoto1219/orgs",

"received_events_url": "https://api.github.com/users/eltoto1219/received_events",

"repos_url": "https://api.github.com/users/eltoto1219/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/eltoto1219/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/eltoto1219/subscriptions",

"type": "User",

"url": "https://api.github.com/users/eltoto1219"

} | [] | closed | false | null | [] | null | [

"Thank you! I just marked this as a draft PR. It probably would be better to create specific Array2D and Array3D classes as needed instead of a generic MultiArray for now, it should simplify the code a lot too so, I'll update it as such. Also i was meaning to reply earlier, but I wanted to thank you for the testing script you sent me earlier since it ended up being tremendously helpful. ",

"Okay, I just converted the MultiArray class to Array2D, and got rid of all those \"globals()\"! \r\n\r\nThe main issues I had were that when including a \"pa.ExtensionType\" as a column, the ordinary methods to batch the data would not work and it would throw me some mysterious error, so I first cleaned up my code to order the row to match the schema (because when including extension types the row is disordered ) and then made each row a pa.Table and then concatenated all the tables. Also each n-dimensional vector class we implement will be size invariant which is some good news. ",

"Okay awesome! I just added your suggestions and changed up my recursive functions. \r\n\r\nHere is the traceback for the when I use the original code in the write_on_file method:\r\n\r\n```\r\nTraceback (most recent call last):\r\n File \"<stdin>\", line 33, in <module>\r\n File \"/home/eltoto/nlp/src/nlp/arrow_writer.py\", line 214, in finalize\r\n self.write_on_file()\r\n File \"/home/eltoto/nlp/src/nlp/arrow_writer.py\", line 134, in write_on_file\r\n pa_array = pa.array(self.current_rows, type=self._type)\r\n File \"pyarrow/array.pxi\", line 269, in pyarrow.lib.array\r\n File \"pyarrow/array.pxi\", line 38, in pyarrow.lib._sequence_to_array\r\n File \"pyarrow/error.pxi\", line 106, in pyarrow.lib.check_status\r\npyarrow.lib.ArrowNotImplementedError: MakeBuilder: cannot construct builder for type extension<arrow.py_extension_type>\r\n\r\nshell returned 1\r\n```\r\n\r\nI think when trying to cast an extension array within a list of dictionaries, some method gets called that bugs out Arrow and somehow doesn't get called when adding a single row to a a table and then appending multiple tables together. I tinkered with this for a while but could not find any workaround. \r\n\r\nIn the case that this new method causes bad compression/worse performance, we can explicitly set the batch size in the pa.Table.to_batches(***batch_size***) method, which will return a list of batches. Perhaps, we can check that the batch size is not too large converting the table to batches after X many rows are appended to it by following the batch_size check below.",

"> I think when trying to cast an extension array within a list of dictionaries, some method gets called that bugs out Arrow and somehow doesn't get called when adding a single row to a a table and then appending multiple tables together. I tinkered with this for a while but could not find any workaround.\r\n\r\nIndeed that's weird.\r\n\r\n> In the case that this new method causes bad compression/worse performance, we can explicitly set the batch size in the pa.Table.to_batches(batch_size) method, which will return a list of batches. Perhaps, we can check that the batch size is not too large converting the table to batches after X many rows are appended to it by following the batch_size check below.\r\n\r\nThe argument of `pa.Table.to_batches` is not `batch_size` but `max_chunksize`, which means that right now it would have no effects (each chunk is of length 1).\r\n\r\nWe can fix that just by doing `entries.combine_chunks().to_batches(batch_size)`. In that case it would write by chunk of 1000 which is what we want. I don't think it will slow down the writing by much, but we may have to do a benchmark just to make sure. If speed is ok we could even replace the original code to always write chunks this way.\r\n\r\nDo you still have errors that need to be fixed ?",

"@lhoestq Nope all should be good! \r\n\r\nWould you like me to add the entries.combine_chunks().to_batch_size() code + benchmark?",

"> @lhoestq Nope all should be good!\r\n\r\nAwesome :)\r\n\r\nI think it would be good to start to add some tests then.\r\nYou already have `test_multi_array.py` which is a good start, maybe you can place it in /tests and make it a `unittest.TestCase` ?\r\n\r\n> Would you like me to add the entries.combine_chunks().to_batch_size() code + benchmark?\r\n\r\nThat would be interesting. We don't want reading/writing to be the bottleneck of dataset processing for example in terms of speed. Maybe we could test the write + read speed of different datasets:\r\n- write speed + read speed a dataset with `nlp.Array2D` features\r\n- write speed + read speed a dataset with `nlp.Sequence(nlp.Sequence(nlp.Value(\"float32\")))` features\r\n- write speed + read speed a dataset with `nlp.Sequence(nlp.Value(\"float32\"))` features (same data but flatten)\r\nIt will be interesting to see the influence of `.combine_chunks()` on the `Array2D` test too.\r\n\r\nWhat do you think ?",

"Well actually it looks like we're still having the `print(dataset[0])` error no ?",

"I just tested your code to try to understand better.\r\n\r\n\r\n- First thing you must know is that we've switched from `dataset._data.to_pandas` to `dataset._data.to_pydict` by default when we call `dataset[0]` in #423 . Right now it raises an error but it can be fixed by adding this method to `ExtensionArray2D`:\r\n\r\n```python\r\n def to_pylist(self):\r\n return self.to_numpy().tolist()\r\n```\r\n\r\n- Second, I noticed that `ExtensionArray2D.to_numpy()` always return a (5, 5) shape in your example. I thought `ExtensionArray` was for possibly multiple examples and so I was expecting a shape like (1, 5, 5) for example. Did I miss something ?\r\nTherefore when I apply the fix I mentioned (adding to_pylist), it returns one example per row in each image (in your example of 2 images of shape 5x5, I get `len(dataset._data.to_pydict()[\"image\"]) == 10 # True`)\r\n\r\n[EDIT] I changed the reshape step in `ExtensionArray2D.to_numpy()` by\r\n```python\r\nnumpy_arr = numpy_arr.reshape(len(self), *ExtensionArray2D._construct_shape(self.storage))\r\n```\r\nand it did the job: `len(dataset._data.to_pydict()[\"image\"]) == 2 # True`\r\n\r\n- Finally, I was able to make `to_pandas` work though, by implementing custom array dtype in pandas with arrow conversion (I got inspiration from [here](https://gist.github.com/Eastsun/a59fb0438f65e8643cd61d8c98ec4c08) and [here](https://pandas.pydata.org/pandas-docs/version/1.0.0/development/extending.html#compatibility-with-apache-arrow))\r\n\r\nMaybe you could add me in your repo so I can open a PR to add these changes to your branch ?",

"`combine_chunks` doesn't seem to work btw:\r\n`ArrowNotImplementedError: concatenation of extension<arrow.py_extension_type>`",

"> > @lhoestq Nope all should be good!\r\n> \r\n> Awesome :)\r\n> \r\n> I think it would be good to start to add some tests then.\r\n> You already have `test_multi_array.py` which is a good start, maybe you can place it in /tests and make it a `unittest.TestCase` ?\r\n> \r\n> > Would you like me to add the entries.combine_chunks().to_batch_size() code + benchmark?\r\n> \r\n> That would be interesting. We don't want reading/writing to be the bottleneck of dataset processing for example in terms of speed. Maybe we could test the write + read speed of different datasets:\r\n> \r\n> * write speed + read speed a dataset with `nlp.Array2D` features\r\n> * write speed + read speed a dataset with `nlp.Sequence(nlp.Sequence(nlp.Value(\"float32\")))` features\r\n> * write speed + read speed a dataset with `nlp.Sequence(nlp.Value(\"float32\"))` features (same data but flatten)\r\n> It will be interesting to see the influence of `.combine_chunks()` on the `Array2D` test too.\r\n> \r\n> What do you think ?\r\n\r\nYa! that should be no problem at all, Ill use the timeit module and get back to you with the results sometime over the weekend.",

"Thank you for all your help getting the pandas and row indexing for the dataset to work! For `print(dataset[0])`, I considered the workaround of doing `print(dataset[\"col_name\"][0])` a temporary solution, but ya, I was never able to figure out how to previously get it to work. I'll add you to my repo right now, let me know if you do not see the invite. Also lastly, it is strange how the to_batches method is not working, so I can check that out while I add some speed tests + add the multi dim test under the unit tests this weekend. ",

"I created the PR :)\r\nI also tested `to_batches` and it works on my side",

"Sorry for the bit of delay! I just added the tests, the PR into my fork, and some speed tests. It should be fairly easy to add more tests if we need. Do you think there is anything else to checkout?",

"Cool thanks for adding the tests :) \r\n\r\nNext step is merge master into this branch.\r\nNot sure I understand what you did in your last commit, but it looks like you discarded all the changes from master ^^'\r\n\r\nWe've done some changes in the features logic on master, so let me know if you need help merging it.\r\n\r\nAs soon as we've merged from master, we'll have to make sure that we have extensive tests and we'll be good to do !\r\nAbout the lxmert dataset, we can probably keep it for another PR as soon as we have working 2d features. What do you think ?",

"We might want to merge this after tomorrow's release though to avoid potential side effects @lhoestq ",

"Yep I'm sure we can have it not for tomorrow's release but for the next one ;)",

"haha, when I tried to rebase, I ran into some conflicts. In that last commit, I restored the features.py from the previous commit on the branch in my fork because upon updating to master, the pandasdtypemanger and pandas extension types disappeared. If you actually could help me with merging in what is needed, that would actually help a lot. \r\n\r\nOther than that, ya let me go ahead and move the dataloader code out of this PR. Perhaps we could discuss in the slack channelk soon about what to do with that because we can either just support the pretraining corpus for lxmert or try to implement the full COCO and visual genome datasets (+VQA +GQA) which im sure people would be pretty happy about. \r\n\r\nAlso we can talk more tests soon too when you are free. \r\n\r\nGoodluck on the release tomorrow guys!",

"Not sure why github thinks there are conflicts here, as I just rebased from the current master branch.\r\nMerging into master locally works on my side without conflicts\r\n```\r\ngit checkout master\r\ngit reset --hard origin/master\r\ngit merge --no-ff eltoto1219/support_multi_dim_tensors_for_images\r\nMerge made by the 'recursive' strategy.\r\n datasets/lxmert_pretraining_beta/lxmert_pretraining_beta.py | 89 +++++++++++++++++++++++++++++++++++++\r\n datasets/lxmert_pretraining_beta/test_multi_array.py | 45 +++++++++++++++++++\r\n datasets/lxmert_pretraining_beta/to_arrow_data.py | 371 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++\r\n src/nlp/arrow_dataset.py | 24 +++++-----\r\n src/nlp/arrow_writer.py | 22 ++++++++--\r\n src/nlp/features.py | 229 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++---\r\n tests/test_array_2d.py | 210 +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++\r\n 7 files changed, 969 insertions(+), 21 deletions(-)\r\n create mode 100644 datasets/lxmert_pretraining_beta/lxmert_pretraining_beta.py\r\n create mode 100644 datasets/lxmert_pretraining_beta/test_multi_array.py\r\n create mode 100644 datasets/lxmert_pretraining_beta/to_arrow_data.py\r\n create mode 100644 tests/test_array_2d.py\r\n```",

"I put everything inside one commit from the master branch but the merge conflicts on github'side were still there for some reason.\r\nClosing and re-opening the PR fixed the conflict check on github's side.",

"Almost done ! It still needs a pass on the docs/comments and maybe a few more tests.\r\n\r\nI had to do several changes for type inference in the ArrowWriter to make it support custom types.",

"Ok this is now ready for review ! Thanks for your awesome work in this @eltoto1219 \r\n\r\nSummary of the changes:\r\n- added new feature type `Array2D`, that can be instantiated like `Array2D(\"float32\")` for example\r\n- added pyarrow extension type `Array2DExtensionType` and array `Array2DExtensionArray` that take care of converting from and to arrow. `Array2DExtensionType`'s storage is a list of list of any pyarrow array.\r\n- added pandas extension type `PandasArrayExtensionType` and array `PandasArrayExtensionArray` for conversion from and to arrow/python objects\r\n- refactor of the `ArrowWriter` write and write_batch functions to support extension types while preserving the type inference behavior.\r\n- added a utility object `TypedSequence` that is helpful to combine extension arrays and type inference inside the writer's methods.\r\n- added speed test for sequences writing (printed as warnings in pytest)\r\n- breaking: set disable_nullable to False by default as pyarrow's type inference returns nullable fields\r\n\r\nAnd there are plenty of new tests, mainly in `test_array2d.py` and `test_arrow_writer.py`.\r\n\r\nNote that there are some collisions in `arrow_dataset.py` with #513 so let's be careful when we'll merge this one.\r\n\r\nI know this is a big PR so feel free to ask questions",

"I'll add Array3D, 4D.. tomorrow but it should take only a few lines. The rest won't change",

"I took your comments into account and I added Array[3-5]D.\r\nI changed the storage type to fixed lengths lists. I had to update the `to_numpy` function because of that. Indeed slicing a FixedLengthListArray returns a view a of the original array, while in the previous case slicing a ListArray copies the storage.\r\n"

] | "2020-07-09T07:10:30Z" | "2020-08-24T09:59:35Z" | "2020-08-24T09:59:35Z" | CONTRIBUTOR | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/363.diff",

"html_url": "https://github.com/huggingface/datasets/pull/363",

"merged_at": "2020-08-24T09:59:35Z",

"patch_url": "https://github.com/huggingface/datasets/pull/363.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/363"

} | nlp/features.py:

The main factory class is MultiArray. Every time this class is called, it generates a corresponding pyarrow extension array and type class (and adds them to the globals for future use) for a given root data type and set of dimensions/shape. I provide examples of working with this in datasets/lxmert_pretraining_beta/test_multi_array.py

src/nlp/arrow_writer.py:

I had to add a method for writing batches that include extension array types because, despite having a unique class for each multidimensional array shape, pyarrow is unable to write any other "array-like" data class to a batch object unless it is of the type pyarrow.ExtensionType. The problem is that when writing multiple batches, the order of the schema and the data to be written get mixed up (the pyarrow datatype in the schema only refers to ExtensionArray, but each ExtensionArray subclass has a different shape) ... possibly I am missing something here and would be grateful if anyone else could take a look!

datasets/lxmert_pretraining_beta/lxmert_pretraining_beta.py & datasets/lxmert_pretraining_beta/to_arrow_data.py:

I have begun adding the data from the original LXMERT paper (https://arxiv.org/abs/1908.07490) hosted here: (https://github.com/airsplay/lxmert). The reason I am not pulling from the source of truth for each individual dataset is that it seems there will also need to be functionality to aggregate multimodal datasets to create a pre-training corpus (:sleepy: ).

For now, this is just being used to test and run edge-cases for the MultiArray feature, so I've labeled it as "beta_pretraining"!

(still working on the pretraining, just wanted to push out the new functionality sooner rather than later) | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/363/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/363/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/5449 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5449/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5449/comments | https://api.github.com/repos/huggingface/datasets/issues/5449/events | https://github.com/huggingface/datasets/pull/5449 | 1,550,801,453 | PR_kwDODunzps5INgD9 | 5,449 | Support fsspec 2023.1.0 in CI | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova"

} | [] | closed | false | null | [] | null | [

"_The documentation is not available anymore as the PR was closed or merged._",

"<details>\n<summary>Show benchmarks</summary>\n\nPyArrow==6.0.0\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | read_batch_formatted_as_numpy after write_flattened_sequence | read_batch_formatted_as_numpy after write_nested_sequence | read_batch_unformated after write_array2d | read_batch_unformated after write_flattened_sequence | read_batch_unformated after write_nested_sequence | read_col_formatted_as_numpy after write_array2d | read_col_formatted_as_numpy after write_flattened_sequence | read_col_formatted_as_numpy after write_nested_sequence | read_col_unformated after write_array2d | read_col_unformated after write_flattened_sequence | read_col_unformated after write_nested_sequence | read_formatted_as_numpy after write_array2d | read_formatted_as_numpy after write_flattened_sequence | read_formatted_as_numpy after write_nested_sequence | read_unformated after write_array2d | read_unformated after write_flattened_sequence | read_unformated after write_nested_sequence | write_array2d | write_flattened_sequence | write_nested_sequence |\n|--------|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|\n| new / old (diff) | 0.008227 / 0.011353 (-0.003126) | 0.004496 / 0.011008 (-0.006512) | 0.099319 / 0.038508 (0.060811) | 0.029929 / 0.023109 (0.006820) | 0.296686 / 0.275898 (0.020788) | 0.355372 / 0.323480 (0.031892) | 0.006864 / 0.007986 (-0.001122) | 0.003458 / 0.004328 (-0.000871) | 0.077234 / 0.004250 (0.072983) | 0.037072 / 0.037052 (0.000020) | 0.311675 / 0.258489 (0.053186) | 0.338965 / 0.293841 (0.045124) | 0.033562 / 0.128546 (-0.094985) | 0.011399 / 0.075646 (-0.064248) | 0.322406 / 0.419271 (-0.096865) | 0.043034 / 0.043533 (-0.000499) | 0.298083 / 0.255139 (0.042944) | 0.323661 / 0.283200 (0.040462) | 0.089380 / 0.141683 (-0.052303) | 1.479363 / 1.452155 (0.027208) | 1.518337 / 1.492716 (0.025620) 
|\n\n### Benchmark: benchmark_getitem\\_100B.json\n\n| metric | get_batch_of\\_1024\\_random_rows | get_batch_of\\_1024\\_rows | get_first_row | get_last_row |\n|--------|---|---|---|---|\n| new / old (diff) | 0.177822 / 0.018006 (0.159816) | 0.400806 / 0.000490 (0.400317) | 0.002121 / 0.000200 (0.001921) | 0.000074 / 0.000054 (0.000019) |\n\n### Benchmark: benchmark_indices_mapping.json\n\n| metric | select | shard | shuffle | sort | train_test_split |\n|--------|---|---|---|---|---|\n| new / old (diff) | 0.021986 / 0.037411 (-0.015426) | 0.096749 / 0.014526 (0.082223) | 0.101443 / 0.176557 (-0.075113) | 0.137519 / 0.737135 (-0.599616) | 0.105558 / 0.296338 (-0.190780) |\n\n### Benchmark: benchmark_iterating.json\n\n| metric | read 5000 | read 50000 | read_batch 50000 10 | read_batch 50000 100 | read_batch 50000 1000 | read_formatted numpy 5000 | read_formatted pandas 5000 | read_formatted tensorflow 5000 | read_formatted torch 5000 | read_formatted_batch numpy 5000 10 | read_formatted_batch numpy 5000 1000 | shuffled read 5000 | shuffled read 50000 | shuffled read_batch 50000 10 | shuffled read_batch 50000 100 | shuffled read_batch 50000 1000 | shuffled read_formatted numpy 5000 | shuffled read_formatted_batch numpy 5000 10 | shuffled read_formatted_batch numpy 5000 1000 |\n|--------|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|\n| new / old (diff) | 0.418983 / 0.215209 (0.203774) | 4.189579 / 2.077655 (2.111924) | 1.877831 / 1.504120 (0.373711) | 1.666213 / 1.541195 (0.125019) | 1.680735 / 1.468490 (0.212245) | 0.693033 / 4.584777 (-3.891744) | 3.420553 / 3.745712 (-0.325160) | 1.819647 / 5.269862 (-3.450214) | 1.144934 / 4.565676 (-3.420743) | 0.082209 / 0.424275 (-0.342066) | 0.012433 / 0.007607 (0.004826) | 0.526781 / 0.226044 (0.300737) | 5.273689 / 2.268929 (3.004760) | 2.323468 / 55.444624 (-53.121156) | 1.960508 / 6.876477 (-4.915969) | 2.035338 / 2.142072 (-0.106735) | 0.812789 / 4.805227 (-3.992438) | 0.148429 / 6.500664 
(-6.352235) | 0.064727 / 0.075469 (-0.010742) |\n\n### Benchmark: benchmark_map_filter.json\n\n| metric | filter | map fast-tokenizer batched | map identity | map identity batched | map no-op batched | map no-op batched numpy | map no-op batched pandas | map no-op batched pytorch | map no-op batched tensorflow |\n|--------|---|---|---|---|---|---|---|---|---|\n| new / old (diff) | 1.253218 / 1.841788 (-0.588569) | 13.303426 / 8.074308 (5.229118) | 13.651074 / 10.191392 (3.459682) | 0.135178 / 0.680424 (-0.545246) | 0.028483 / 0.534201 (-0.505717) | 0.393284 / 0.579283 (-0.185999) | 0.401957 / 0.434364 (-0.032407) | 0.457136 / 0.540337 (-0.083201) | 0.535835 / 1.386936 (-0.851101) |\n\n</details>\nPyArrow==latest\n\n<details>\n<summary>Show updated benchmarks!</summary>\n\n### Benchmark: benchmark_array_xd.json\n\n| metric | read_batch_formatted_as_numpy after write_array2d | read_batch_formatted_as_numpy after write_flattened_sequence | read_batch_formatted_as_numpy after write_nested_sequence | read_batch_unformated after write_array2d | read_batch_unformated after write_flattened_sequence | read_batch_unformated after write_nested_sequence | read_col_formatted_as_numpy after write_array2d | read_col_formatted_as_numpy after write_flattened_sequence | read_col_formatted_as_numpy after write_nested_sequence | read_col_unformated after write_array2d | read_col_unformated after write_flattened_sequence | read_col_unformated after write_nested_sequence | read_formatted_as_numpy after write_array2d | read_formatted_as_numpy after write_flattened_sequence | read_formatted_as_numpy after write_nested_sequence | read_unformated after write_array2d | read_unformated after write_flattened_sequence | read_unformated after write_nested_sequence | write_array2d | write_flattened_sequence | write_nested_sequence |\n|--------|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|\n| new / old (diff) | 0.006335 / 0.011353 (-0.005017) | 0.004454 / 
0.011008 (-0.006554) | 0.097565 / 0.038508 (0.059057) | 0.026917 / 0.023109 (0.003808) | 0.350779 / 0.275898 (0.074881) | 0.391979 / 0.323480 (0.068499) | 0.004648 / 0.007986 (-0.003337) | 0.003204 / 0.004328 (-0.001124) | 0.076987 / 0.004250 (0.072737) | 0.035257 / 0.037052 (-0.001796) | 0.347193 / 0.258489 (0.088704) | 0.391462 / 0.293841 (0.097621) | 0.031244 / 0.128546 (-0.097302) | 0.011460 / 0.075646 (-0.064186) | 0.321606 / 0.419271 (-0.097665) | 0.041218 / 0.043533 (-0.002315) | 0.341884 / 0.255139 (0.086745) | 0.374920 / 0.283200 (0.091720) | 0.086383 / 0.141683 (-0.055300) | 1.501750 / 1.452155 (0.049595) | 1.565060 / 1.492716 (0.072344) |\n\n### Benchmark: benchmark_getitem\\_100B.json\n\n| metric | get_batch_of\\_1024\\_random_rows | get_batch_of\\_1024\\_rows | get_first_row | get_last_row |\n|--------|---|---|---|---|\n| new / old (diff) | 0.165447 / 0.018006 (0.147441) | 0.401885 / 0.000490 (0.401395) | 0.000975 / 0.000200 (0.000775) | 0.000070 / 0.000054 (0.000015) |\n\n### Benchmark: benchmark_indices_mapping.json\n\n| metric | select | shard | shuffle | sort | train_test_split |\n|--------|---|---|---|---|---|\n| new / old (diff) | 0.024494 / 0.037411 (-0.012917) | 0.097334 / 0.014526 (0.082808) | 0.105324 / 0.176557 (-0.071232) | 0.142430 / 0.737135 (-0.594705) | 0.107249 / 0.296338 (-0.189089) |\n\n### Benchmark: benchmark_iterating.json\n\n| metric | read 5000 | read 50000 | read_batch 50000 10 | read_batch 50000 100 | read_batch 50000 1000 | read_formatted numpy 5000 | read_formatted pandas 5000 | read_formatted tensorflow 5000 | read_formatted torch 5000 | read_formatted_batch numpy 5000 10 | read_formatted_batch numpy 5000 1000 | shuffled read 5000 | shuffled read 50000 | shuffled read_batch 50000 10 | shuffled read_batch 50000 100 | shuffled read_batch 50000 1000 | shuffled read_formatted numpy 5000 | shuffled read_formatted_batch numpy 5000 10 | shuffled read_formatted_batch numpy 5000 1000 
|\n|--------|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|\n| new / old (diff) | 0.441632 / 0.215209 (0.226423) | 4.407729 / 2.077655 (2.330074) | 2.078167 / 1.504120 (0.574047) | 1.864210 / 1.541195 (0.323015) | 1.885948 / 1.468490 (0.417458) | 0.693974 / 4.584777 (-3.890803) | 3.386837 / 3.745712 (-0.358875) | 1.840291 / 5.269862 (-3.429571) | 1.150524 / 4.565676 (-3.415153) | 0.082240 / 0.424275 (-0.342035) | 0.012488 / 0.007607 (0.004881) | 0.537589 / 0.226044 (0.311545) | 5.404007 / 2.268929 (3.135078) | 2.537467 / 55.444624 (-52.907157) | 2.190775 / 6.876477 (-4.685702) | 2.224746 / 2.142072 (0.082674) | 0.799524 / 4.805227 (-4.005703) | 0.150639 / 6.500664 (-6.350025) | 0.066473 / 0.075469 (-0.008997) |\n\n### Benchmark: benchmark_map_filter.json\n\n| metric | filter | map fast-tokenizer batched | map identity | map identity batched | map no-op batched | map no-op batched numpy | map no-op batched pandas | map no-op batched pytorch | map no-op batched tensorflow |\n|--------|---|---|---|---|---|---|---|---|---|\n| new / old (diff) | 1.258559 / 1.841788 (-0.583228) | 13.773583 / 8.074308 (5.699275) | 13.964322 / 10.191392 (3.772930) | 0.156295 / 0.680424 (-0.524129) | 0.016824 / 0.534201 (-0.517377) | 0.377476 / 0.579283 (-0.201807) | 0.390163 / 0.434364 (-0.044201) | 0.442541 / 0.540337 (-0.097796) | 0.529404 / 1.386936 (-0.857532) |\n\n</details>\n</details>\n\n\n"

] | "2023-01-20T12:53:17Z" | "2023-01-20T13:32:50Z" | "2023-01-20T13:26:03Z" | MEMBER | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/5449.diff",

"html_url": "https://github.com/huggingface/datasets/pull/5449",

"merged_at": "2023-01-20T13:26:03Z",

"patch_url": "https://github.com/huggingface/datasets/pull/5449.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/5449"

} | Support fsspec 2023.1.0 in CI.

In the 2023.1.0 fsspec release, they replaced the type of `fsspec.registry`:

- from `ReadOnlyRegistry`, with an attribute called `target`

- to `MappingProxyType`, without that attribute

Consequently, we need to change our `mock_fsspec` fixtures, which relied on the `target` attribute.

Fix #5448. | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5449/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5449/timeline | null | null | true |

https://api.github.com/repos/huggingface/datasets/issues/1925 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/1925/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/1925/comments | https://api.github.com/repos/huggingface/datasets/issues/1925/events | https://github.com/huggingface/datasets/pull/1925 | 813,600,902 | MDExOlB1bGxSZXF1ZXN0NTc3NzIyMzc3 | 1,925 | Fix: Wiki_dpr - fix when with_embeddings is False or index_name is "no_index" | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq"

} | [] | closed | false | null | [] | null | [