Update README.md

README.md CHANGED

@@ -23,7 +23,7 @@ pipeline_tag: text-generation

**Update:** Lamarck has, for the moment, taken the #1 average score for 14 billion parameter models. Counting all the way up to 32 billion parameters, it's #7. This validates the complex merge techniques which captured the complementary strengths of other work in this community. Humor me, I'm giving our guy his meme shades!

-Lamarck 14B v0.6: A generalist merge focused on multi-step reasoning, prose, multi-language ability

+

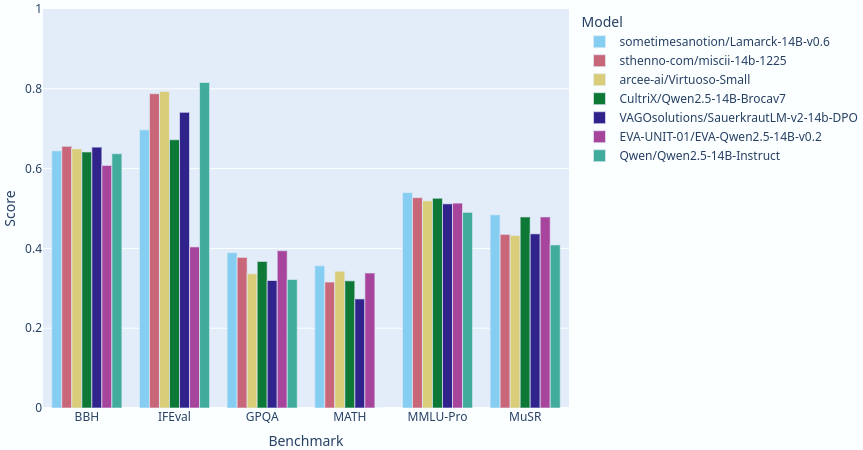

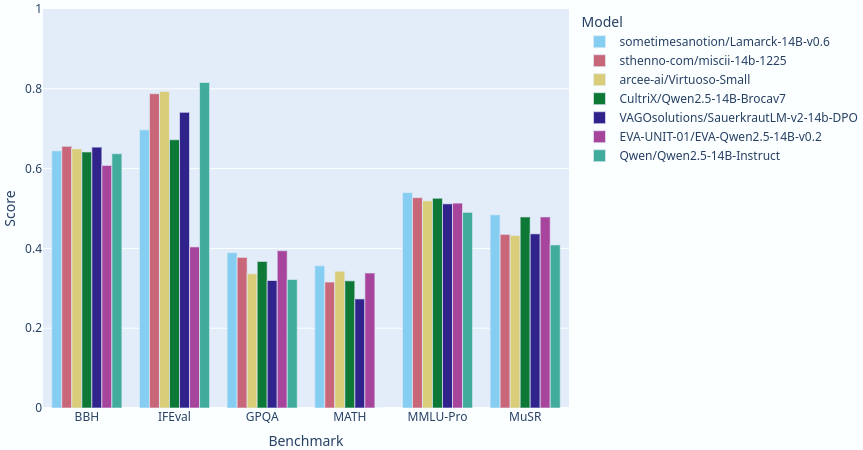

Lamarck 14B v0.6: A generalist merge focused on multi-step reasoning, prose, and multi-language ability. It is based on components that have punched above their weight in the 14 billion parameter class. Here you can see a comparison between Lamarck and other top-performing merges and finetunes:
