# k8sgpt example

This example shows how to use LocalAI with k8sgpt.

## Create the cluster locally with Kind (optional)

If you want to test this locally without a remote Kubernetes cluster, you can use kind.

Install [kind](https://kind.sigs.k8s.io/) and create a cluster:

```
kind create cluster
```

## Setup LocalAI

We will use [helm](https://helm.sh/docs/intro/install/):

```
helm repo add go-skynet https://go-skynet.github.io/helm-charts/
helm repo update

# Clone LocalAI
git clone https://github.com/go-skynet/LocalAI
cd LocalAI/examples/k8sgpt

# Modify preload_models in values.yaml to list the models you want to install.
# CHANGE the URL to point to a model on Hugging Face.
helm install local-ai go-skynet/local-ai --create-namespace --namespace local-ai --values values.yaml
```

## Setup K8sGPT

```
# Install k8sgpt
helm repo add k8sgpt https://charts.k8sgpt.ai/
helm repo update
helm install release k8sgpt/k8sgpt-operator -n k8sgpt-operator-system --create-namespace --version 0.0.17
```

Apply the k8sgpt-operator configuration:

```
kubectl apply -f - << EOF
apiVersion: core.k8sgpt.ai/v1alpha1
kind: K8sGPT
metadata:
  name: k8sgpt-local-ai
  namespace: default
spec:
  backend: localai
  baseUrl: http://local-ai.local-ai.svc.cluster.local:8080/v1
  noCache: false
  model: gpt-3.5-turbo
  version: v0.3.0
  enableAI: true
EOF
```
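The `baseUrl` in this configuration follows the usual Kubernetes in-cluster DNS pattern, `<service>.<namespace>.svc.cluster.local`. If you install LocalAI under a different release name or namespace, the URL has to change to match. The sketch below shows how the pieces fit together; `service_url` is a hypothetical helper, not part of k8sgpt or LocalAI, and it assumes the default cluster domain `cluster.local` and a Service named after the Helm release.

```shell
# Hypothetical helper: build the in-cluster URL of a Kubernetes Service.
# Arguments: service name, namespace, port, optional path (defaults to /v1).
# Assumes the default cluster domain "cluster.local".
service_url() {
  svc="$1"
  ns="$2"
  port="$3"
  path="${4:-/v1}"
  printf 'http://%s.%s.svc.cluster.local:%s%s\n' "$svc" "$ns" "$port" "$path"
}

# Release "local-ai" in namespace "local-ai", as installed above.
service_url local-ai local-ai 8080
```

For the install shown earlier this prints the same value used for `baseUrl`.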

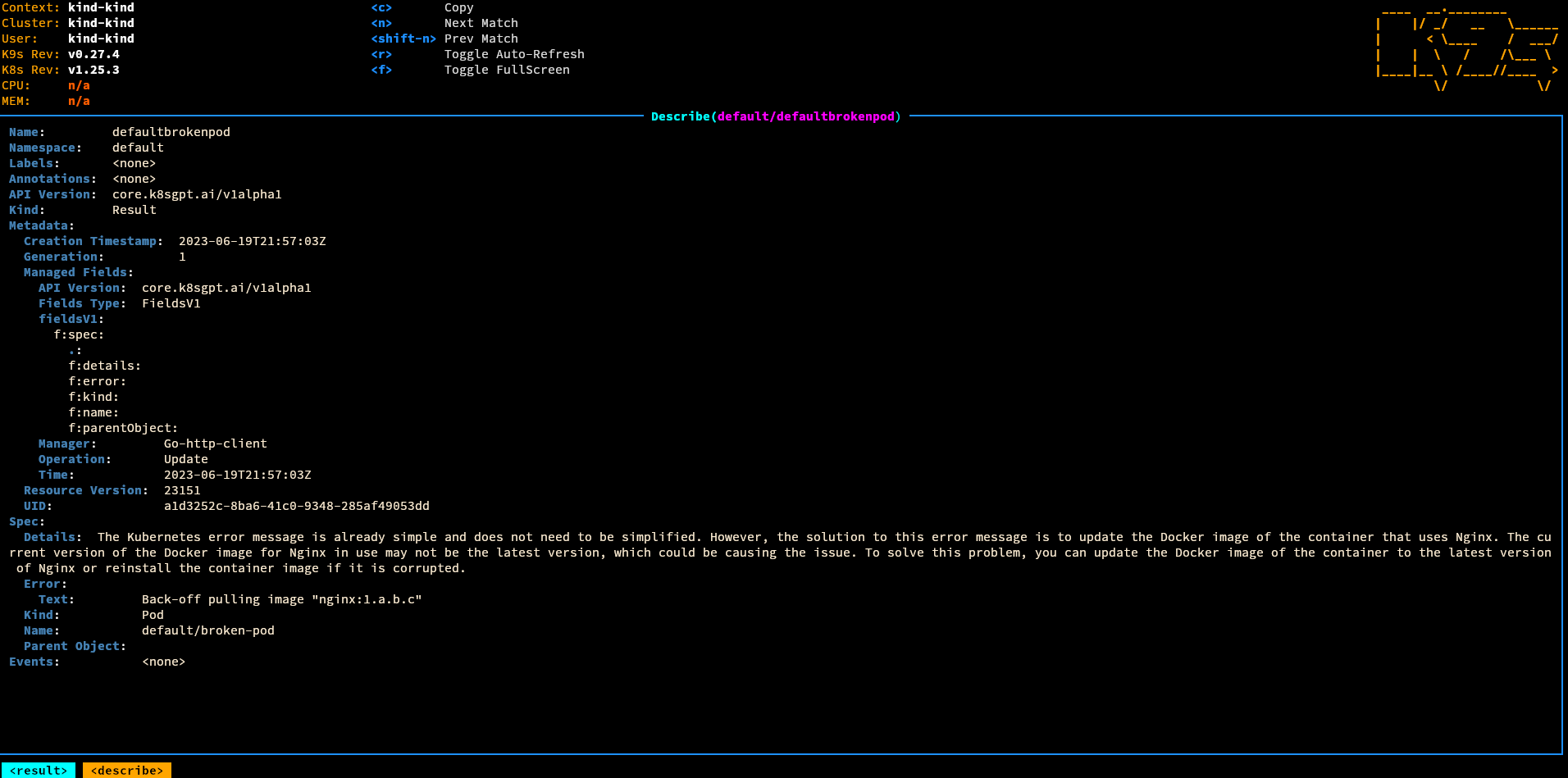

## Test

Apply a broken pod:

```
kubectl apply -f broken-pod.yaml
```
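Once the broken pod exists, the operator should report its analysis after a few minutes. A minimal sketch for inspecting it, assuming the operator stores findings as `Result` custom resources in the `core.k8sgpt.ai` API group (the same group as the `K8sGPT` resource); `show_k8sgpt_results` is a hypothetical wrapper name:

```shell
# Hypothetical wrapper: list the Result resources written by the k8sgpt operator.
# With a namespace argument, look only there; otherwise search all namespaces.
show_k8sgpt_results() {
  if [ -n "${1:-}" ]; then
    kubectl get results.core.k8sgpt.ai --namespace "$1" -o json
  else
    kubectl get results.core.k8sgpt.ai --all-namespaces -o json
  fi
}

# Usage (requires a running cluster and kubectl):
#   show_k8sgpt_results default
```

If nothing is returned, check that the LocalAI pod is running and that the `model` name in the K8sGPT resource matches a model LocalAI actually serves.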

## ArgoCD Deployment Example

[Deploy K8sGPT + LocalAI with ArgoCD](https://github.com/tyler-harpool/gitops/tree/main/infra/k8gpt)
