Commit e1ebf71

Parent(s): 2ea7ac8

Upload folder using huggingface_hub

This view is limited to 50 files because it contains too many changes.


- .gitattributes +49 -0

- LICENSE +218 -0

- README.md +147 -7

- __pycache__/drag_pipeline.cpython-38.pyc +0 -0

- drag_pipeline.py +493 -0

- drag_ui.py +335 -0

- environment.yaml +48 -0

- local_pretrained_models/dummy.txt +1 -0

- lora/lora_ckpt/dummy.txt +1 -0

- lora/samples/cat_dog/andrew-s-ouo1hbizWwo-unsplash.jpg +0 -0

- lora/samples/oilpaint1/catherine-kay-greenup-6rhUen8Wrao-unsplash.jpg +0 -0

- lora/samples/oilpaint2/birmingham-museums-trust-wKlHsooRVbg-unsplash.jpg +0 -0

- lora/samples/prompts.txt +6 -0

- lora/samples/sculpture/evan-lee-EdAVNRvUVH4-unsplash.jpg +0 -0

- lora/train_dreambooth_lora.py +1324 -0

- lora/train_lora.sh +21 -0

- lora_tmp/pytorch_lora_weights.bin +3 -0

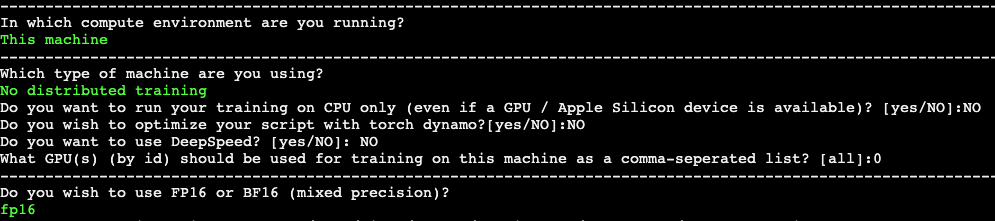

- release-doc/asset/accelerate_config.jpg +0 -0

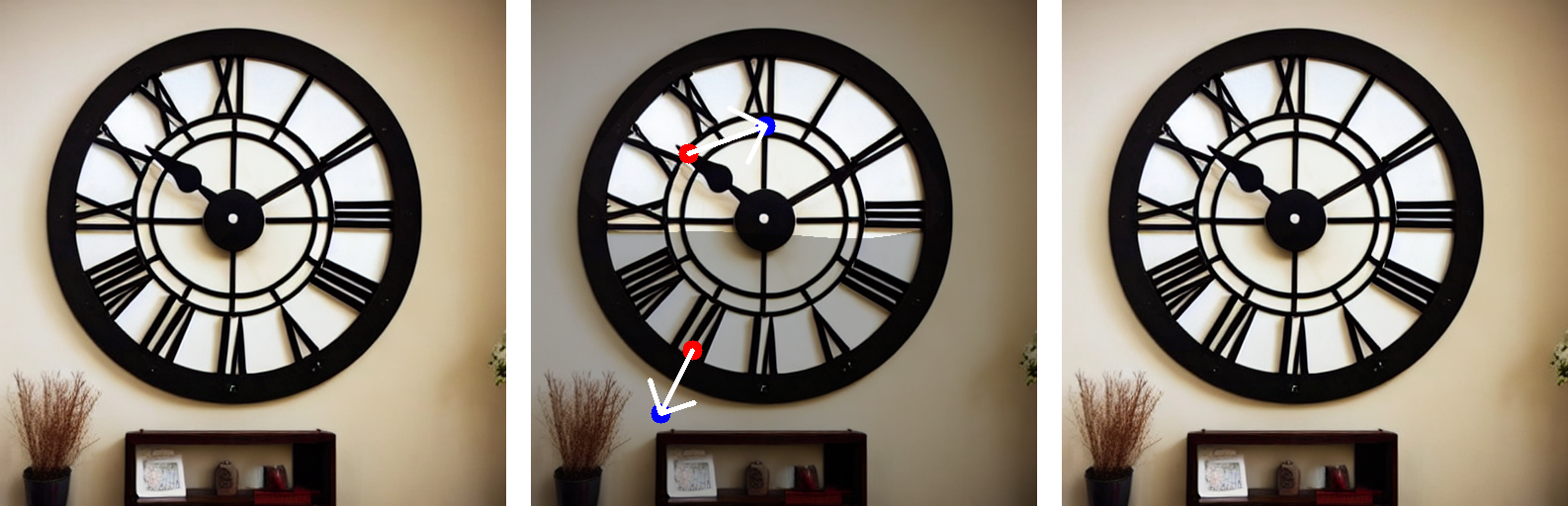

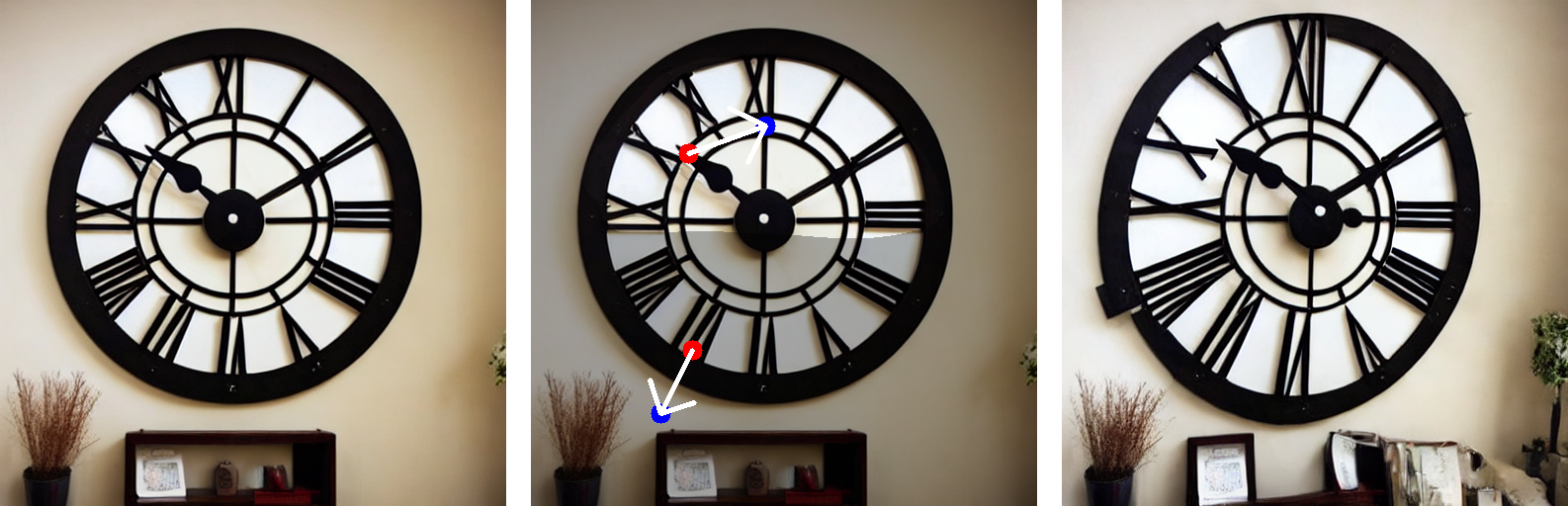

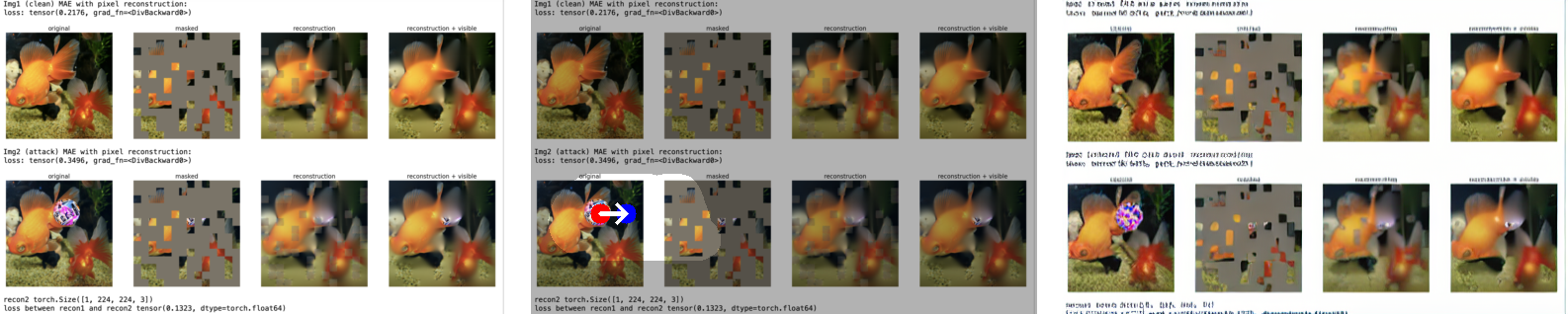

- release-doc/asset/github_video.gif +3 -0

- release-doc/licenses/LICENSE-lora.txt +201 -0

- results/2023-12-01-2318-20.png +0 -0

- results/2023-12-01-2319-14.png +0 -0

- results/2023-12-01-2320-47.png +0 -0

- results/2023-12-01-2321-38.png +0 -0

- results/2023-12-01-2322-25.png +0 -0

- results/2023-12-01-2324-23.png +0 -0

- results/2023-12-01-2326-06.png +0 -0

- results/2023-12-01-2328-23.png +0 -0

- results/2023-12-01-2329-06.png +0 -0

- results/2023-12-01-2330-14.png +0 -0

- results/2023-12-01-2331-09.png +0 -0

- results/2023-12-01-2331-41.png +0 -0

- results/2023-12-01-2332-17.png +0 -0

- results/2023-12-01-2336-40.png +0 -0

- results/2023-12-01-2338-51.png +3 -0

- results/2023-12-01-2340-40.png +3 -0

- results/2023-12-01-2342-40.png +0 -0

- results/2023-12-01-2349-09.png +3 -0

- results/2023-12-01-2350-12.png +3 -0

- results/2023-12-01-2353-51.png +3 -0

- results/2023-12-01-2355-54.png +3 -0

- results/2023-12-01-2357-39.png +3 -0

- results/2023-12-02-0000-23.png +3 -0

- results/2023-12-02-0002-02.png +3 -0

- results/2023-12-02-0004-46.png +0 -0

- results/2023-12-05-1935-28.png +3 -0

- results/2023-12-05-1936-51.png +3 -0

- results/2023-12-05-1937-52.png +3 -0

- results/2023-12-05-1939-28.png +3 -0

- results/2023-12-05-1944-37.png +0 -0

.gitattributes

CHANGED

@@ -33,3 +33,52 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+release-doc/asset/github_video.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-01-2338-51.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-01-2340-40.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-01-2349-09.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-01-2350-12.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-01-2353-51.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-01-2355-54.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-01-2357-39.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-02-0000-23.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-02-0002-02.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-1935-28.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-1936-51.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-1937-52.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-1939-28.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-1951-55.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2007-38.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2020-44.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2024-00.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2024-01.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2026-48.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2026-50.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2037-28.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2042-05.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2047-11.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2047-13.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-05-2050-26.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0124-52.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0136-07.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0143-46.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0146-41.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0146-45.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0149-29.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0152-29.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0153-19.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0154-20.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0155-38.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0156-15.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0156-34.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0157-09.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0157-52.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0159-25.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0200-31.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0200-33.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0202-12.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0202-13.png filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0215-08.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0217-26.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0219-21.gif filter=lfs diff=lfs merge=lfs -text
+results/2023-12-08-0223-15.gif filter=lfs diff=lfs merge=lfs -text

LICENSE

ADDED

@@ -0,0 +1,218 @@

Apache License
Version 2.0, January 2004
http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,
and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by
the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all
other entities that control, are controlled by, or are under common
control with that entity. For the purposes of this definition,
"control" means (i) the power, direct or indirect, to cause the
direction or management of such entity, whether by contract or
otherwise, or (ii) ownership of fifty percent (50%) or more of the
outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity
exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,
including but not limited to software source code, documentation
source, and configuration files.

"Object" form shall mean any form resulting from mechanical
transformation or translation of a Source form, including but
not limited to compiled object code, generated documentation,
and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or
Object form, made available under the License, as indicated by a
copyright notice that is included in or attached to the work
(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object
form, that is based on (or derived from) the Work and for which the
editorial revisions, annotations, elaborations, or other modifications
represent, as a whole, an original work of authorship. For the purposes
of this License, Derivative Works shall not include works that remain
separable from, or merely link (or bind by name) to the interfaces of,
the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including
the original version of the Work and any modifications or additions
to that Work or Derivative Works thereof, that is intentionally
submitted to Licensor for inclusion in the Work by the copyright owner
or by an individual or Legal Entity authorized to submit on behalf of
the copyright owner. For the purposes of this definition, "submitted"
means any form of electronic, verbal, or written communication sent
to the Licensor or its representatives, including but not limited to
communication on electronic mailing lists, source code control systems,
and issue tracking systems that are managed by, or on behalf of, the
Licensor for the purpose of discussing and improving the Work, but
excluding communication that is conspicuously marked or otherwise
designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity
on behalf of whom a Contribution has been received by Licensor and
subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of
this License, each Contributor hereby grants to You a perpetual,
worldwide, non-exclusive, no-charge, royalty-free, irrevocable
copyright license to reproduce, prepare Derivative Works of,
publicly display, publicly perform, sublicense, and distribute the
Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of
this License, each Contributor hereby grants to You a perpetual,
worldwide, non-exclusive, no-charge, royalty-free, irrevocable
(except as stated in this section) patent license to make, have made,
use, offer to sell, sell, import, and otherwise transfer the Work,
where such license applies only to those patent claims licensable
by such Contributor that are necessarily infringed by their
Contribution(s) alone or by combination of their Contribution(s)
with the Work to which such Contribution(s) was submitted. If You
institute patent litigation against any entity (including a
cross-claim or counterclaim in a lawsuit) alleging that the Work
or a Contribution incorporated within the Work constitutes direct
or contributory patent infringement, then any patent licenses
granted to You under this License for that Work shall terminate
as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the
Work or Derivative Works thereof in any medium, with or without
modifications, and in Source or Object form, provided that You
meet the following conditions:

(a) You must give any other recipients of the Work or
Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices
stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works
that You distribute, all copyright, patent, trademark, and
attribution notices from the Source form of the Work,
excluding those notices that do not pertain to any part of
the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its
distribution, then any Derivative Works that You distribute must
include a readable copy of the attribution notices contained
within such NOTICE file, excluding those notices that do not
pertain to any part of the Derivative Works, in at least one
of the following places: within a NOTICE text file distributed
as part of the Derivative Works; within the Source form or
documentation, if provided along with the Derivative Works; or,
within a display generated by the Derivative Works, if and
wherever such third-party notices normally appear. The contents
of the NOTICE file are for informational purposes only and
do not modify the License. You may add Your own attribution
notices within Derivative Works that You distribute, alongside
or as an addendum to the NOTICE text from the Work, provided
that such additional attribution notices cannot be construed
as modifying the License.

You may add Your own copyright statement to Your modifications and
may provide additional or different license terms and conditions
for use, reproduction, or distribution of Your modifications, or
for any such Derivative Works as a whole, provided Your use,
reproduction, and distribution of the Work otherwise complies with
the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,
any Contribution intentionally submitted for inclusion in the Work
by You to the Licensor shall be under the terms and conditions of
this License, without any additional terms or conditions.
Notwithstanding the above, nothing herein shall supersede or modify
the terms of any separate license agreement you may have executed
with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade
names, trademarks, service marks, or product names of the Licensor,
except as required for reasonable and customary use in describing the
origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or
agreed to in writing, Licensor provides the Work (and each
Contributor provides its Contributions) on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
implied, including, without limitation, any warranties or conditions
of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
PARTICULAR PURPOSE. You are solely responsible for determining the
appropriateness of using or redistributing the Work and assume any
risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,
whether in tort (including negligence), contract, or otherwise,
unless required by applicable law (such as deliberate and grossly
negligent acts) or agreed to in writing, shall any Contributor be
liable to You for damages, including any direct, indirect, special,
incidental, or consequential damages of any character arising as a
result of this License or out of the use or inability to use the
Work (including but not limited to damages for loss of goodwill,
work stoppage, computer failure or malfunction, or any and all
other commercial damages or losses), even if such Contributor
has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing
the Work or Derivative Works thereof, You may choose to offer,
and charge a fee for, acceptance of support, warranty, indemnity,
or other liability obligations and/or rights consistent with this
License. However, in accepting such obligations, You may act only
on Your own behalf and on Your sole responsibility, not on behalf
of any other Contributor, and only if You agree to indemnify,
defend, and hold each Contributor harmless for any liability
incurred by, or claims asserted against, such Contributor by reason
of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following
boilerplate notice, with the fields enclosed by brackets "{}"
replaced with your own identifying information. (Don't include
the brackets!) The text should be enclosed in the appropriate
comment syntax for the file format. We also recommend that a
file or class name and description of purpose be included on the
same "printed page" as the copyright notice for easier
identification within third-party archives.

Copyright {yyyy} {name of copyright owner}

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.

=======================================================================
Apache DragDiffusion Subcomponents:

The Apache DragDiffusion project contains subcomponents with separate copyright
notices and license terms. Your use of the source code for the these
subcomponents is subject to the terms and conditions of the following
licenses.

========================================================================
Apache 2.0 licenses
========================================================================

The following components are provided under the Apache License. See project link for details.
The text of each license is the standard Apache 2.0 license.

files from lora: https://github.com/huggingface/diffusers/blob/v0.17.1/examples/dreambooth/train_dreambooth_lora.py apache 2.0

README.md

CHANGED

@@ -1,12 +1,152 @@
 ---
 title: DragDiffusion
-
-colorFrom: indigo
-colorTo: purple
+app_file: drag_ui.py
 sdk: gradio
-sdk_version:
-app_file: app.py
-pinned: false
+sdk_version: 3.41.1
 ---
-Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

<p align="center">
<h1 align="center">DragDiffusion: Harnessing Diffusion Models for Interactive Point-based Image Editing</h1>
<p align="center">
<a href="https://yujun-shi.github.io/"><strong>Yujun Shi</strong></a>
<strong>Chuhui Xue</strong>
<strong>Jiachun Pan</strong>
<strong>Wenqing Zhang</strong>
<a href="https://vyftan.github.io/"><strong>Vincent Y. F. Tan</strong></a>
<a href="https://songbai.site/"><strong>Song Bai</strong></a>
</p>
<div align="center">
<img src="./release-doc/asset/github_video.gif" width="700">
</div>
<br>
<p align="center">
<a href="https://arxiv.org/abs/2306.14435"><img alt='arXiv' src="https://img.shields.io/badge/arXiv-2306.14435-b31b1b.svg"></a>
<a href="https://yujun-shi.github.io/projects/dragdiffusion.html"><img alt='page' src="https://img.shields.io/badge/Project-Website-orange"></a>
<a href="https://twitter.com/YujunPeiyangShi"><img alt='Twitter' src="https://img.shields.io/twitter/follow/YujunPeiyangShi?label=%40YujunPeiyangShi"></a>
</p>
<br>
</p>

## Disclaimer
This is a research project, NOT a commercial product.

## News and Update
* [Sept 3rd] v0.1.0 Release.
  * Enable **Dragging Diffusion-Generated Images.**
  * Introduce a new guidance mechanism that **greatly improves the quality of dragging results.** (Inspired by [MasaCtrl](https://ljzycmd.github.io/projects/MasaCtrl/))
  * Enable dragging images with arbitrary aspect ratios.
  * Add support for DPM++Solver (Generated Images).
* [July 18th] v0.0.1 Release.
  * Integrate LoRA training into the User Interface. **No need to use the training script; everything can be conveniently done in the UI!**
  * Optimize the User Interface layout.
  * Enable using a better VAE for eyes and faces (see [this](https://stable-diffusion-art.com/how-to-use-vae/)).
* [July 8th] v0.0.0 Release.
  * Implement the basic functionality of DragDiffusion.

## Installation

It is recommended to run our code on an NVIDIA GPU with a Linux system. We have not yet tested other configurations. Currently, our method requires around 14 GB of GPU memory to run. We will continue to optimize memory efficiency.

To install the required libraries, simply run the following command:
```
conda env create -f environment.yaml
conda activate dragdiff
```
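
Before launching, it can help to confirm that a GPU with enough memory is visible. The snippet below is a minimal sketch rather than part of this repository: it shells out to `nvidia-smi` and compares each GPU's total memory against the "around 14 GB" figure quoted above. The helper names and the choice to treat 14 GB as 14 GiB are assumptions made for illustration.

```python
import subprocess
from typing import List

# "Around 14 GB" from the README, interpreted here as 14 GiB (an assumption).
REQUIRED_MIB = 14 * 1024


def parse_memory_mib(smi_output: str) -> List[int]:
    """Parse one integer (MiB) per non-empty line of nvidia-smi output."""
    return [int(line.strip()) for line in smi_output.splitlines() if line.strip()]


def gpus_with_enough_memory(smi_output: str, required_mib: int = REQUIRED_MIB) -> List[int]:
    """Return the indices of GPUs whose total memory meets the requirement."""
    return [i for i, mib in enumerate(parse_memory_mib(smi_output)) if mib >= required_mib]


def query_nvidia_smi() -> str:
    """Ask nvidia-smi for the total memory of each GPU, one value per line."""
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


if __name__ == "__main__":
    try:
        suitable = gpus_with_enough_memory(query_nvidia_smi())
        print("GPUs with enough memory:", suitable if suitable else "none")
    except (OSError, ValueError, subprocess.CalledProcessError):
        print("Could not query nvidia-smi; GPU memory was not checked.")
```

The parsing is kept separate from the `nvidia-smi` call so the threshold logic can be exercised on machines without a GPU.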

| 60 |

+

## Run DragDiffusion

|

| 61 |

+

To start with, in command line, run the following to start the gradio user interface:

|

| 62 |

+

```

|

| 63 |

+

python3 drag_ui_real.py

|

| 64 |

+

```

|

| 65 |

+

|

| 66 |

+

You may check our [GIF above](https://github.com/Yujun-Shi/DragDiffusion/blob/main/release-doc/asset/github_video.gif) that demonstrate the usage of UI in a step-by-step manner.

|

| 67 |

+

|

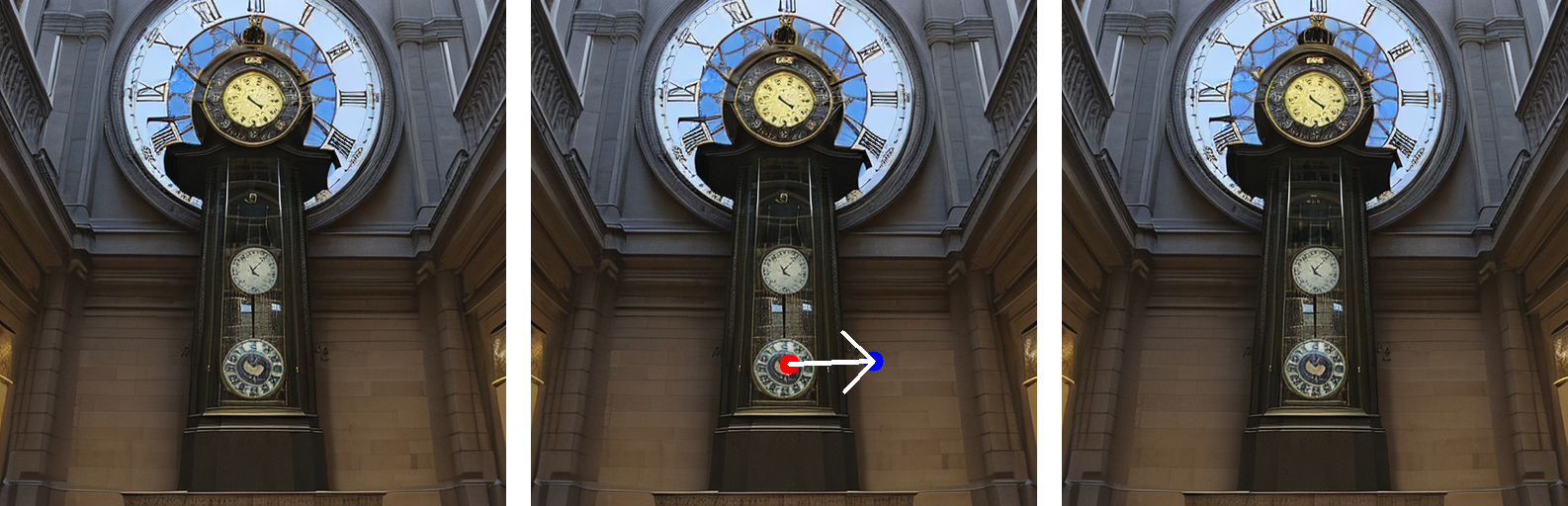

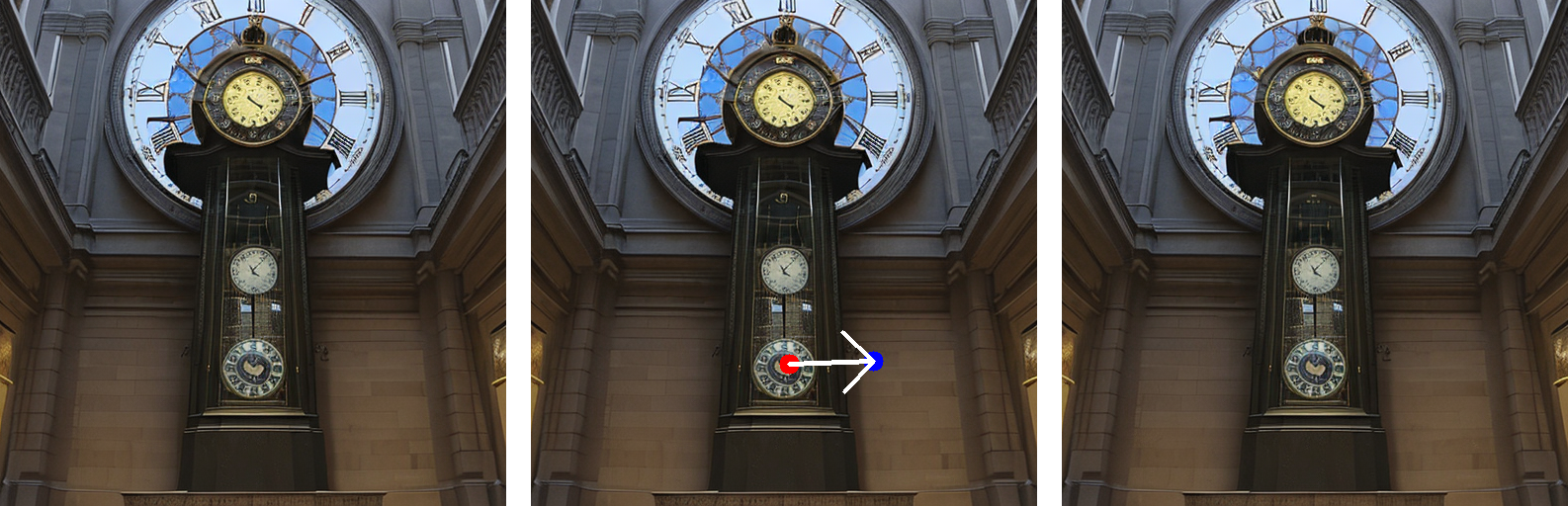

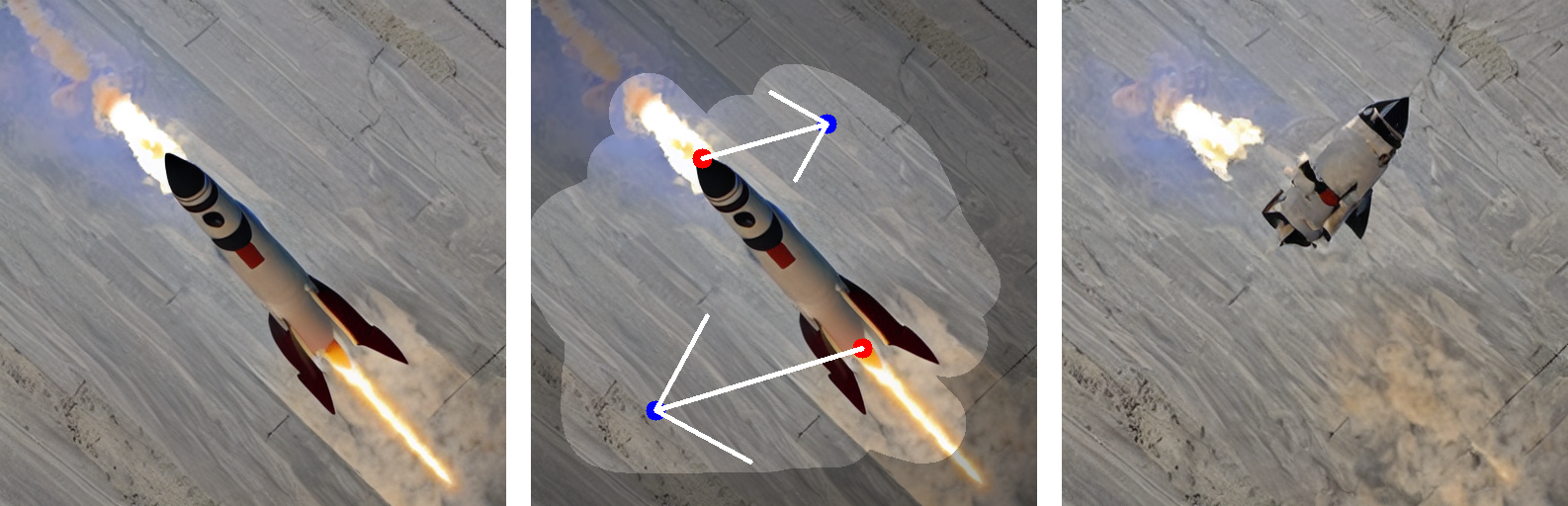

Basically, it consists of the following steps:

#### Step 1: train a LoRA
1) Drop your input image into the left-most box.
2) Input a prompt describing the image in the "prompt" field.
3) Click the "Train LoRA" button to train a LoRA given the input image.

#### Step 2: do "drag" editing
1) Draw a mask in the left-most box to specify the editable areas.
2) Click handle and target points in the middle box. You may also reset all points by clicking "Undo point".
3) Click the "Run" button to run our algorithm. Edited results will be displayed in the right-most box.
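
To make the handle/target selection in Step 2 concrete, here is a minimal sketch of how a list of clicks could be grouped into pairs. It assumes clicks alternate between a handle point and its target point, which this README does not state explicitly; `pair_points` and `undo_points` are hypothetical helpers, not functions from `drag_ui.py`.

```python
from typing import List, Tuple

Point = Tuple[int, int]  # (x, y) pixel coordinates of a click


def pair_points(clicks: List[Point]) -> List[Tuple[Point, Point]]:
    """Group clicks into (handle, target) pairs.

    Assumes clicks alternate handle, target, handle, target, ...
    A trailing handle without a target is ignored.
    """
    return [(clicks[i], clicks[i + 1]) for i in range(0, len(clicks) - 1, 2)]


def undo_points(clicks: List[Point]) -> List[Point]:
    """Mimic the "Undo point" button: discard every selected point."""
    return []


clicks = [(10, 10), (20, 10), (5, 5)]
pairs = pair_points(clicks)  # the third click has no target yet, so it is skipped
```

Each resulting pair says "move the content at the handle toward the target", which is the information the "Run" step needs alongside the mask.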

## Explanation for parameters in the user interface:
#### General Parameters
|Parameter|Explanation|
|-----|------|
|prompt|The prompt describing the user input image (This will be used to train the LoRA and conduct "drag" editing).|
|lora_path|The directory where the trained LoRA will be saved.|


| 89 |

+

#### Algorithm Parameters

|

| 90 |

+

These parameters are collapsed by default as we normally do not have to tune them. Here are the explanations:

|

| 91 |

+

* Base Model Config

|

| 92 |

+

|

| 93 |

+

|Parameter|Explanation|

|

| 94 |

+

|-----|------|

|

| 95 |

+

|Diffusion Model Path|The path to the diffusion models. By default we are using "runwayml/stable-diffusion-v1-5". We will add support for more models in the future.|

|

| 96 |

+

|VAE Choice|The Choice of VAE. Now there are two choices, one is "default", which will use the original VAE. Another choice is "stabilityai/sd-vae-ft-mse", which can improve results on images with human eyes and faces (see [explanation](https://stable-diffusion-art.com/how-to-use-vae/))|

|

| 97 |

+

|

| 98 |

+

* Drag Parameters

|

| 99 |

+

|

| 100 |

+

|Parameter|Explanation|

|

| 101 |

+

|-----|------|

|

| 102 |

+

|n_pix_step|Maximum number of steps of motion supervision. **Increase this if handle points have not been "dragged" to desired position.**|

|

| 103 |

+

|lam|The regularization coefficient controlling unmasked region stays unchanged. Increase this value if the unmasked region has changed more than what was desired (do not have to tune in most cases).|

|

| 104 |

+

|n_actual_inference_step|Number of DDIM inversion steps performed (do not have to tune in most cases).|

|

| 105 |

+

|

| 106 |

+

* LoRA Parameters

|

| 107 |

+

|

| 108 |

+

|Parameter|Explanation|

|

| 109 |

+

|-----|------|

|

| 110 |

+

|LoRA training steps|Number of LoRA training steps (do not have to tune in most cases).|

|

| 111 |

+

|LoRA learning rate|Learning rate of LoRA (do not have to tune in most cases)|

|

| 112 |

+

|LoRA rank|Rank of the LoRA (do not have to tune in most cases).|

## License
Code related to the DragDiffusion algorithm is under the Apache 2.0 license.

## BibTeX
```bibtex
@article{shi2023dragdiffusion,
    title={DragDiffusion: Harnessing Diffusion Models for Interactive Point-based Image Editing},
    author={Shi, Yujun and Xue, Chuhui and Pan, Jiachun and Zhang, Wenqing and Tan, Vincent YF and Bai, Song},
    journal={arXiv preprint arXiv:2306.14435},
    year={2023}
}
```

## TODO
- [x] Upload trained LoRAs of our examples
- [x] Integrate the LoRA training function into the user interface.
- [ ] Support using more diffusion models
- [ ] Support using LoRA downloaded online

## Contact
For any questions on this project, please contact [Yujun](https://yujun-shi.github.io/) ([email protected])

## Acknowledgement
This work is inspired by the amazing [DragGAN](https://vcai.mpi-inf.mpg.de/projects/DragGAN/). The LoRA training code is modified from an [example](https://github.com/huggingface/diffusers/blob/v0.17.1/examples/dreambooth/train_dreambooth_lora.py) of diffusers. Image samples are collected from [unsplash](https://unsplash.com/), [pexels](https://www.pexels.com/zh-cn/), and [pixabay](https://pixabay.com/). Finally, a huge shout-out to all the amazing open source diffusion models and libraries.

## Related Links
* [Drag Your GAN: Interactive Point-based Manipulation on the Generative Image Manifold](https://vcai.mpi-inf.mpg.de/projects/DragGAN/)
* [MasaCtrl: Tuning-free Mutual Self-Attention Control for Consistent Image Synthesis and Editing](https://ljzycmd.github.io/projects/MasaCtrl/)
* [Emergent Correspondence from Image Diffusion](https://diffusionfeatures.github.io/)
* [DragonDiffusion: Enabling Drag-style Manipulation on Diffusion Models](https://mc-e.github.io/project/DragonDiffusion/)
* [FreeDrag: Point Tracking is Not You Need for Interactive Point-based Image Editing](https://lin-chen.site/projects/freedrag/)

## Common Issues and Solutions
1) For users who have trouble loading models from Hugging Face due to internet constraints: 1) follow this [link](https://zhuanlan.zhihu.com/p/475260268) and download the model into the directory "local\_pretrained\_models"; 2) run "drag\_ui\_real.py" and select the directory of your pretrained model in "Algorithm Parameters -> Base Model Config -> Diffusion Model Path".
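
The local-model workaround in item 1 can be pictured with a small helper that lists model folders under "local_pretrained_models" and falls back to the default hub ID when none are present. This is only a sketch: the function names are hypothetical, and checking for a `model_index.json` file is an assumption about how a downloaded diffusers pipeline folder is laid out, not something the UI script is known to do.

```python
from pathlib import Path
from typing import List

# Default named in the Base Model Config table.
DEFAULT_MODEL_ID = "runwayml/stable-diffusion-v1-5"


def find_local_models(root: str = "local_pretrained_models") -> List[str]:
    """Return sub-directories of `root` that look like downloaded pipelines."""
    root_path = Path(root)
    if not root_path.is_dir():
        return []
    return sorted(
        str(child)
        for child in root_path.iterdir()
        # Assumption: a pipeline folder carries a model_index.json file.
        if child.is_dir() and (child / "model_index.json").is_file()
    )


def pick_model_path(root: str = "local_pretrained_models") -> str:
    """Prefer the first local model folder, else fall back to the hub ID."""
    local_models = find_local_models(root)
    return local_models[0] if local_models else DEFAULT_MODEL_ID
```

The returned string could then be entered as the "Diffusion Model Path", so that no download is attempted when a local copy exists.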

__pycache__/drag_pipeline.cpython-38.pyc
ADDED
Binary file (10 kB).

drag_pipeline.py
ADDED

@@ -0,0 +1,493 @@

# *************************************************************************
# Copyright (2023) Bytedance Inc.
#
# Copyright (2023) DragDiffusion Authors
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# *************************************************************************

import torch
import numpy as np

import torch.nn.functional as F
from tqdm import tqdm
from PIL import Image
from typing import Any, Dict, List, Optional, Tuple, Union

from diffusers import StableDiffusionPipeline
from diffusers.utils import logging

# module-level logger; the overridden forward calls logger.info
logger = logging.get_logger(__name__)

# override unet forward
# The only difference from diffusers:
# return intermediate UNet features of all UpSample blocks
def override_forward(self):

    def forward(
        sample: torch.FloatTensor,
        timestep: Union[torch.Tensor, float, int],
        encoder_hidden_states: torch.Tensor,
        class_labels: Optional[torch.Tensor] = None,
        timestep_cond: Optional[torch.Tensor] = None,
        attention_mask: Optional[torch.Tensor] = None,
        cross_attention_kwargs: Optional[Dict[str, Any]] = None,
        down_block_additional_residuals: Optional[Tuple[torch.Tensor]] = None,
        mid_block_additional_residual: Optional[torch.Tensor] = None,
        return_intermediates: bool = False,
        last_up_block_idx: int = None,
    ):
        # By default samples have to be AT least a multiple of the overall upsampling factor.
        # The overall upsampling factor is equal to 2 ** (# num of upsampling layers).
        # However, the upsampling interpolation output size can be forced to fit any upsampling size
        # on the fly if necessary.
        default_overall_up_factor = 2**self.num_upsamplers

        # upsample size should be forwarded when sample is not a multiple of `default_overall_up_factor`
        forward_upsample_size = False
        upsample_size = None

        if any(s % default_overall_up_factor != 0 for s in sample.shape[-2:]):
            logger.info("Forward upsample size to force interpolation output size.")
            forward_upsample_size = True

        # prepare attention_mask
        if attention_mask is not None:
            attention_mask = (1 - attention_mask.to(sample.dtype)) * -10000.0
            attention_mask = attention_mask.unsqueeze(1)

        # 0. center input if necessary
        if self.config.center_input_sample:
            sample = 2 * sample - 1.0

        # 1. time
        timesteps = timestep
        if not torch.is_tensor(timesteps):
            # TODO: this requires sync between CPU and GPU. So try to pass timesteps as tensors if you can
            # This would be a good case for the `match` statement (Python 3.10+)
            is_mps = sample.device.type == "mps"
            if isinstance(timestep, float):
                dtype = torch.float32 if is_mps else torch.float64
            else:
                dtype = torch.int32 if is_mps else torch.int64
            timesteps = torch.tensor([timesteps], dtype=dtype, device=sample.device)
        elif len(timesteps.shape) == 0:
            timesteps = timesteps[None].to(sample.device)

        # broadcast to batch dimension in a way that's compatible with ONNX/Core ML
        timesteps = timesteps.expand(sample.shape[0])

        t_emb = self.time_proj(timesteps)

        # `Timesteps` does not contain any weights and will always return f32 tensors
        # but time_embedding might actually be running in fp16. so we need to cast here.
        # there might be better ways to encapsulate this.
        t_emb = t_emb.to(dtype=self.dtype)

        emb = self.time_embedding(t_emb, timestep_cond)

        if self.class_embedding is not None:
            if class_labels is None:
                raise ValueError("class_labels should be provided when num_class_embeds > 0")

            if self.config.class_embed_type == "timestep":
                class_labels = self.time_proj(class_labels)

                # `Timesteps` does not contain any weights and will always return f32 tensors
                # there might be better ways to encapsulate this.
                class_labels = class_labels.to(dtype=sample.dtype)

            class_emb = self.class_embedding(class_labels).to(dtype=self.dtype)

            if self.config.class_embeddings_concat:
                emb = torch.cat([emb, class_emb], dim=-1)
            else:
                emb = emb + class_emb

        if self.config.addition_embed_type == "text":
            aug_emb = self.add_embedding(encoder_hidden_states)
            emb = emb + aug_emb

        if self.time_embed_act is not None:
            emb = self.time_embed_act(emb)

        if self.encoder_hid_proj is not None:
            encoder_hidden_states = self.encoder_hid_proj(encoder_hidden_states)

        # 2. pre-process
        sample = self.conv_in(sample)

        # 3. down
        down_block_res_samples = (sample,)
        for downsample_block in self.down_blocks:
            if hasattr(downsample_block, "has_cross_attention") and downsample_block.has_cross_attention:
                sample, res_samples = downsample_block(
                    hidden_states=sample,
                    temb=emb,
                    encoder_hidden_states=encoder_hidden_states,
                    attention_mask=attention_mask,
                    cross_attention_kwargs=cross_attention_kwargs,
                )
            else:
                sample, res_samples = downsample_block(hidden_states=sample, temb=emb)

            down_block_res_samples += res_samples

        if down_block_additional_residuals is not None:
            new_down_block_res_samples = ()

            for down_block_res_sample, down_block_additional_residual in zip(
                down_block_res_samples, down_block_additional_residuals
            ):
                down_block_res_sample = down_block_res_sample + down_block_additional_residual
                new_down_block_res_samples += (down_block_res_sample,)

            down_block_res_samples = new_down_block_res_samples

        # 4. mid
        if self.mid_block is not None:
            sample = self.mid_block(
                sample,
                emb,
                encoder_hidden_states=encoder_hidden_states,
                attention_mask=attention_mask,
                cross_attention_kwargs=cross_attention_kwargs,
            )

        if mid_block_additional_residual is not None:
            sample = sample + mid_block_additional_residual

        # 5. up
        # only difference from diffusers:
        # save the intermediate features of unet upsample blocks
        # the 0-th element is the mid-block output
        all_intermediate_features = [sample]
        for i, upsample_block in enumerate(self.up_blocks):
            is_final_block = i == len(self.up_blocks) - 1

            res_samples = down_block_res_samples[-len(upsample_block.resnets) :]
            down_block_res_samples = down_block_res_samples[: -len(upsample_block.resnets)]

            # if we have not reached the final block and need to forward the
            # upsample size, we do it here
            if not is_final_block and forward_upsample_size:
                upsample_size = down_block_res_samples[-1].shape[2:]

            if hasattr(upsample_block, "has_cross_attention") and upsample_block.has_cross_attention:
                sample = upsample_block(
                    hidden_states=sample,
                    temb=emb,
                    res_hidden_states_tuple=res_samples,
                    encoder_hidden_states=encoder_hidden_states,
                    cross_attention_kwargs=cross_attention_kwargs,
                    upsample_size=upsample_size,
                    attention_mask=attention_mask,
                )
            else:
                sample = upsample_block(
                    hidden_states=sample, temb=emb, res_hidden_states_tuple=res_samples, upsample_size=upsample_size
                )
            all_intermediate_features.append(sample)
            # return early to save computation time if needed
            if last_up_block_idx is not None and i == last_up_block_idx:
                return all_intermediate_features

        # 6. post-process
        if self.conv_norm_out: