Model Card for llm.c GPT3_125M

Instruction Pretraining: Fineweb-edu 10B interleaved with OpenHermes 2.5

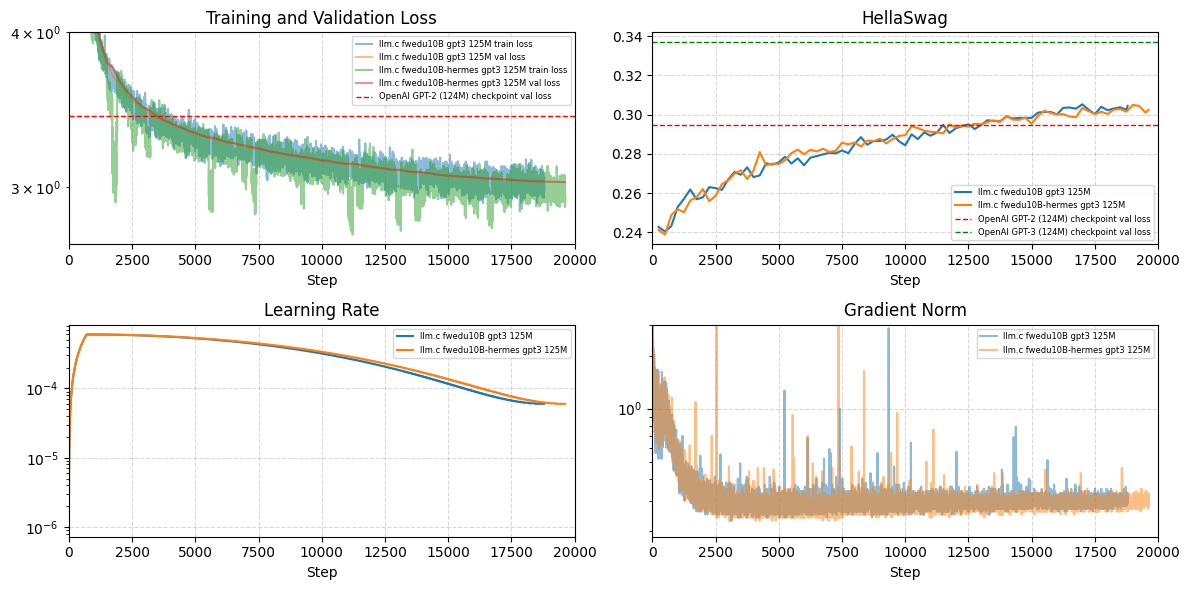

Comparison of training on Fineweb-Edu 10B only vs. Fineweb-Edu 10B interleaved with OpenHermes 2.5

Model Details

How to Get Started with the Model

Use the code below to get started with the model.

```python
from transformers import pipeline

p = pipeline("text-generation", model="jrahn/gpt3_125M_edu_hermes")

# instruction following (ChatML prompt); max_length counts prompt + generated tokens
p("<|im_start|>user\nTeach me to fish.<|im_end|>\n<|im_start|>assistant\n", max_length=128)
# [{'generated_text': '<|im_start|>user\nTeach me to fish.<|im_end|>\n<|im_start|>assistant\nTeach me to fish.\n\nTeach me to fish.\n\nTeach me to fish.\n\nTeach me to fish.\n\nTeach me to fish.\n\nTeach me to fish.\n\nTeach me to fish.\n\nTeach me to fish.\n\nTeach me to fish.\n\nTeach me to fish.\n\nTeach me to fish.\n\n'}]

# text completion
p("In a shocking finding, scientist discovered a herd of unicorns living in a remote, previously unexplored valley, in the Andes Mountains. Even more surprising to the researchers was the fact that the unicorns spoke perfect English. ", max_length=128)
# [{'generated_text': 'In a shocking finding, scientist discovered a herd of unicorns living in a remote, previously unexplored valley, in the Andes Mountains. Even more surprising to the researchers was the fact that the unicorns spoke perfect English. \nThe researchers were able to identify the unicorns by their unique language. The researchers found that the unicorns spoke a language that is similar to the language of the Andes Mountains.\nThe researchers also found that the unicorns spoke a language that is similar to the language of the Andes Mountains. This is the first time that the researchers have been able to identify the language of the Andes Mountains.'}]
```

Training Details

Training Data

Datasets used: Fineweb-Edu 10B + OpenHermes 2.5

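Dataset proportions (document counts; FWE = Fineweb-Edu 10B, OH = OpenHermes 2.5; the percentage is the OH share of each part):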

- Part 1: FWE 4,836,050 + OH 100,000 (2.03%) = 4,936,050

- Part 2: FWE 4,336,051 + OH 400,000 (8.45%) = 4,736,051

- Part 3: FWE 500,000 + OH 501,551 (50.08%) = 1,001,551

Total documents: 10,669,024
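The card does not describe how documents were mixed inside each part. The sketch below assumes the simplest option, a seeded shuffle of the two sources within one part; `build_part`, `oh_share`, and `PARTS` are hypothetical names, not the author's code. It also recomputes the OH share of each part from the counts listed above.

```python
import random


def build_part(fwe_docs, oh_docs, seed=0):
    """Hypothetical helper: merge FWE and OH documents into one shuffled part."""
    docs = list(fwe_docs) + list(oh_docs)
    random.Random(seed).shuffle(docs)  # seeded, so the order is reproducible
    return docs


def oh_share(n_fwe, n_oh):
    """Percentage of OpenHermes documents in a part."""
    return 100.0 * n_oh / (n_fwe + n_oh)


# Document counts per part, as listed in this card: (FWE, OH).
PARTS = {
    "Part 1": (4_836_050, 100_000),
    "Part 2": (4_336_051, 400_000),
    "Part 3": (500_000, 501_551),
}

if __name__ == "__main__":
    for name, (n_fwe, n_oh) in PARTS.items():
        print(f"{name}: {n_fwe + n_oh:,} docs, OH share {oh_share(n_fwe, n_oh):.2f}%")
    # Toy demo of the mixing helper with placeholder strings instead of real documents.
    print(build_part([f"fwe-{i}" for i in range(8)], [f"oh-{i}" for i in range(2)], seed=42))
```

The recomputed shares (2.03%, 8.45%, 50.08%) agree with the percentages in the list. Loading every document into memory is only practical for illustration; a streaming mixer would be needed at the 10B-token scale.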

Training Procedure

Preprocessing

- Fineweb-Edu: no preprocessing; only the "text" feature is used

- OpenHermes 2.5: the ChatML prompt template was applied to the "conversations" feature to create a "text" feature
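A minimal sketch of the ChatML step. It assumes each OpenHermes 2.5 record stores its turns as a list of `{"from": ..., "value": ...}` dicts with `from` in `system`, `human`, or `gpt`, and that each turn is rendered as `<|im_start|>{role}\n{text}<|im_end|>\n`, consistent with the prompt format in the usage example. `ROLE_MAP` and `to_chatml` are hypothetical names.

```python
# Assumed mapping from OpenHermes 2.5 "from" values to ChatML role names.
ROLE_MAP = {"system": "system", "human": "user", "gpt": "assistant"}


def to_chatml(conversations):
    """Render a list of {"from": ..., "value": ...} turns as a single ChatML string."""
    parts = []
    for turn in conversations:
        role = ROLE_MAP[turn["from"]]
        parts.append(f"<|im_start|>{role}\n{turn['value']}<|im_end|>\n")
    return "".join(parts)


example = [
    {"from": "human", "value": "Teach me to fish."},
    {"from": "gpt", "value": "Start with a rod, a hook, and some bait."},
]
print(to_chatml(example))
```

With the Hugging Face `datasets` library, a function like this could be applied per record via `Dataset.map`, e.g. `ds.map(lambda ex: {"text": to_chatml(ex["conversations"])})`; this is an assumed workflow, not the author's preprocessing script.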

Training Hyperparameters

- Training regime:

  - bf16

  - context length 2048 tokens

  - per-device batch size 16, global batch size 524,288 tokens -> gradient accumulation 16

  - ZeRO stage 1

  - learning rate 6e-4, cosine schedule, 700 warmup steps

- For more details, see the run script.
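A sketch of a linear-warmup plus cosine-decay learning-rate schedule using the values listed above (peak 6e-4, 700 warmup steps). This is a generic formulation, not the run script's code. Two inputs are assumptions: `total_steps=19_622` is the reported token count divided by the 524,288-token global batch, and `min_lr_frac=0.0` (decay to zero) is hypothetical because the card does not state a final learning rate.

```python
import math


def lr_at(step, max_lr=6e-4, warmup_steps=700, total_steps=19_622, min_lr_frac=0.0):
    """Learning rate at a 0-indexed optimizer step: linear warmup, then cosine decay."""
    min_lr = max_lr * min_lr_frac  # assumption: final LR fraction not given in the card
    if step < warmup_steps:
        # Linear ramp so that the last warmup step reaches max_lr.
        return max_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(progress, 1.0)  # hold at min_lr past the end of the schedule
    coeff = 0.5 * (1.0 + math.cos(math.pi * progress))  # goes from 1 down to 0
    return min_lr + coeff * (max_lr - min_lr)


for s in (0, 699, 10_161, 19_622):
    print(s, f"{lr_at(s):.3e}")
```

Halfway through the decay phase the rate is half the peak (3e-4); a nonzero `min_lr_frac` would raise the floor without changing the shape.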

Speeds, Sizes, Times

Params: 125M (~250 MB per checkpoint)

Tokens: ~10B (10,287,579,136)

Total training time: ~12 hours

Hardware: 2x RTX4090

MFU: 70% (266,000 tok/s)
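The figures above can be cross-checked with a few lines of arithmetic. The sketch assumes 2 bytes per parameter (bf16 weights) for the checkpoint size and uses the nominal 125M parameter count; the other inputs are the card's own numbers.

```python
TOKENS = 10_287_579_136   # total training tokens (from the card)
GLOBAL_BATCH = 524_288    # tokens per optimizer step (from the card)
PARAMS = 125_000_000      # nominal parameter count
BYTES_PER_PARAM = 2       # assumption: weights stored in bf16
THROUGHPUT = 266_000      # tokens per second across both GPUs (from the card)

steps, remainder = divmod(TOKENS, GLOBAL_BATCH)
checkpoint_mb = PARAMS * BYTES_PER_PARAM / 1e6
compute_hours = TOKENS / THROUGHPUT / 3600

print(f"optimizer steps: {steps:,} (remainder {remainder})")
print(f"checkpoint size: {checkpoint_mb:.0f} MB")
print(f"time at steady throughput: {compute_hours:.1f} h")
```

The token count is exactly 19,622 global batches, and at a steady 266,000 tok/s the run needs about 10.7 hours, which sits within the reported ~12 hours; the gap plausibly covers evaluation, checkpointing, and startup, though the card does not break it down.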

Evaluation

Results

HellaSwag: 30.5

- For more details, see main.log.

Technical Specifications

Model Architecture and Objective

GPT-3 125M, causal language modeling
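To show where "125M" comes from, here is a parameter count for a GPT-2-style decoder at GPT-3 125M size. Assumptions, not stated in the card: 12 layers, model width 768, learned position embeddings for 2048 positions, the 50,257-token GPT-2 vocabulary, biases on all linear layers, and input/output embeddings tied. The head count (12) does not change the total. `gpt_param_count` is a hypothetical helper.

```python
def gpt_param_count(n_layer=12, d_model=768, vocab_size=50_257, n_ctx=2048):
    """Count parameters of a GPT-2-style decoder with tied input/output embeddings."""
    d = d_model
    embeddings = vocab_size * d + n_ctx * d  # token + learned position embeddings
    per_layer = (
        2 * d                    # ln_1 weight and bias
        + 3 * d * d + 3 * d      # fused QKV projection
        + d * d + d              # attention output projection
        + 2 * d                  # ln_2 weight and bias
        + 4 * d * d + 4 * d      # MLP up-projection
        + 4 * d * d + d          # MLP down-projection
    )
    final_ln = 2 * d             # final layer norm weight and bias
    return embeddings + n_layer * per_layer + final_ln


print(f"{gpt_param_count():,}")
```

Under these assumptions the total is 125,226,240, of which roughly 40M sit in the embedding tables; implementations that pad the vocabulary or untie the output head will land slightly higher.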

Compute Infrastructure

Hardware

2x RTX4090

Software
