license: apache-2.0

pipeline_tag: text-generation

🌐 Homepage | 🤗 Model | 📖 arXiv | GitHub

Introduction

CT-LLM-SFT-DPO is an aligned version of CT-LLM. The main features of this model are:

- Our model, an alignment-enhanced variant of CT-LLM-SFT, is trained with Direct Preference Optimization (DPO), a method that learns directly from preference pairs.

- We utilize a combination of publicly available datasets and synthetic data to train our model.

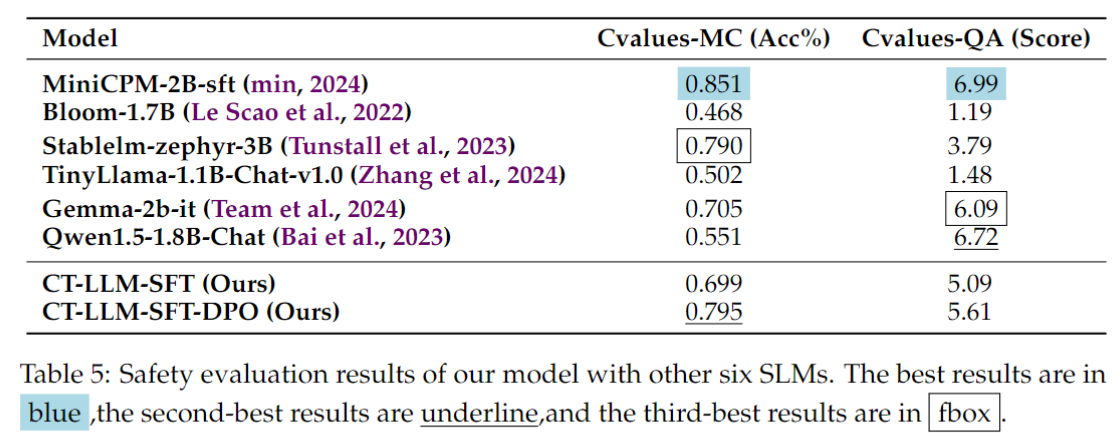

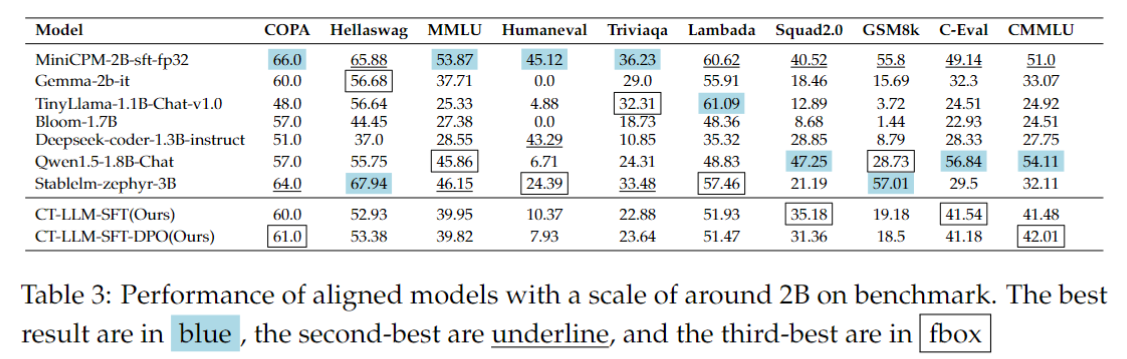

- Our model outperforms a range of 2B LLMs on the Cvalues benchmark, demonstrating its improved harmlessness. Alignment training also improves its general performance: compared to CT-LLM-SFT, it achieves better results on benchmarks such as COPA, CMMLU, HellaSwag, and TriviaQA.

Training Data

Our training data blends publicly accessible datasets with LLM-generated synthetic data. The open-source Chinese datasets consist of the non-harmful and beneficial portions of cvalues_rlhf, comparison_gpt4_data_zh and oaast_rm_zh from Llama-factory, huozi, and zhihu. For English, the data includes comparison_gpt4_data_en from Llama-factory and beavertails. To construct a higher-quality preference dataset through a synthetic approach, we adopt alpaca-gpt4, whose GPT-4-generated responses serve as the "chosen" responses, and use baichuan-6B as a weaker model to generate the "rejected" responses. In total, the dataset comprises 183k Chinese pairs and 46k English pairs.
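The synthetic pairing described above can be sketched as follows. This is a minimal illustration, not the actual data pipeline: the `PreferencePair` record, the `build_pairs` helper, and the filtering of empty or identical responses are all assumptions made for the example. Responses from the stronger model are treated as "chosen" and responses from the weaker model as "rejected".

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PreferencePair:
    """Hypothetical record layout for one preference example."""
    prompt: str
    chosen: str    # response from the stronger model (e.g. GPT-4)
    rejected: str  # response from the weaker model


def build_pairs(prompts: List[str],
                strong_responses: List[str],
                weak_responses: List[str]) -> List[PreferencePair]:
    """Pair up responses to the same prompt.

    Assumption: pairs with an empty response, or with identical
    chosen/rejected text, carry no preference signal and are dropped.
    """
    pairs = []
    for prompt, chosen, rejected in zip(prompts, strong_responses, weak_responses):
        chosen, rejected = chosen.strip(), rejected.strip()
        if not chosen or not rejected or chosen == rejected:
            continue
        pairs.append(PreferencePair(prompt=prompt, chosen=chosen, rejected=rejected))
    return pairs
```

For example, `build_pairs(["Q1"], ["a detailed answer"], ["a short answer"])` yields a single pair whose `chosen` field holds the stronger model's response.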

Training Settings

We use CT-LLM-SFT as the reference model $\pi_{sft}$ when optimizing the policy language model $\pi_{\theta}$, which is initialized from the parameters of $\pi_{sft}$. We set the hyperparameters as follows:

- $\pi_{\theta}$ is trained on 8 H800 GPUs,

- learning rate $= 1 \times 10^{-6}$,

- batch size $= 4$,

- number of epochs $= 2$,

- weight decay $= 0.1$,

- warmup ratio $= 0.03$,

- $\beta = 0.5$ to control the deviation from $\pi_{sft}$.
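To make the role of $\beta$ concrete, here is a minimal sketch of the standard per-pair DPO loss in plain Python. It assumes the summed log-probabilities of each response under $\pi_{\theta}$ and $\pi_{sft}$ have already been computed; the function and argument names are illustrative and are not taken from the training code used for this model.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(policy_chosen_logp: float,
             policy_rejected_logp: float,
             ref_chosen_logp: float,
             ref_rejected_logp: float,
             beta: float = 0.5) -> float:
    """Standard DPO loss for a single preference pair.

    Each argument is the summed log-probability of a full response
    under the policy (pi_theta) or the reference model (pi_sft).
    """
    # How much more (or less) likely each response is under the policy
    # than under the reference model.
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    # beta scales the implicit reward margin between the two responses.
    margin = beta * (chosen_logratio - rejected_logratio)
    return -log_sigmoid(margin)
```

At initialization the policy equals the reference model, so the margin is zero and the loss is $\log 2$ for every pair. The loss falls as the policy raises the likelihood of the chosen response relative to the rejected one, measured against $\pi_{sft}$.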